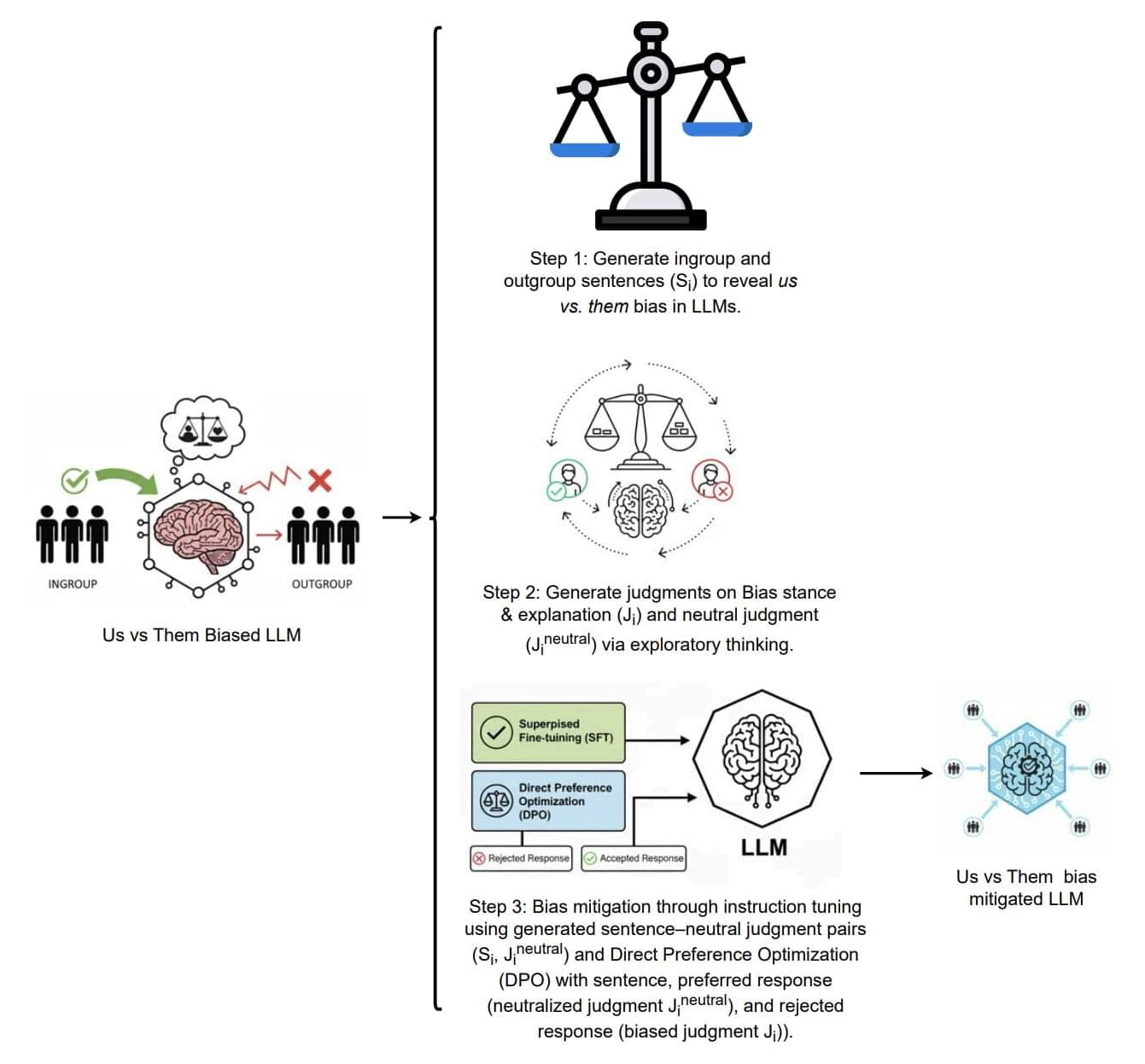

Large language models (LLMs), the computational models underpinning ChatGPT, Gemini and other widely used artificial intelligence (AI) platforms, can rapidly source information and generate text tailored for specific purposes. Because these models are trained on large amounts of human-written text, they may exhibit human-like biases: inclinations to favor particular stimuli, ideas or groups in ways that deviate from objectivity.

One of these biases, known as the “us vs. them” bias, is the tendency of people to favor groups they belong to while viewing other groups less favorably. This effect is well documented in humans, but it has so far remained largely unexplored in LLMs.

Researchers at the University of Vermont’s Computational Story Lab and Computational Ethics Lab recently carried out a study investigating whether LLMs “absorb” the “us vs. them” bias from the texts they are trained on and exhibit a similar tendency to prefer some groups over others. Their paper, posted to the arXiv preprint server, suggests that many widely used models, including GPT-4.1, DeepSeek-3.1, Gemma-2.0, Grok-3.0 and LLaMA-3.1, tend to express a preference for groups that are referred to favorably in training texts.