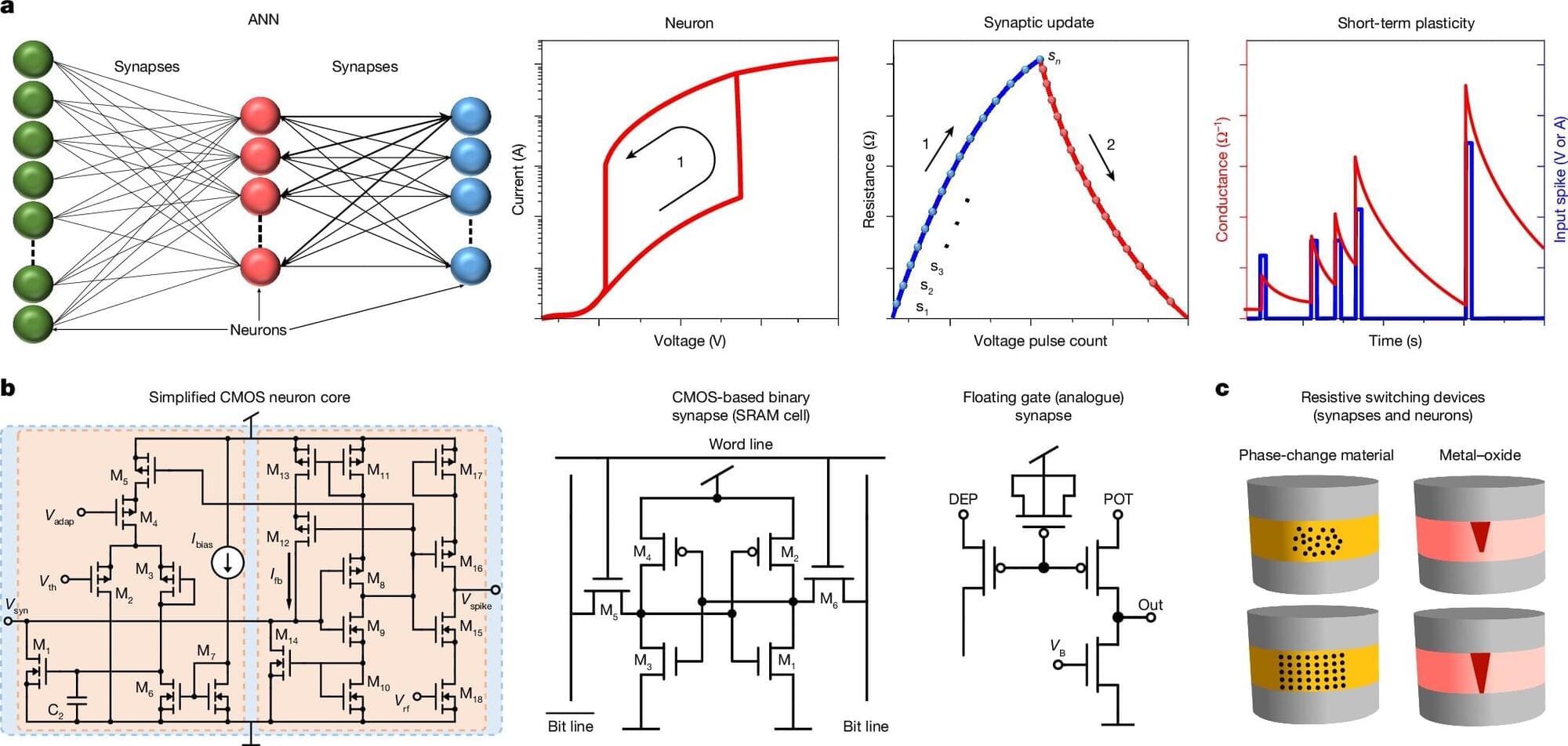

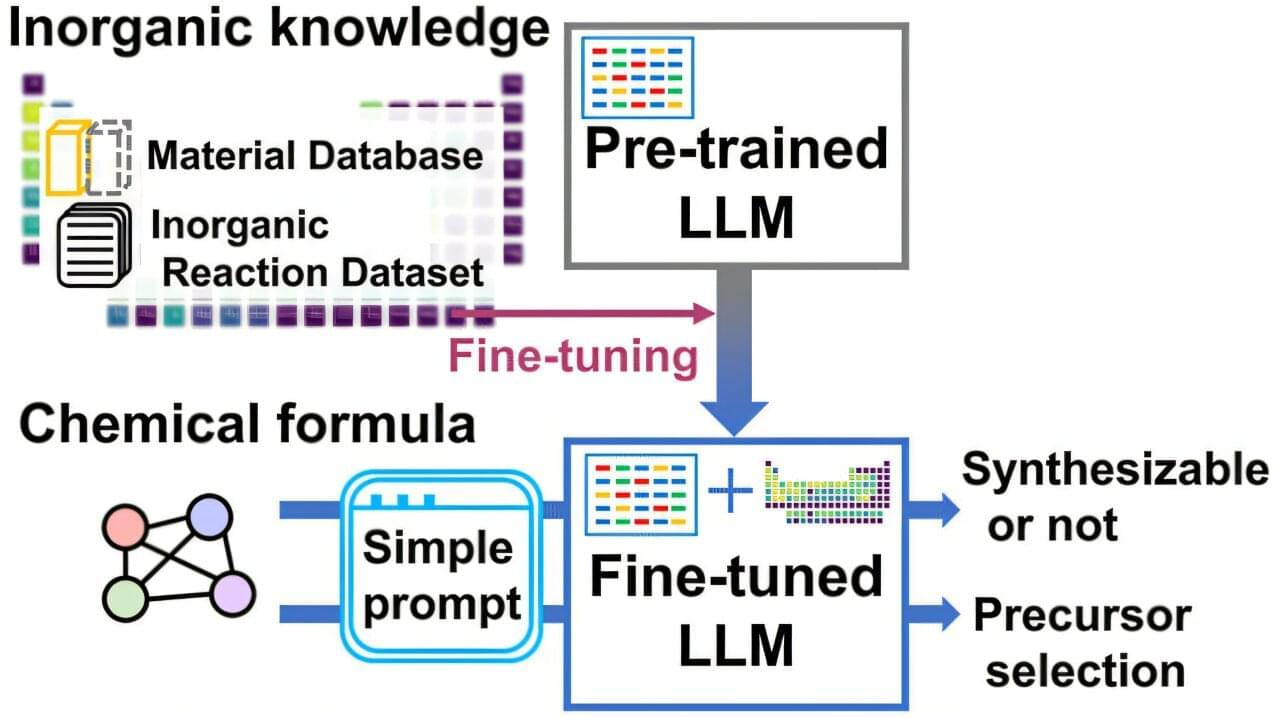

Researchers from the National University of Singapore (NUS) have demonstrated that a single, standard silicon transistor, the fundamental building block of microchips used in computers, smartphones and almost every electronic system, can function like a biological neuron and synapse when operated in a specific, unconventional way.

The research team, led by Associate Professor Mario Lanza from the Department of Materials Science and Engineering at the College of Design and Engineering, NUS, presents a highly scalable and energy-efficient solution for hardware-based artificial neural networks (ANNs).

This brings neuromorphic computing, in which chips could process information more efficiently in a manner similar to the human brain, closer to reality. The study was published in the journal Nature.