Years after outsourcing marketing and customer service gigs to AI, the Swedish company Klarna is looking to hire its humans back.

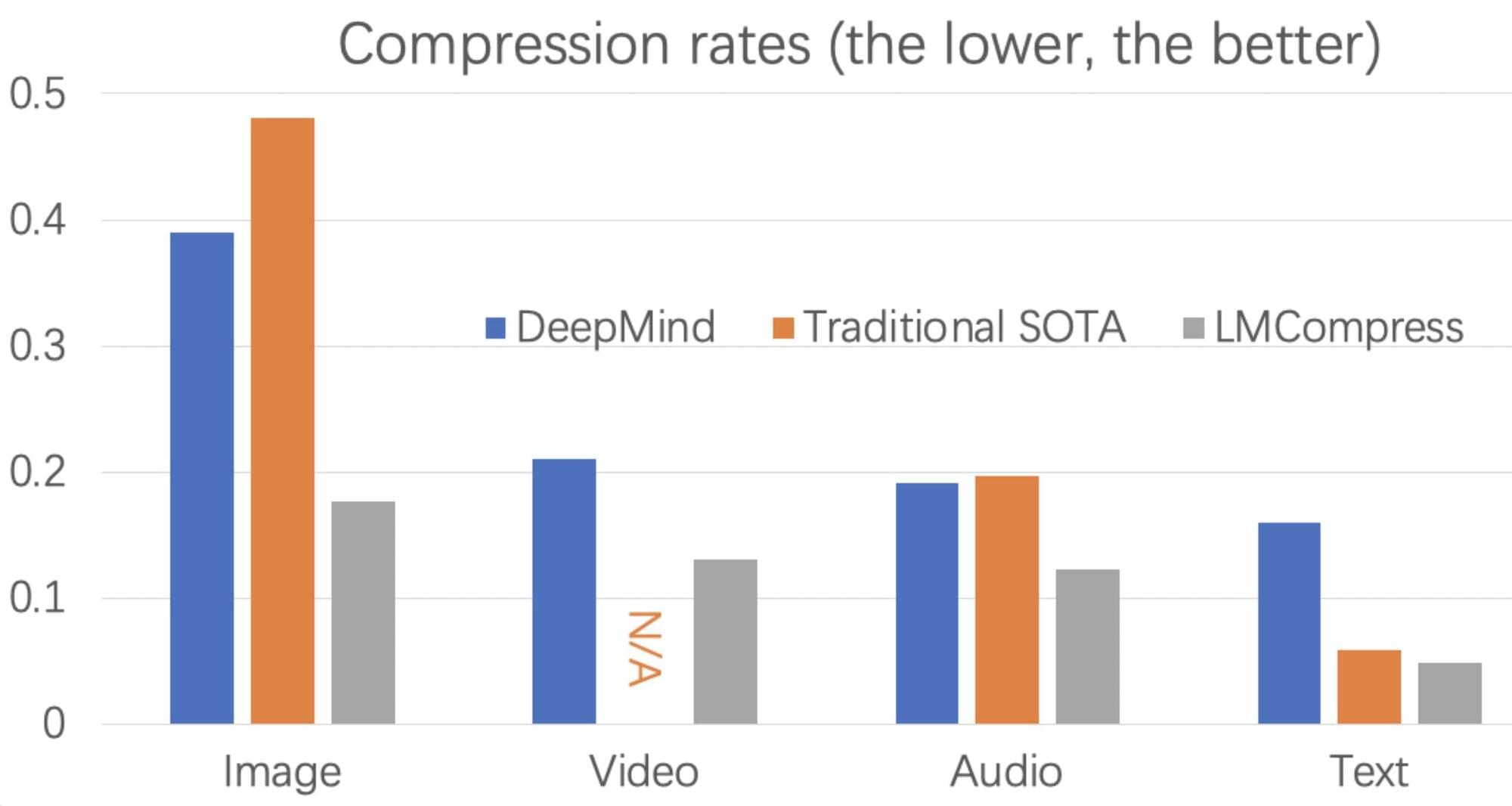

People store large quantities of data on their electronic devices and transfer some of this data to others, whether for professional or personal reasons. Data compression methods are thus of the utmost importance, as they can boost the efficiency of devices and communications, making users less reliant on cloud data services and external storage devices.

Researchers at the Central China Institute of Artificial Intelligence, Peng Cheng Laboratory, Dalian University of Technology, the Chinese Academy of Sciences and the University of Waterloo recently introduced LMCompress, a new data compression approach based on large language models (LLMs), such as the model underpinning the AI conversational platform ChatGPT.

Their proposed method, outlined in a paper published in Nature Machine Intelligence, was found to compress data significantly more effectively than classical data compression algorithms.
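Compressors built on predictive models generally rest on a standard information-theory fact: if a model assigns probability p to the next symbol, an entropy coder such as arithmetic coding can spend about -log2(p) bits on it, so better predictions mean fewer bits. The minimal sketch below illustrates only that principle. It assumes a toy adaptive byte-frequency model standing in for an LLM and computes the ideal code length rather than running a real coder; it is not LMCompress's implementation, and the function name `ideal_code_length_bits` is hypothetical.

```python
import math


def ideal_code_length_bits(data: bytes) -> float:
    """Total of -log2 p(byte) under an adaptive, Laplace-smoothed order-0 model.

    Hypothetical stand-in for an LLM: each byte is predicted from counts of the
    bytes seen so far. An arithmetic coder driven by the same predictions would
    emit output within a couple of bits of this total.
    """
    counts = [0] * 256  # one counter per possible byte value
    total_bits = 0.0
    for seen, byte in enumerate(data):
        # Laplace smoothing keeps every byte value at nonzero probability;
        # the 256 probabilities at each step sum to exactly 1.
        p = (counts[byte] + 1) / (seen + 256)
        total_bits -= math.log2(p)
        counts[byte] += 1
    return total_bits


sample = b"ab" * 64                 # 128 bytes of highly repetitive input
baseline_bits = 8 * len(sample)     # plain 8-bit-per-byte encoding
model_bits = ideal_code_length_bits(sample)
print(f"{baseline_bits} bits raw vs {model_bits:.1f} bits with the adaptive model")
```

This order-0 model ignores context entirely and only learns which bytes are common. A language model instead conditions each prediction on everything that came before, which lets it assign much higher probabilities to the actual next symbol and therefore spend fewer bits.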

Persuasion is a fundamental aspect of communication, influencing decision-making across diverse contexts, from everyday conversations to high-stakes scenarios such as politics, marketing, and law. The rise of conversational AI systems has significantly expanded the scope of persuasion, introducing both opportunities and risks. AI-driven persuasion can be leveraged for beneficial applications, but also poses threats through manipulation and unethical influence. Moreover, AI systems are not only persuaders, but also susceptible to persuasion, making them vulnerable to adversarial attacks and bias reinforcement. Despite rapid advancements in AI-generated persuasive content, our understanding of what makes persuasion effective remains limited due to its inherently subjective and context-dependent nature. In this survey, we provide a comprehensive overview of computational persuasion, structured around three key perspectives: AI as a Persuader, which explores AI-generated persuasive content and its applications; AI as a Persuadee, which examines AI’s susceptibility to influence and manipulation; and AI as a Persuasion Judge, which analyzes AI’s role in evaluating persuasive strategies, detecting manipulation, and ensuring ethical persuasion. We introduce a taxonomy for computational persuasion research and discuss key challenges, including evaluating persuasiveness, mitigating manipulative persuasion, and developing responsible AI-driven persuasive systems. Our survey outlines future research directions to enhance the safety, fairness, and effectiveness of AI-powered persuasion while addressing the risks posed by increasingly capable language models.

A new AI model from Tokyo-based Sakana, called the Continuous Thought Machine, mimics how the human brain works by thinking in real-time “ticks” instead of layers. This brain-inspired AI allows each neuron to decide when it’s done thinking, showing signs of what experts call proximate consciousness. With no fixed depth and a flexible thinking process, it marks a major shift away from traditional Transformer models in artificial intelligence.

🔍 What’s Inside:

Sakana’s brain-like AI thinks in real-time ticks instead of fixed layers.

https://shorturl.at/UPSTt

Deep Agent’s new MCP connects AI to over 5,000 real-world tools via Zapier.

http://deepagent.abacus.ai

Also visit: http://chatllm.abacus.ai

Alibaba’s ZEROSEARCH fakes Google results and slashes training costs by 88%.

https://www.alizila.com/alibabas-new–…

📊 Why It Matters: AI is breaking out of the lab—thinking like brains, automating your work, and reshaping global power. From self-regulating neurons to trillion-dollar GPU wars, this is where the future starts. #ai #robotics #consciousai.

Honor phones run Google’s new Veo 2 model before Pixel even gets access.

https://shorturl.at/Ki0YP

Tencent drops a deepfake engine with shocking face accuracy.

https://github.com/Tencent/HunyuanCustom

Apple uses on-device AI in iOS 19 to predict and extend battery life.

https://www.theverge.com/news/665249/.…

Saudi Arabia launches a \$940B AI empire with support from Musk and Altman.

https://techcrunch.com/2025/05/12/sau…

🎥 What You’ll See:

How Sakana’s AI mimics human neurons and rewrites how machines process thought.

Why Abacus’ Deep Agent now acts like a fully autonomous digital worker.

How Alibaba trains top AI models without using live search engines.

Why Google let Honor debut its video AI before its own users.

What Tencent’s face-swapping tech means for the future of video generation.

How iPhones will soon think ahead to save power.

Why Saudi Arabia’s GPU superpower plan could shake the entire AI industry.

📊 Why It Matters:

AI is breaking out of the lab—thinking like brains, automating your work, and reshaping global power. From self-regulating neurons to trillion-dollar GPU wars, this is where the future starts.

#ai #robotics #consciousai

Brighter with Herbert