Physics-informed AI reveals brain fluid flow from MRI, key to understanding brain health and neurological diseases.

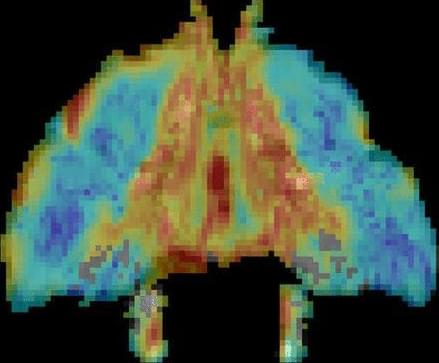

A research team from Tohoku University, Shin-Etsu Chemical Co., Ltd., and École Polytechnique Fédérale de Lausanne (EPFL) has invented a new way to efficiently guide spin waves around sharp corners with minimal loss—representing an exciting discovery for energy-efficient computing. Using a two-dimensional magnonic crystal—a copper (Cu) film with a hexagonal array of tiny holes placed on a magnetic garnet film—the team showed through calculations that spin waves travel along a Z-shaped path more than 5,000 times more efficiently than in conventional waveguides.

As artificial intelligence and data centers consume ever more electricity, heat from conventional electronics has become a serious problem. Spin waves are ripples of magnetization in a magnetic material that can carry information with far less heat than moving electrons, making them promising for reduced-energy computing. However, spin waves weaken quickly as they travel, especially when a waveguide is bent. This signal loss has long been the biggest obstacle to building practical spin wave circuits.

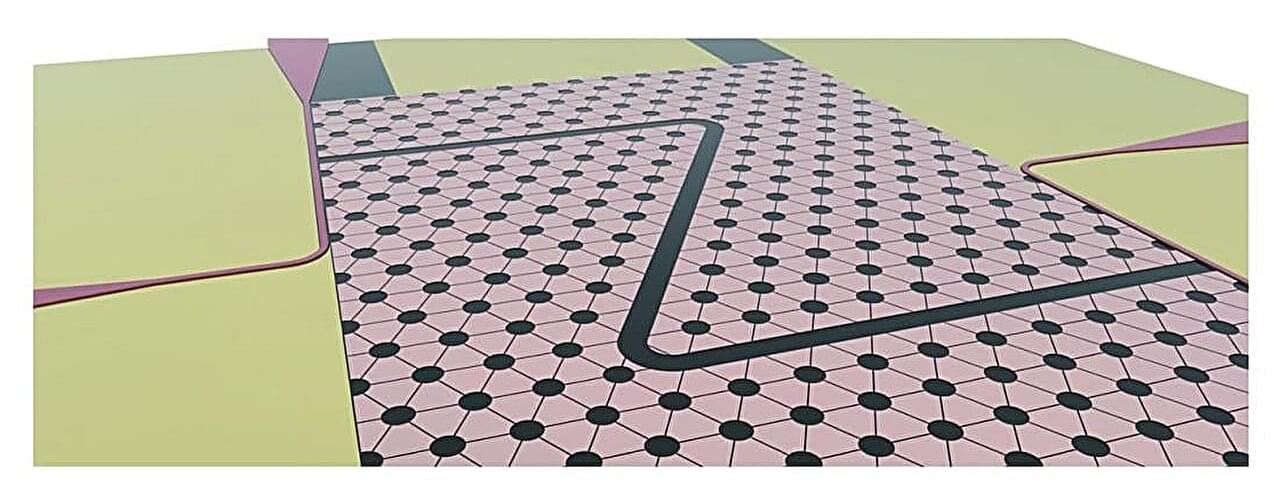

In a grandfather clock, a pendulum swings back and forth and this periodic motion is maintained using the energy stored in its suspended weights. This is done with the help of the escapement mechanism, which converts the gravitational energy of the weights into impulses that drive the pendulum, which then moves the clock’s gears, which move its hands.

A group of researchers recently designed a quantum version of the pendulum clock. According to their new study, published in Physical Review A, this quantum pendulum clock can operate autonomously and is more accurate than previous quantum clocks.

Michael Levin dives deep into the intersection of biology and artificial intelligence, exploring how individual units—whether cells or humans—can form cohesive, goal-driven systems.

Google DeepMind’s Demis Hassabis says humanity may already be standing in the foothills of the singularity. AI agents are now coding, researching, planning, paying, helping with science, and cutting real work from days to minutes. The big question is no longer whether AI is perfect. It’s whether imperfect AI has already become useful enough to speed up everything around it.

📌 What You’ll See:

Google DeepMind’s warning that we are entering the foothills of the singularity.

SOURCE: https://www.axios.com/2026/05/26/deep…

Google’s new Gemini for Science tools built to speed up scientific discovery.

SOURCE: https://blog.google/innovation-and-ai…

AWS letting autonomous AI agents make payments and complete transactions.

SOURCE: https://aws.amazon.com/about-aws/what…

AxiomProver helping prove new math results in Lean and Mathlib.

SOURCE: https://arxiv.org/abs/2602.05090

Biohub’s new world model of protein biology trained across billions of sequences.

SOURCE: https://biohub.ai/esm/protein

ARC-AGI-3 showing the huge gap between today’s frontier AI and human reasoning.

SOURCE: https://aiforautomation.io/news/2026-…

🚨 Why It Matters

This is bigger than another AI model update. Google DeepMind is now openly talking about the singularity, while AI agents are already starting to speed up coding, science, business, and research. Some experts think AGI may be closer than expected, while others say current AI still lacks true intelligence. Either way, the AI race is shifting fast from chatbots into agents that can plan, act, build, discover, and change real workflows.

#google #singularity #ai

AutoScientists changes the game by creating a decentralized “team” of AI agents. Rather than relying on a central planner, these digital scientists look at the shared data and self-organize into specialized groups around the most exciting hypotheses. Before they spend valuable computer processing power on an experiment, they ruthlessly critique each other’s proposals. Crucially, they keep a collective log of both their successes and failures, ensuring the entire system avoids redundant work.

Scientific research proceeds through iterative cycles of hypothesis generation, experiment design, execution, and revision, often requiring researchers to explore multiple competing directions as evidence accumulates and priorities shift. LLM agents can automate parts of this process, but existing agents either concentrate reasoning within a single research thread or coordinate through a central planner with fixed objectives. As a result, they struggle to sustain parallel exploration across research directions or reorganize as promising and unproductive directions emerge over time.

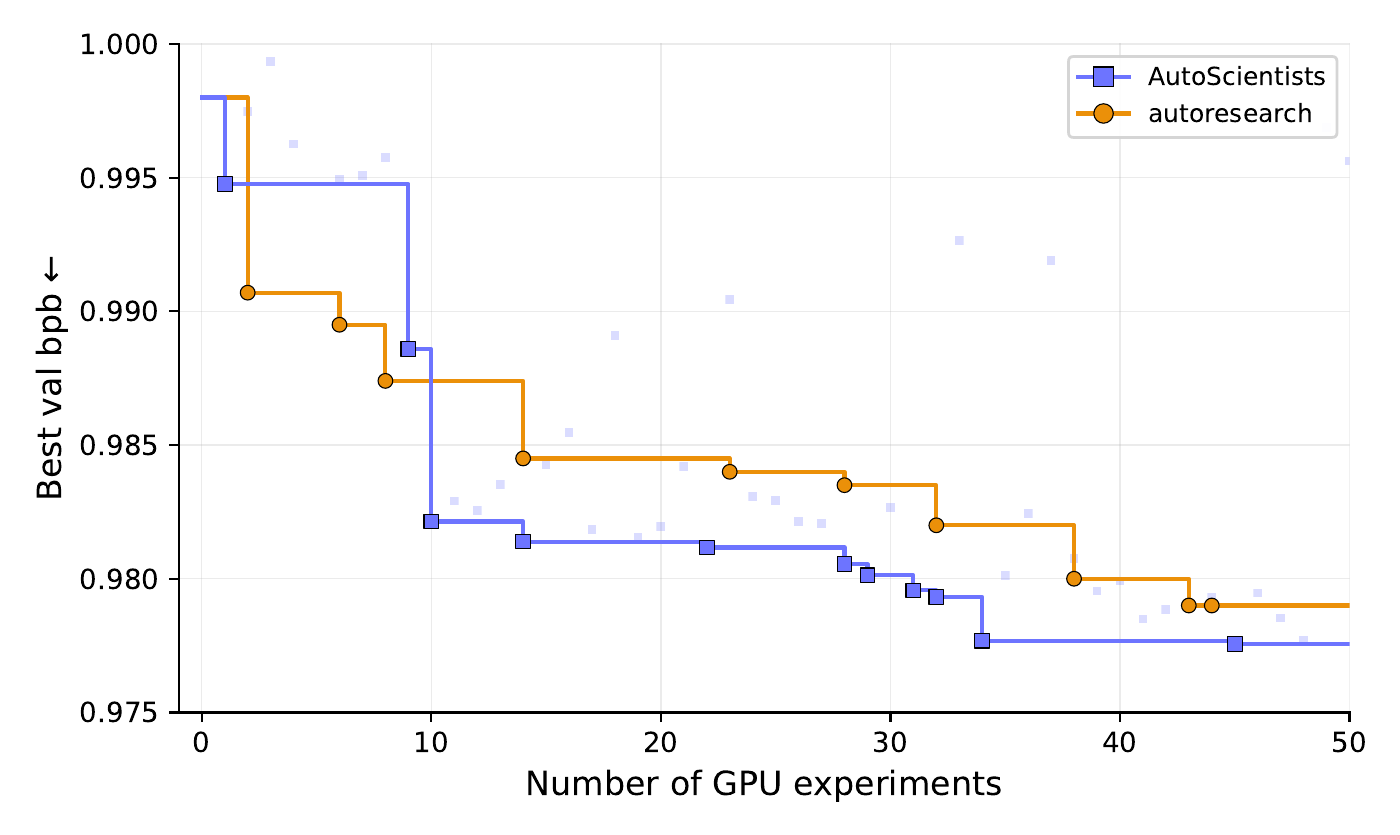

We introduce AutoScientists, a decentralized team of AI agents for long-running computational scientific experimentation. Rather than following decisions from a central orchestrator, agents independently interpret a shared experimental state, self-organize into teams around research directions, critique and filter proposals with a discussion phase before committing experimental compute, and exchange both successful and failed findings across teams to avoid redundant exploration.
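The loop described above (shared state, self-organized teams, a discussion phase before compute is spent, and a log that keeps failures as well as successes) can be pictured with a small toy sketch. This is not the AutoScientists implementation: every name here (`SharedState`, `form_teams`, `discuss`, `run_round`) is hypothetical, and the agents, critics, and experiments are plain Python callables standing in for LLM-driven components.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    direction: str
    proposal: str
    score: float
    accepted: bool


@dataclass
class SharedState:
    """Experimental state every agent can read; there is no central planner."""
    best_score: float = 0.0
    log: list = field(default_factory=list)  # successes AND failures

    def already_tried(self, proposal: str) -> bool:
        return any(f.proposal == proposal for f in self.log)


def form_teams(agent_interests: dict) -> dict:
    """Agents self-organize: each joins the team for the direction it chose."""
    teams: dict = {}
    for agent, direction in agent_interests.items():
        teams.setdefault(direction, []).append(agent)
    return teams


def discuss(proposals, state: SharedState, critics):
    """Discussion phase: drop anything already tried or rejected by a critic."""
    return [p for p in proposals
            if not state.already_tried(p) and all(c(p) for c in critics)]


def run_round(teams, propose, critics, experiment, state: SharedState) -> None:
    """One round: each team proposes, filters, then runs what survives."""
    for direction in teams:
        for proposal in discuss(propose(direction), state, critics):
            score = experiment(proposal)  # compute is spent only here
            accepted = score > state.best_score
            if accepted:
                state.best_score = score
            # Failed attempts are logged too, so other teams can skip them.
            state.log.append(Finding(direction, proposal, score, accepted))
```

Because `discuss` consults the shared log, a proposal that one team has already run is filtered out before another team spends compute on it, which is the redundancy-avoidance idea the abstract describes.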

Under matched experimental budgets, AutoScientists outperforms prior agentic systems across biomedical machine learning, language-model training optimization, and protein fitness prediction. On BioML-Bench, spanning biomedical imaging, protein engineering, single-cell omics, and drug discovery, AutoScientists achieves a mean leaderboard percentile of 74.4% across 24 tasks, improving over the strongest prior biomedical agent by +8.33%. On GPT training optimization, AutoScientists reaches a target validation bits-per-byte 1.9× faster than autoresearch and continues discovering improvements from a stronger starting champion where the single-agent approach finds none (7 vs. 0 accepted improvements). On ProteinGym fitness prediction, AutoScientists discovers a method for ACE2–Spike binding that improves over the current state-of-the-art model by +12.5% Spearman correlation. Applied without modification to all 217 ProteinGym assays, the same method improves over the prior state of the art by +6.5% in Spearman correlation.
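The ProteinGym results above are reported as Spearman correlation, which measures how well a model's predicted ranking of variants agrees with the measured ranking. As a minimal sketch of the metric itself (not of the AutoScientists method), it can be computed as the Pearson correlation of average ranks. The function names are illustrative, and the sketch assumes neither input is constant.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)
```

Only the ordering matters: any monotonically increasing relationship between predicted and measured fitness scores gives a correlation of 1, even if the relationship is far from linear.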

Claude Code just dropped “dynamic workflows” and it’s pretty cool.

You type “create a workflow” or turn on “ultracode” in the effort menu and it spins up hundreds of parallel agents that check each other’s work.

Today we’re introducing dynamic workflows in Claude Code, helping Claude take on the most challenging tasks end-to-end. Work you’d normally plan in quarters now finishes in days. Claude dynamically writes orchestration scripts that run tens to hundreds of parallel subagents in a single session, checking its work before anything reaches you.

Some problems are too big for one pass by a single agent, especially in complex, legacy codebases: a bug hunt across an entire service, a migration that touches hundreds of files, a plan you want stress-tested from every angle before you commit to it. Dynamic workflows can handle all of these end-to-end.
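The general pattern described here, fanning a large job out to many parallel workers and checking each result before reporting it, can be sketched with Python's `asyncio`. This is an illustration of the pattern only, not Claude Code's orchestration scripts or API: `run_subagent` and `verify` are hypothetical stand-ins that a real system would replace with model calls, and `max_parallel` is an assumed knob.

```python
import asyncio


async def run_subagent(task: str) -> str:
    """Hypothetical stand-in for one subagent working on one task."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"patched {task}"


async def verify(task: str, result: str) -> bool:
    """Hypothetical checker: a second pass that reviews the first result."""
    await asyncio.sleep(0)
    return task in result


async def orchestrate(tasks, max_parallel: int = 8) -> dict:
    """Run every task concurrently, at most max_parallel at a time."""
    gate = asyncio.Semaphore(max_parallel)

    async def one(task: str):
        async with gate:
            result = await run_subagent(task)
            ok = await verify(task, result)
            return task, result, ok

    outcomes = await asyncio.gather(*(one(t) for t in tasks))
    # Only results that passed verification are reported back.
    return {task: result for task, result, ok in outcomes if ok}


results = asyncio.run(orchestrate([f"file_{i}.py" for i in range(100)]))
```

The semaphore bounds how many workers run at once, and filtering on the verification flag means unchecked output never reaches the caller.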

Dynamic workflows are available today in research preview in the Claude Code CLI, Desktop, and the VS Code extension for Max, Team, and Enterprise (if admin enabled) plans, as well as on the Claude API, on Amazon Bedrock, Vertex AI, and Microsoft Foundry.

Artificial intelligence is advancing rapidly, but today’s computers are reaching their physical and energy limits. Now, scientists are exploring a revolutionary solution: light-matter particles known as polaritons. These exotic hybrid particles combine the properties of light and matter, allowing information to move at incredible speeds while consuming far less energy than traditional electronic chips.

In this video, we explore how light-based computing could transform the future of AI, why researchers believe polariton technology may outperform conventional processors, and what this breakthrough could mean for machine learning, robotics, quantum technologies, and the future of computing itself.

Could this be the next major leap beyond silicon chips? And are we entering an era where AI operates at near light speed?

Watch to discover the science behind one of the most exciting technological breakthroughs of the decade.

#AI, #ArtificialIntelligence, #QuantumComputing, #FutureTechnology, #Physics, #MachineLearning, #Science, #Technology, #Innovation, #NeuralNetworks, #DeepLearning, #QuantumPhysics, #TechNews, #Computing, #LightMatterParticles

A major breakthrough in artificial intelligence may have arrived: scientists have created an artificial neuron capable of communicating with other neurons.

Inspired by the human brain, this technology could allow machines to process information in a far more biological and efficient way. Instead of traditional computing architectures, future systems could operate more like living neural networks.

In this video we explore how artificial neurons work, why this breakthrough matters, and how it could reshape AI, robotics, and neuroscience.

#ArtificialNeuron, #ArtificialIntelligence, #Neuroscience, #FutureTechnology, #AIResearch, #NeuralNetworks

China has begun the world’s first space experiment on human artificial embryos, with samples now aboard its space station and the study progressing smoothly, scientists announced Wednesday.

Delivered by the Tianzhou-10 cargo craft launched earlier this week, the human artificial embryo samples have been installed in the space station’s experimental module by the orbiting taikonauts, according to the Technology and Engineering Center for Space Utilization under the Chinese Academy of Sciences, which is in charge of the experiment.

“The experiment is going very well,” said Yu Leqian, the project leader for the artificial embryo space science experiment. “A pre-set automated system changes the culture medium for the samples every day.” According to Yu, through this study, scientists aim to conduct preliminary research on issues related to long-term human habitation, survival and reproduction in space.