In the study, the researchers also explored how the accelerated maturation of later-born inhibitory neurons is regulated. They identified specific genes involved in this process and uncovered how these genes control when and to what extent a cell reads and uses different parts of its genetic code. The faster development of later-born inhibitory neurons turned out to be linked to changes in the developmental potential of the precursor cells that generate them. These changes are, in turn, triggered by a reorganization of the so-called ‘chromatin landscape.’

In simple terms, this means that cells adjust the accessibility of certain regions of DNA in the cell nucleus, making key instructions on how and when to develop more readable.

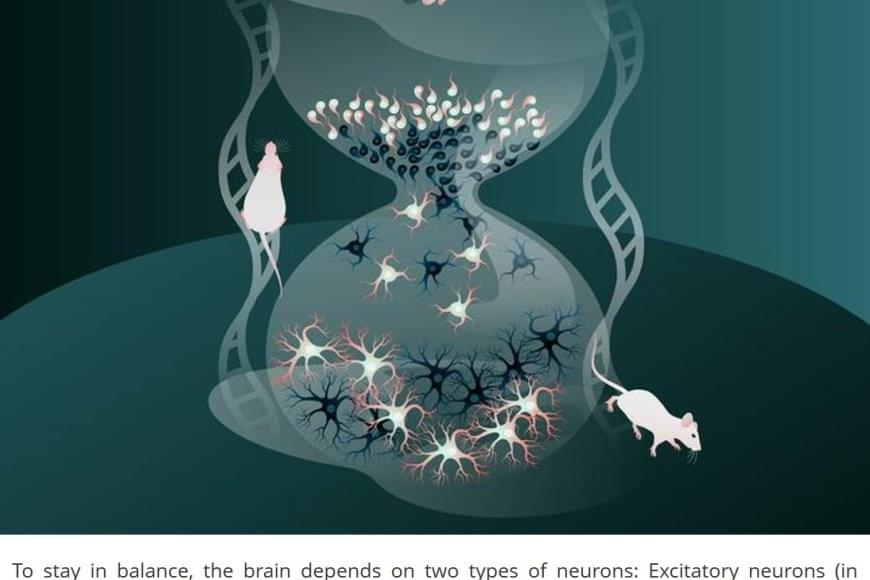

The human brain is made up of billions of nerve cells, or neurons, that communicate with each other in vast, interconnected networks. For the brain to function reliably, there needs to be a fine balance between two types of cells: excitatory neurons, which pass on information and increase activity, and inhibitory neurons, which limit activity and prevent other neurons from becoming too active or firing out of control. This balance between excitation and inhibition is essential for a healthy, stable brain.

Inhibitory neurons are generated during brain development through the division of progenitor cells, immature cells that are not yet specialized but are already on the path to becoming neurons. Based on findings in mice, the new study uncovered a surprising feature of this essential process: cells born later in development mature much more quickly than those produced earlier.

“This faster growth helps later-born neurons catch up to those produced earlier, so that by the time all these neurons are incorporated into neural networks, they are at a similar stage of development,” said a research group leader. “This is important, as otherwise, earlier-born neurons—having had more time to form connections—could end up with far more synaptic links than those created later. Without this adjustment, the network could be thrown off balance, and individual cells would have too many or too few connections.”