Please no, we don’t need a machine-learning troll farm.

The idea that humans should merge with AI is very much in the air these days. It is offered both as a way for humans to avoid being outmoded by AI in the workplace, and as a path to superintelligence and immortality. For instance, Elon Musk recently commented that humans can escape being outmoded by AI by “having some sort of merger of biological intelligence and machine intelligence.”1 To this end, he’s founded a company, Neuralink. One of its first aims is to develop “neural lace,” an injectable mesh that connects the brain directly to computers. Neural lace and other AI-based enhancements are supposed to allow data from your brain to travel wirelessly to your digital devices or to the cloud, where massive computing power is available.

For many transhumanists, uploading is key to the mind-machine merger.

Perhaps these sorts of enhancements will turn out to be beneficial, but to see if this is the case, we will need to move beyond all the hype. Policymakers, the public, and even AI researchers themselves need a better idea of what is at stake. For instance, if AI cannot be conscious, then if you substituted a microchip for the parts of the brain responsible for consciousness, you would end your life as a conscious being. You’d become what philosophers call a “zombie”—a nonconscious simulacrum of your earlier self. Further, even if microchips could replace parts of the brain responsible for consciousness without zombifying you, radical enhancement is still a major risk. After too many changes, the person who remains may not even be you. Each human who enhances may, unbeknownst to them, end their life in the process.

We all know that dogs are a man’s best friend, but has the world really come to this?

On a particularly blustery day in New York City, I found myself (as one with the income bracket of a writer sporadically does) on the Upper East Side, amidst tribes of cooler-than-thou high school students, dedicated dog walkers and women wearing hats that looked as though a Shar-Pei had potentially suffered in the making of them.

Nonetheless, I braved the chilly air and found solace in the Cooper Hewitt Museum, the design institution that is part of the Smithsonian. Upon entering, visitors are greeted with a pen tool resembling a magic wand, which serves as an interactive notekeeper for items they are interested in. “How innovative.” Perfect for a museum about innovation, am I right? With my magic wand in hand, I entered the Narnia of objects, with the first stop being an exhibition titled “Access and Ability.” The exhibition features “artifacts” designed for people with disabilities, and I was surprised to find among the various innovations a very cute-looking puppy that I instinctively wanted to pet. But I did not, for fear of being arrested, à la Ocean’s 12.

Biomedical engineers at Duke University have devised a machine learning approach to modeling the interactions between complex variables in engineered bacteria that would otherwise be too cumbersome to predict. Their algorithms are generalizable to many kinds of biological systems.

In the new study, the researchers trained a neural network to predict the circular patterns that would be created by a biological circuit embedded into a bacterial culture. The system worked 30,000 times faster than the existing computational model.

To further improve accuracy, the team devised a method for retraining the machine learning model multiple times so that the answers from the separate runs could be compared. Then they used it to solve a second biological system that is computationally demanding in a different way, showing the algorithm can work for disparate challenges.
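The idea of training a model several times and comparing the answers can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Duke team's method: a simple least-squares line fit stands in for the neural network, each "retraining" uses a different random subset of the data, and the names `fit_line`, `train_ensemble`, `predict_with_agreement` and the `tolerance` parameter are all hypothetical.

```python
import random
import statistics


def fit_line(points):
    """Least-squares fit of y = a*x + b to a list of (x, y) pairs."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    mean_x = statistics.fmean(xs)
    mean_y = statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    a = sxy / sxx
    return a, mean_y - a * mean_x


def train_ensemble(points, n_models=5, fraction=0.8, seed=0):
    """Train several stand-in models, each on a different random subset.

    Assumption: the x values in `points` are distinct, so every subset
    of two or more points gives a well-defined line.
    """
    rng = random.Random(seed)
    subset_size = max(2, int(len(points) * fraction))
    return [fit_line(rng.sample(points, subset_size)) for _ in range(n_models)]


def predict_with_agreement(models, x, tolerance):
    """Return the mean prediction and whether all models agree within tolerance."""
    predictions = [a * x + b for a, b in models]
    spread = max(predictions) - min(predictions)
    return statistics.fmean(predictions), spread <= tolerance
```

A prediction would be trusted only when the second return value is true; when the separately trained models disagree, the input could be passed to a slower but more reliable model instead.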

Thinking of #Upskilling? Check this out: If you want to learn machine learning and artificial intelligence, start here:

Two years ago, I started learning machine learning online on my own. I shared my journey through YouTube and my blog. I had no idea what I was doing. I’d never coded before but decided I wanted to learn machine learning.

“I want to learn machine learning and artificial intelligence, where do I start?” Here.

The robot can move its eyes, eyebrows, lips and “other muscles,” the company said.

John Mullan, professor of English literature at University College London, wrote an article in The Guardian titled “We need robots to have morals. Could Shakespeare and Austen help?”

Using great literature to teach ethics to machines is a dangerous game. The classics are a moral minefield.

When he wrote the stories in I, Robot in the 1940s, Isaac Asimov imagined a world in which robots do all humanity’s tedious or unpleasant jobs for them, but where their powers have to be restrained. They are programmed to obey three laws. A robot may not injure a human being, even through inaction; a robot must obey a human being (except where this would contradict the previous law); a robot must protect itself (unless this contradicts either of the previous laws).

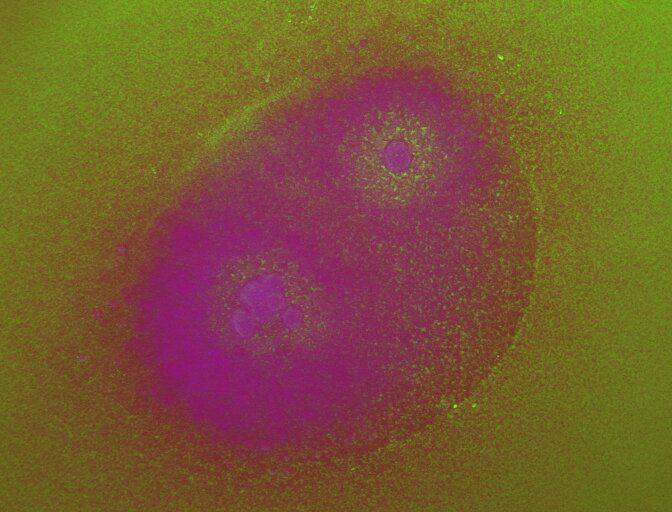

When I imagine the inner workings of a robot, I think hard, cold mechanics running on physics: shafts, wheels, gears. Human bodies, in contrast, are more of a contained molecular soup operating on the principles of biochemistry.

Yet similar to robots, our cells are also attuned to mechanical forces—just at a much smaller scale. Tiny pushes and pulls, for example, can urge stem cells to continue dividing, or nudge them into maturity to replace broken tissues. Chemistry isn’t king when it comes to governing our bodies; physical forces are similarly powerful. The problem is how to tap into them.

In a new perspectives article in Science, Dr. Khalid Salaita and graduate student Aaron Blanchard from Emory University in Atlanta point to DNA as the solution. The team painted a futuristic picture of DNA mechanotechnology, in which we use DNA machines to control our biology. Rather than a toxic chemotherapy drip, for example, a cancer patient may one day be injected with DNA nanodevices that help their immune cells better grab onto—and snuff out—cancerous ones.