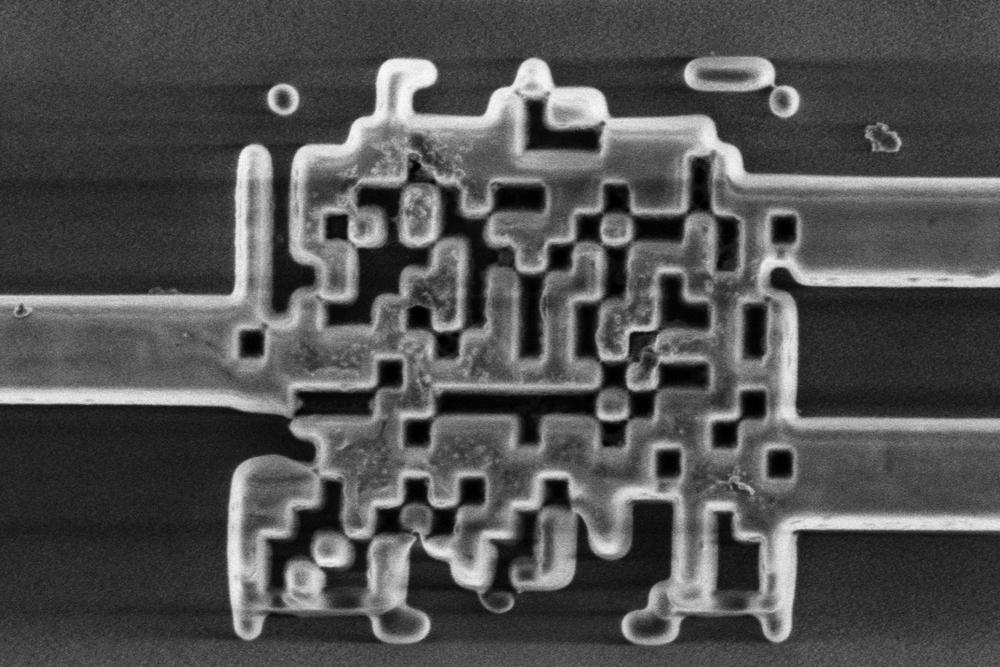

Another important question is the extent to which continued increases in computational capacity are economically viable. The Stanford Index reports a 300,000-fold increase in capacity since 2012. But in the same month that the Report was issued, Jerome Pesenti, Facebook’s AI head, warned that “The rate of progress is not sustainable…If you look at top experiments, each year the cost is going up 10-fold. Right now, an experiment might be in seven figures but it’s not going to go to nine or 10 figures, it’s not possible, nobody can afford that.”
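The arithmetic behind Pesenti's warning is easy to check. The sketch below is only illustrative: it assumes a starting cost at the low end of "seven figures" ($1 million) and takes the 10-fold yearly growth in the quote at face value. The function name `years_until` is hypothetical, introduced here for the example.

```python
def years_until(cost_now: int, target: int, growth_per_year: int = 10) -> int:
    """Count whole years of compounding until cost_now reaches or exceeds target."""
    years = 0
    cost = cost_now
    while cost < target:
        cost *= growth_per_year  # assumed: cost multiplies by a fixed factor each year
        years += 1
    return years


# Assumed starting point: the smallest seven-figure cost.
seven_figures = 1_000_000
nine_figures = 100_000_000
ten_figures = 1_000_000_000

print(years_until(seven_figures, nine_figures))
print(years_until(seven_figures, ten_figures))
```

Under those assumptions, a seven-figure experiment reaches nine figures in two years and ten figures in three, which is why a 10-fold annual increase cannot continue for long.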

AI has feasted on low-hanging fruit, like search engines and board games. Now comes the hard part: distinguishing causal relationships from coincidences, making high-level decisions in the face of unfamiliar ambiguity, and matching the wisdom and common sense that humans acquire by living in the real world. These are the capabilities that are needed in complex applications such as driverless vehicles, health care, accounting, law, and engineering.

Despite the hype, AI has had very little measurable effect on the economy. Yes, people spend a lot of time on social media and playing ultra-realistic video games. But does that boost or diminish productivity? Technology in general and AI in particular are supposed to be creating a new New Economy, where algorithms and robots do all our work for us, increasing productivity by unheard-of amounts. The reality has been the opposite. For decades, U.S. productivity grew by about 3% a year. Then, after 1970, it slowed to 1.5% a year, then 1%, now about 0.5%. Perhaps we are spending too much time on our smartphones.