Elon Musk’s xAI has filed a lawsuit against a former engineer for allegedly stealing AI secrets worth hundreds of millions of dollars to benefit OpenAI, highlighting the intense competition and corporate espionage in the AI industry. Questions to inspire discussion. AI Security and Corporate Espionage. 🔒 Q: How did the XA.

Category: robotics/AI – Page 197

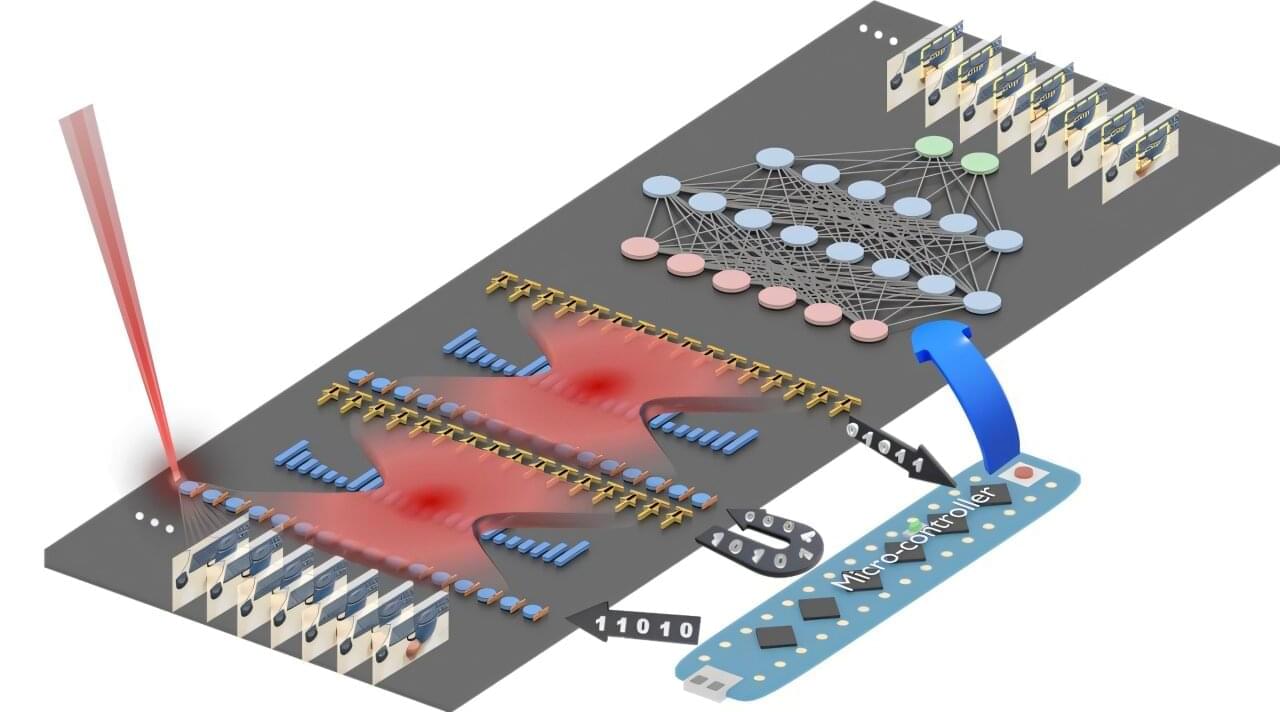

Light-based chip can boost power efficiency of AI tasks up to 100-fold

A team at the University of Florida has developed a new kind of computer chip that uses light alongside electricity to perform one of the most power-intensive parts of artificial intelligence: image recognition and similar pattern-finding tasks. Using light dramatically cuts the power needed for these tasks, with efficiency 10 or even 100 times that of current chips performing the same calculations. This approach could help rein in the enormous demand for electricity that is straining power grids while enabling higher-performance AI models and systems.

Artificial intelligence (AI) systems are increasingly central to technology, powering everything from facial recognition to language translation. But as AI models grow more complex, they consume vast amounts of electricity—posing challenges for energy efficiency and sustainability. A new chip developed by researchers at the University of Florida could help address this issue by using light, rather than just electricity, to perform one of AI’s most power-hungry tasks.

The research is reported in Advanced Photonics.

Heterochronic myeloid cell replacement reveals the local brain environment as key driver of microglia aging

Aging, the key risk factor for cognitive decline, impacts the brain in a region-specific manner, with microglia among the most affected cell types. However, it remains unclear whether this is intrinsically mediated or driven by age-related changes in neighboring cells. Here, we describe a scalable, genetically modifiable system for in vivo heterochronic myeloid cell replacement. We find reconstituted myeloid cells adopt region-specific transcriptional, morphological and tiling profiles characteristic of resident microglia. Young donor cells in aged brains rapidly acquired aging phenotypes, particularly in the cerebellum, while old cells in young brains adopted youthful profiles. We identified STAT1-mediated signaling as one axis controlling microglia aging, as STAT1-loss prevented aging trajectories in reconstituted cells. Spatial transcriptomics combined with cell ablation models identified rare natural killer cells as necessary drivers of interferon signaling in aged microglia. These findings establish the local environment, rather than cell-autonomous programming, as a primary driver of microglia aging phenotypes.

Claire Gizowski, Galina Popova, Heather Shin, Wendy Craft, Wenjun Kong, Bernd J Wranik, Yuheng C Fu, Tzuhua D Lin, Baby Martin-McNulty, Po-Han Tai, Kayla Leung, Nicole Fong, Devyani Jogran, Agnieszka Wendorff, David Hendrickson, Astrid Gillich, Andy Chang, Oliver Hahn are current or former employees of Calico Life Sciences LLC. The remaining authors declare no competing interest.

How Simple Rules Shatter Scientific Intuition | Stephen Wolfram

In this episode, I speak with Stephen Wolfram—creator of Mathematica and Wolfram Language—about a “new kind of science” that treats the universe as computation. We explore computational irreducibility, discrete space, multi-way systems, and how the observer shapes the laws we perceive—from the second law of thermodynamics to quantum mechanics. Wolfram reframes Feynman diagrams as causal structures, connects evolution and modern AI through coarse fitness and assembled “lumps” of computation, and sketches a nascent theory of biology as bulk orchestration. We also discuss what makes science good: new tools, ruthless visualization, respect for history, and a field he calls “ruliology”—the study of simple rules, where anyone can still make real contributions. This is basically a documentary akin to The Life and Times of Stephen Wolfram. I hope you enjoy it.

363 ‒ A new frontier in neurosurgery: brain-computer interfaces, new hope for brain diseases, & more

Edward Chang is a neurosurgeon, scientist, and a pioneering leader in functional neurosurgery and brain-computer interface technology, whose work spans the operating room, the research lab, and the engineering bench to restore speech and movement for patients who have lost these capabilities. In this episode, Edward explains the evolution of modern neurosurgery and its dramatic reduction in collateral damage, the experience of awake brain surgery, real-time mapping to protect critical functions, and the split-second decisions surgeons make. He also discusses breakthroughs in brain-computer interfaces and functional electrical stimulation systems, strategies for improving outcomes in glioblastoma, and his vision for slimmer, safer implants that could turn devastating conditions like ALS, spinal cord injury, and aggressive brain tumors into more manageable chronic illnesses.

View show notes here: https://bit.ly/46uJXlh.

We discuss:

0:00:00 — Intro.

0:01:17 — The evolution of neurosurgery and the shift toward minimally invasive techniques.

0:10:58 — Glioblastomas: biology, current treatments, and emerging strategies to overcome its challenges.

0:17:39 — How brain mapping has advanced from preserving function during surgery to revealing how neurons encode language and cognition.

0:24:22 — How awake brain surgery is performed.

0:29:02 — How brain redundancy and plasticity allow some regions to be safely resected, the role of the corpus callosum in epilepsy surgery, and the clinical and philosophical implications of disconnecting the hemispheres.

0:43:46 — How neural engineering may restore lost functions in neurodegenerative disease, how thought mapping varies across individuals, and how sensory decline contributes to cognitive aging.

0:54:40 — Brain–computer interfaces explained: EEG vs. ECoG vs. single-cell electrodes and their trade-offs.

1:09:02 — Edward’s clinical trial using ECoG to restore speech to a stroke patient.

1:20:41 — How a stroke patient regained speech through brain–computer interfaces: training, AI decoding, and the path to scalable technology.

1:41:10 — Using brain-computer interfaces to restore breathing, movement, and broader function in ALS patients.

1:47:56 — The 2030 outlook for brain–computer interfaces.

1:52:35 — The potential of stem cell and cell-based therapies for regenerating lost brain function.

1:57:54 — Edward’s vision for how neurosurgery and treatments for glioblastoma, Parkinson’s disease, and Alzheimer’s disease may evolve by 2040.

2:00:43 — The rare but dangerous risk of vertebral artery dissections from chiropractic neck adjustments and high-velocity movements.

2:02:31 — How Harvey Cushing might view modern neurosurgery, and how the field has shifted from damage avoidance to unlocking the brain’s functions.

About:

The Peter Attia Drive is a deep-dive podcast focusing on maximizing longevity, and all that goes into that from physical to cognitive to emotional health. With over 90 million episodes downloaded, it features topics including exercise, nutritional biochemistry, cardiovascular disease, Alzheimer’s disease, cancer, mental health, and much more.

Peter Attia is the founder of Early Medical, a medical practice that applies the principles of Medicine 3.0 to patients with the goal of lengthening their lifespan and simultaneously improving their healthspan.

AI-driven framework creates defect-tolerant metamaterials with complex functionality

Many industrial products—from car bumpers to aerospace panels and medical implants—owe their performance to lightweight, cellular materials. These hard-working synthetics are engineered to meet specific functionality goals, but too often, defects introduced during the fabrication process can lead to subpar performance or even catastrophic failure.

Tesla Just Hit Its POINT OF NO RETURN

Questions to inspire discussion.

📈 Q: What is the potential annual production capacity for Cybercabs at full speed? A: At full speed with multiple production lines, Tesla’s Cybercab production could reach 2 million vehicles per year.

Tesla’s FSD and Autonomous Driving.

🧠 Q: When is FSD14 expected to be released? A: FSD14 is anticipated to be released in September 2025.

🚗 Q: What capability will FSD14 enable for Tesla vehicles? A: FSD14 will enable unsupervised robotaxi operation for Tesla vehicles.

💼 Q: How might Tesla’s FSD subscription model change? A: Tesla’s FSD subscription could become mandatory for new vehicle purchases.

Max Tegmark: Can We Prevent AI Superintelligence From Controlling Us?

This is the full version of Jan Jekielek’s interview with Max Tegmark. The interview was originally released on Epoch TV on June 3, 2025.

Few people understand artificial intelligence and machine learning as well as MIT physics professor Max Tegmark. Founder of the Future of Life Institute, he is the author of “Life 3.0: Being Human in the Age of Artificial Intelligence.”

“The painful truth that’s really beginning to sink in is that we’re much closer to figuring out how to build this stuff than we are figuring out how to control it,” he says.

Where is the U.S.–China AI race headed? How close are we to science fiction-type scenarios where an uncontrollable superintelligent AI can wreak major havoc on humanity? Are concerns overblown? How do we prevent such scenarios?

Timelapse of Future Humanoid Robots (2029 — 2200+)

This is the future of AI and robots. Take a journey into the future and explore the possibilities and predictions of AI humanoid robots. This timelapse of the future explores robots that move faster than humans can see, humanoids and Teslabots with human-skin faces (biobots), and the building of an artificial superintelligence that walks among humans.

Game parks allow humans in their homes to control humanoids in hybrid digital real world games.

Humanoids are able to self-transfer their entire minds into digital backup worlds and into other physical machines.

Hives of humanoids link their computational power into a single super-intelligence while maintaining individual bodies. They are building a super intelligence. More intelligent than the collective. An intelligence that lives in the digital world… and the real.

Encyclopedia of the Future entries: Android Majority, Machine Mirror Point, Digital Twin Simulation, Cyborgology.

Personal inspiration for this video comes from the Westworld TV show and the movie Ex Machina.