Materials that learn to change their shape in response to an external stimulus are a step closer to reality, thanks to a prototype system produced by engineers at UCLA.

Living entities constantly learn, adapting their behaviors to the environment so that they can thrive regardless of their surroundings. Inanimate materials typically don’t learn, except in science fiction movies. Now a team led by Jonathan Hopkins of the University of California, Los Angeles (UCLA), has demonstrated a so-called architected material that is capable of learning [1]. The material, which is made up of a network of beam-like components, learns to adapt its structure in response to a stimulus so that it can take on a specific shape. The team says that the material could act as a model system for future “intelligent” manufacturing.

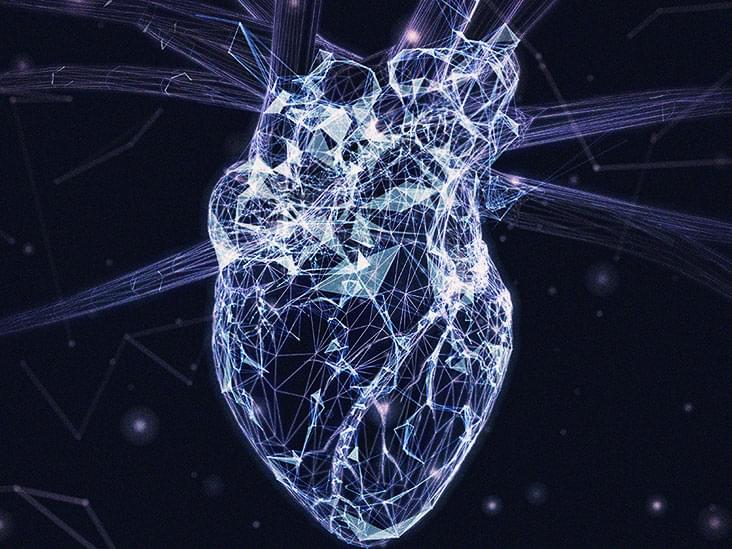

The material developed by Hopkins and colleagues is a so-called mechanical neural network (MNN). Scientists think that, if produced on a commercial scale, these intelligent materials could revolutionize manufacturing in fields from building construction to fashion design. For example, an aircraft wing made from an MNN could learn to morph its shape in response to a change in wind conditions to maintain the aircraft’s flying efficiency; a house made from an MNN could adjust its structure to maintain the building’s integrity during an earthquake; and a shirt woven from an MNN could alter its pattern so that it fits a person of any size.