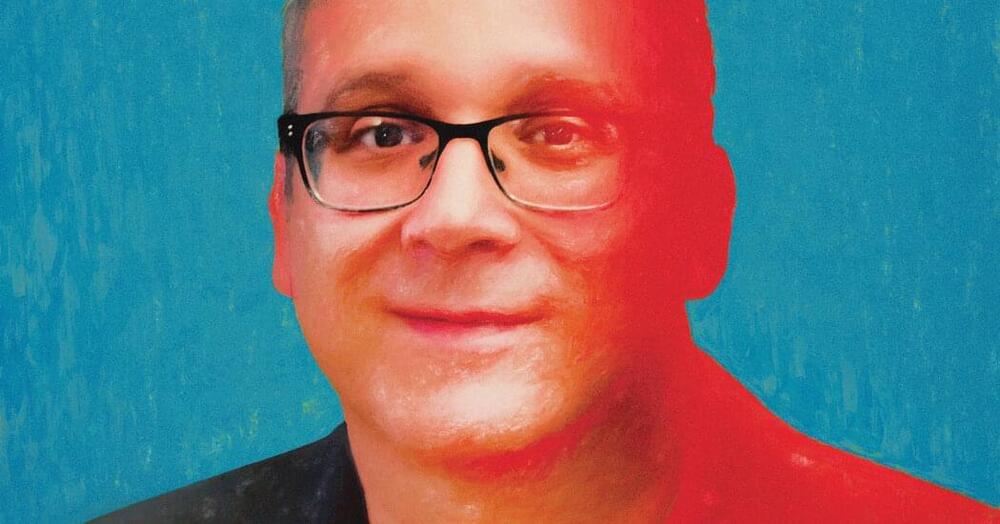

Microsoft’s corporate VP and chief economist tells the World Economic Forum (WEF) that AI will be used by bad actors, but “we shouldn’t regulate AI until we see some meaningful harm.”

Speaking at the WEF Growth Summit 2023 during a panel on “Growth Hotspots: Harnessing the Generative AI Revolution,” Microsoft’s Michael Schwarz argued that it would be best not to regulate AI until something bad happens, so as not to suppress its potentially greater benefits.

“I am quite confident that yes, AI will be used by bad actors; and yes, it will cause real damage; and yes, we have to be very careful and very vigilant,” Schwarz told the WEF panel.