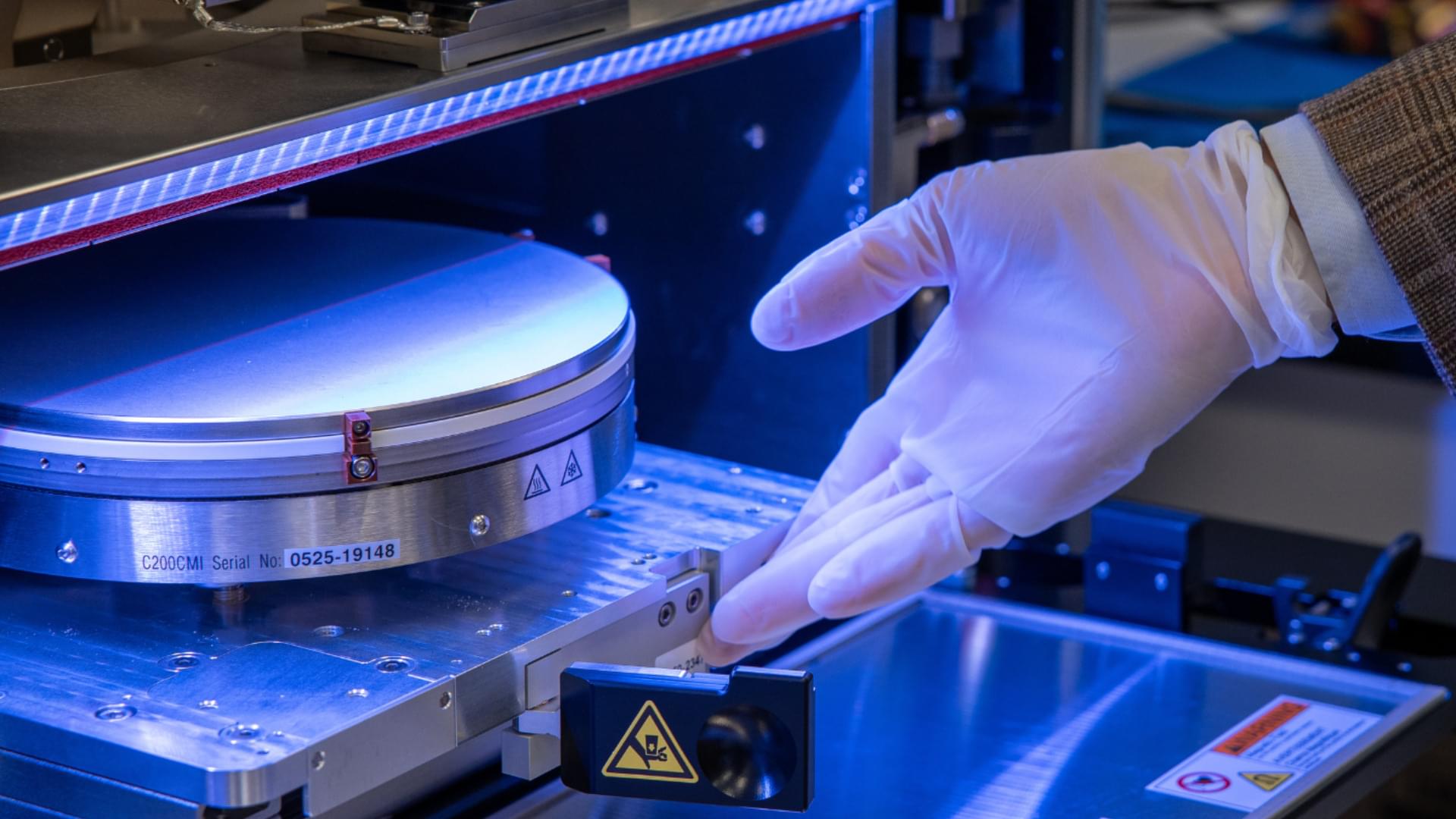

Stanford engineers debuted a new framework introducing computational tools and self-reflective AI assistants, potentially advancing fields like optical computing and astronomy.

Hyper-realistic holograms, next-generation sensors for autonomous robots, and slim augmented reality glasses are among the applications of metasurfaces, emerging photonic devices constructed from nanoscale building blocks.

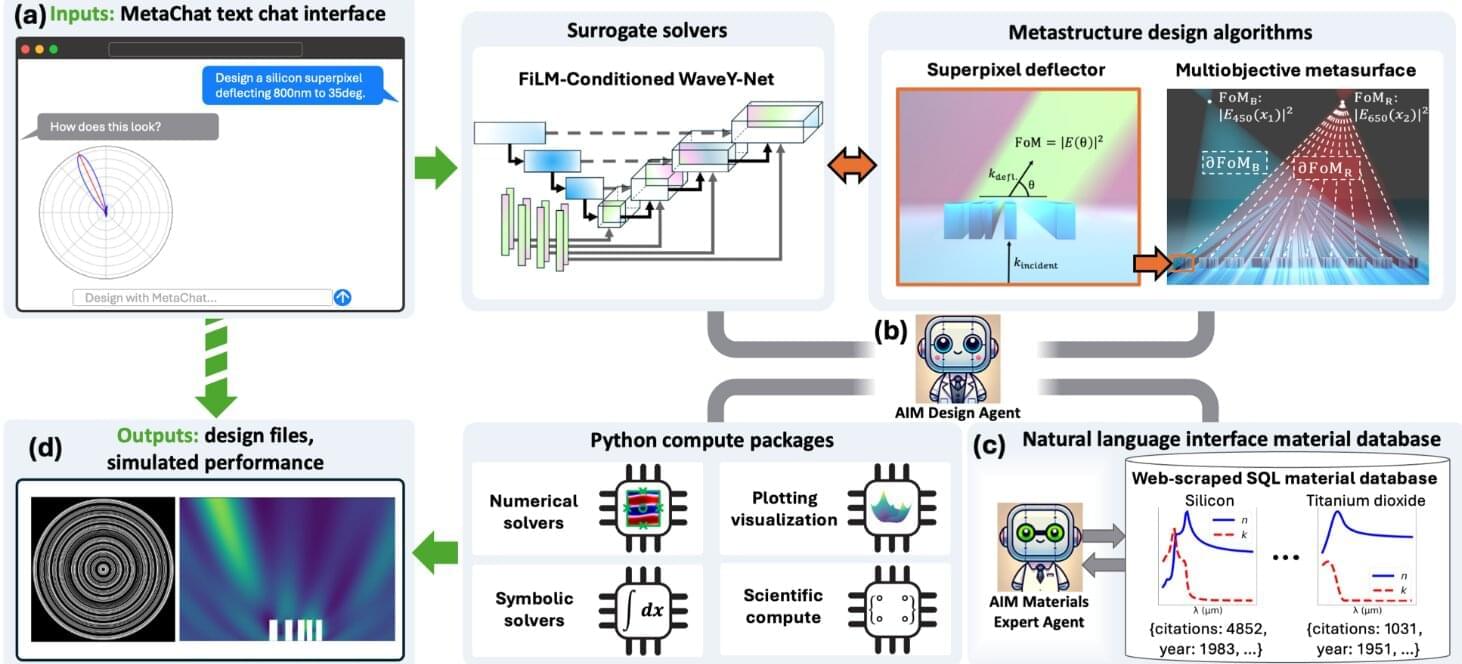

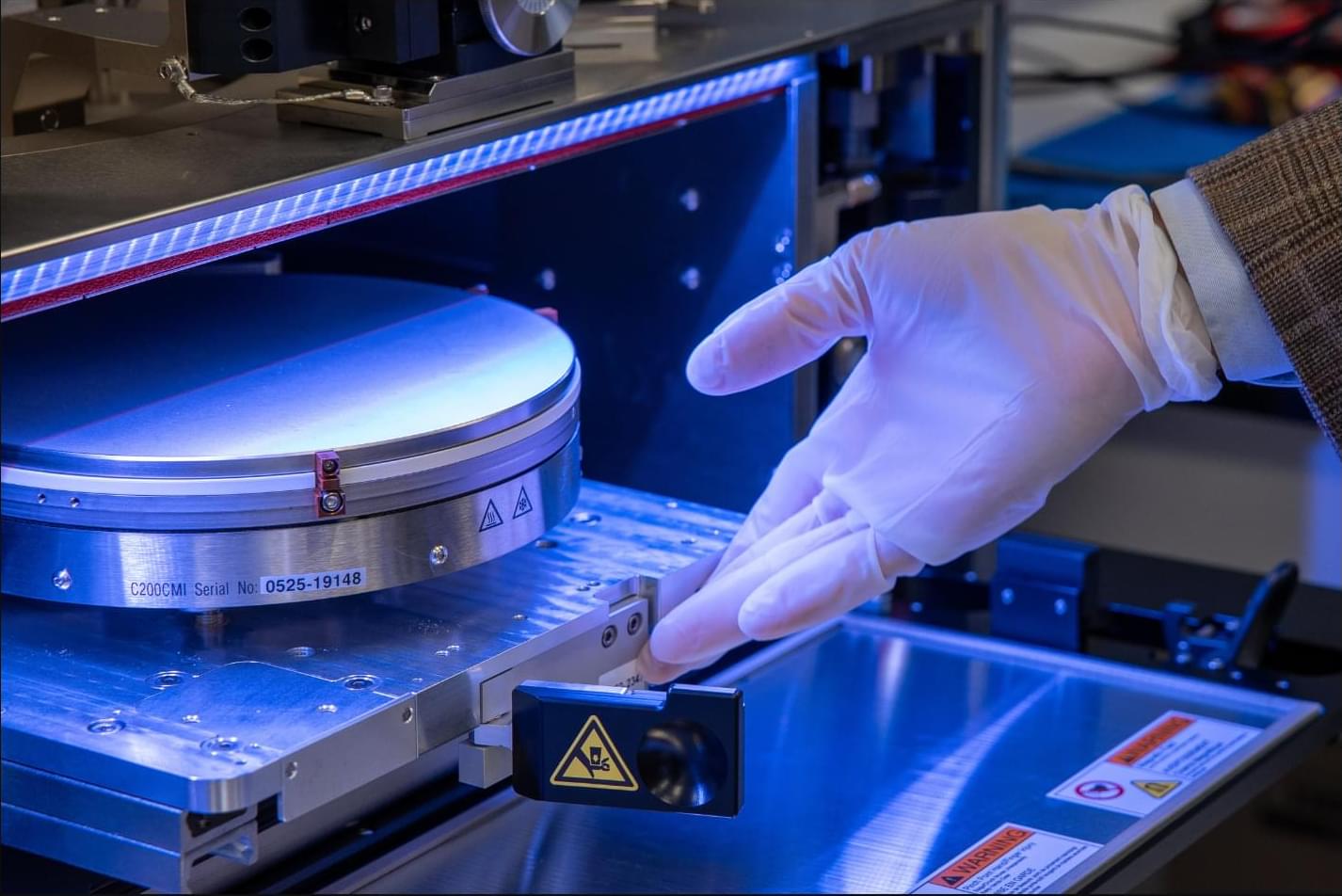

Now, Stanford engineers have developed an AI framework that accelerates metasurface design, with potentially wide-ranging technological applications. The framework, called MetaChat, introduces new computational tools and self-reflective AI assistants that make it possible to solve optics-related problems quickly. The findings were reported recently in the journal Science Advances.