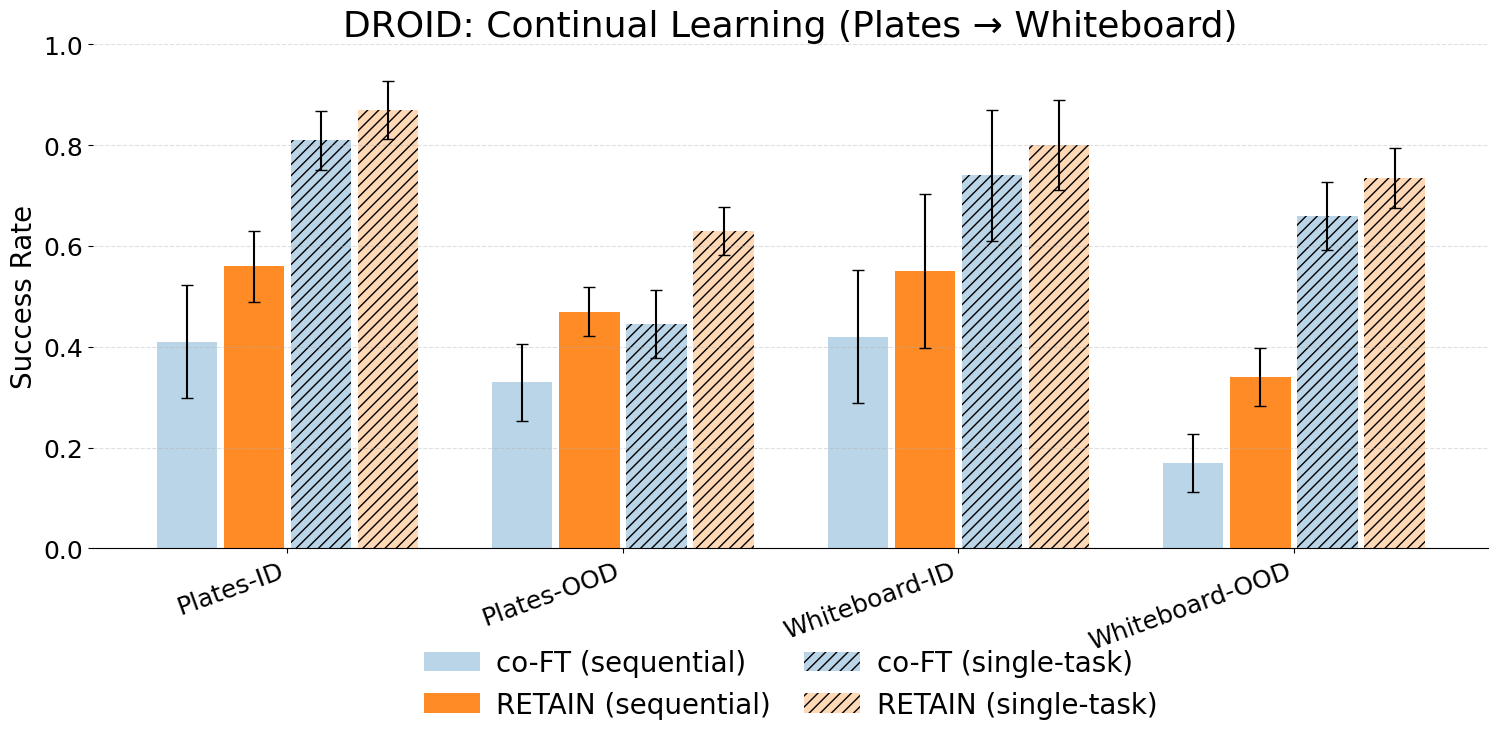

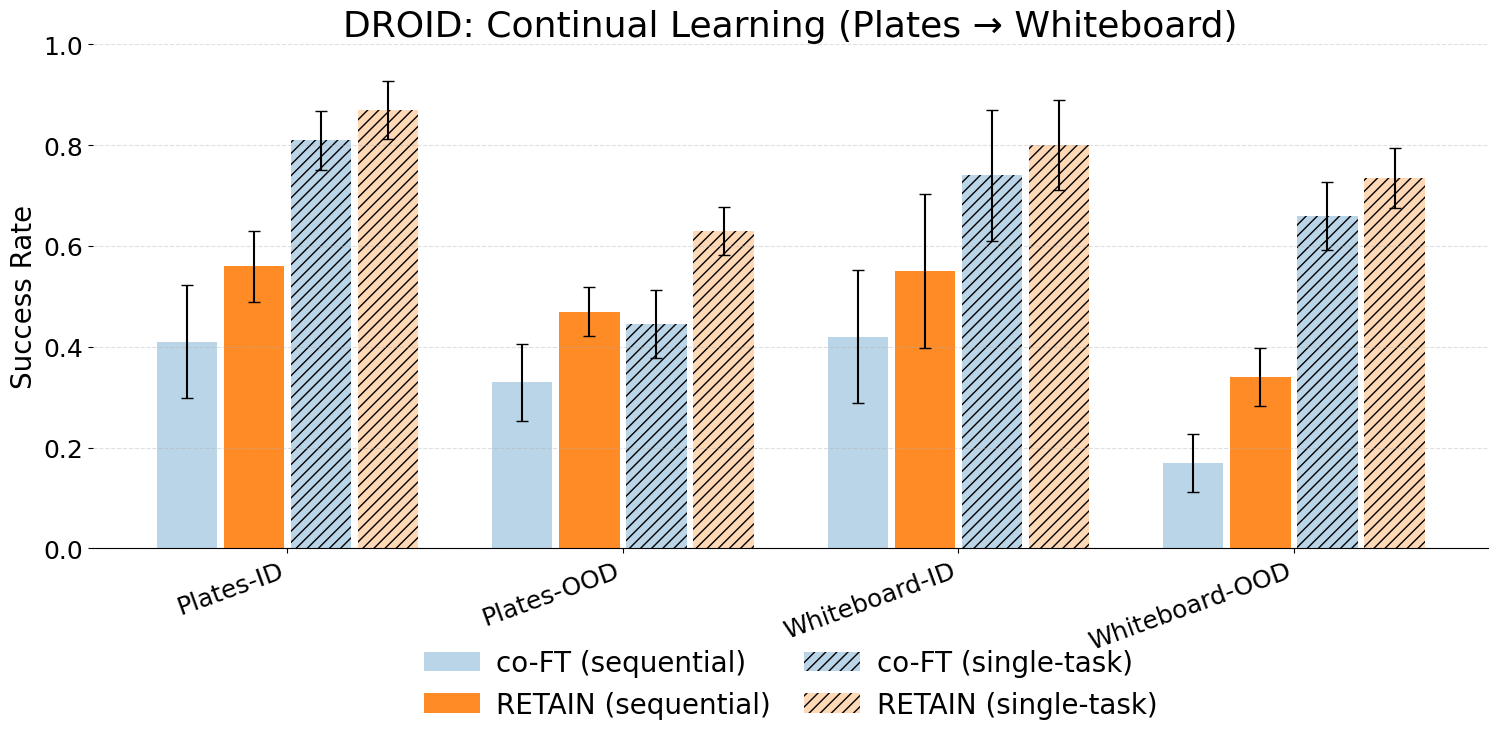

Via Parameter Merging.

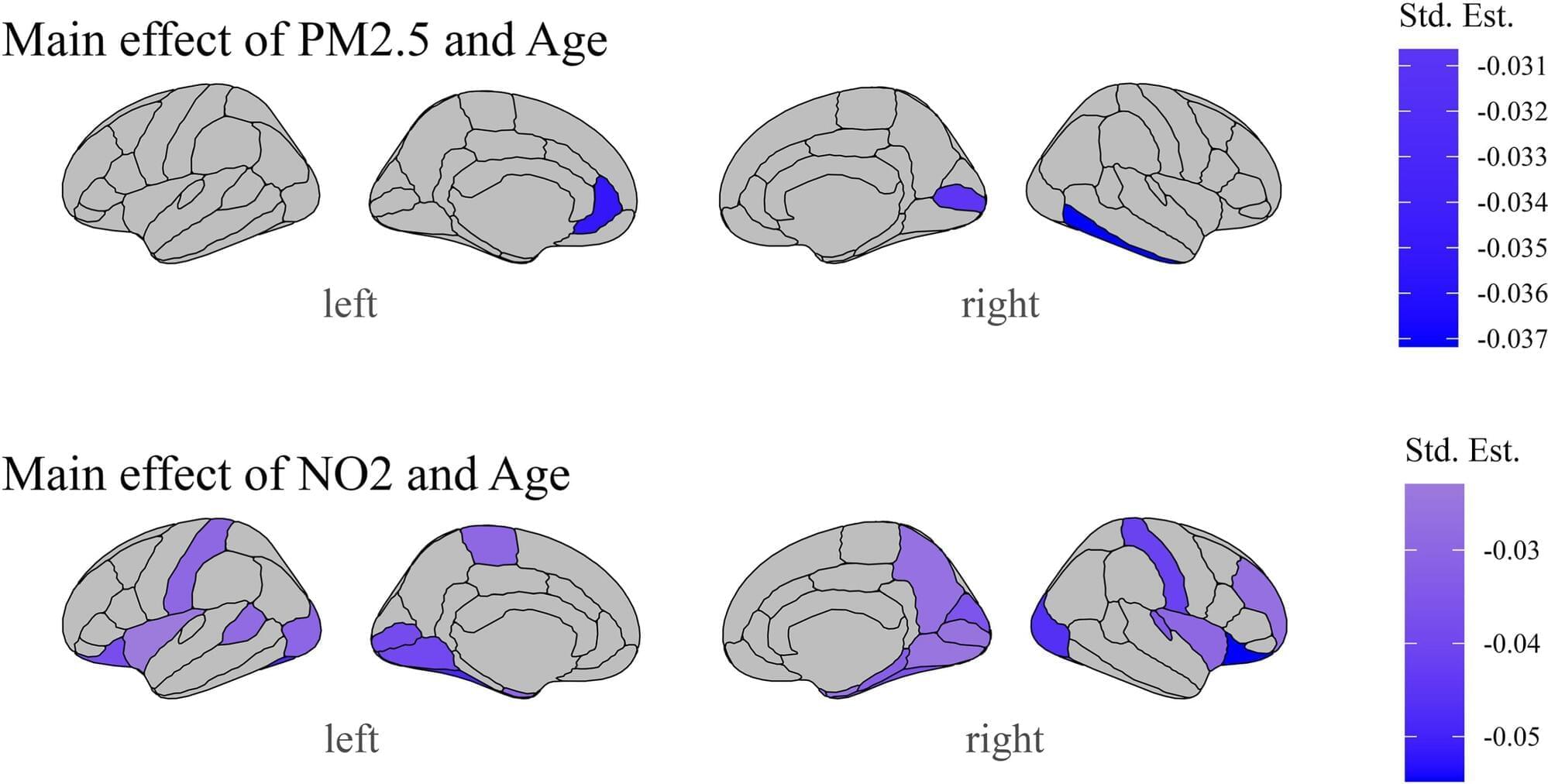

Physician-scientists at Oregon Health & Science University warn that exposure to air pollution may have serious implications for a child’s developing brain.

In a recent study published in the journal Environmental Research, researchers in OHSU’s Developmental Brain Imaging Lab found that air pollution is associated with structural changes in the adolescent brain, specifically in the frontal and temporal regions, the areas responsible for executive function, language, mood regulation and socioemotional processing.

Air pollution causes harmful contaminants, such as particulate matter, nitrogen dioxide and ozone, to circulate in the environment. It has been exacerbated over the past two centuries by industrialization, vehicle emissions, and, more recently, wildfires.

2025 Year in Review of LLM paradigm changes

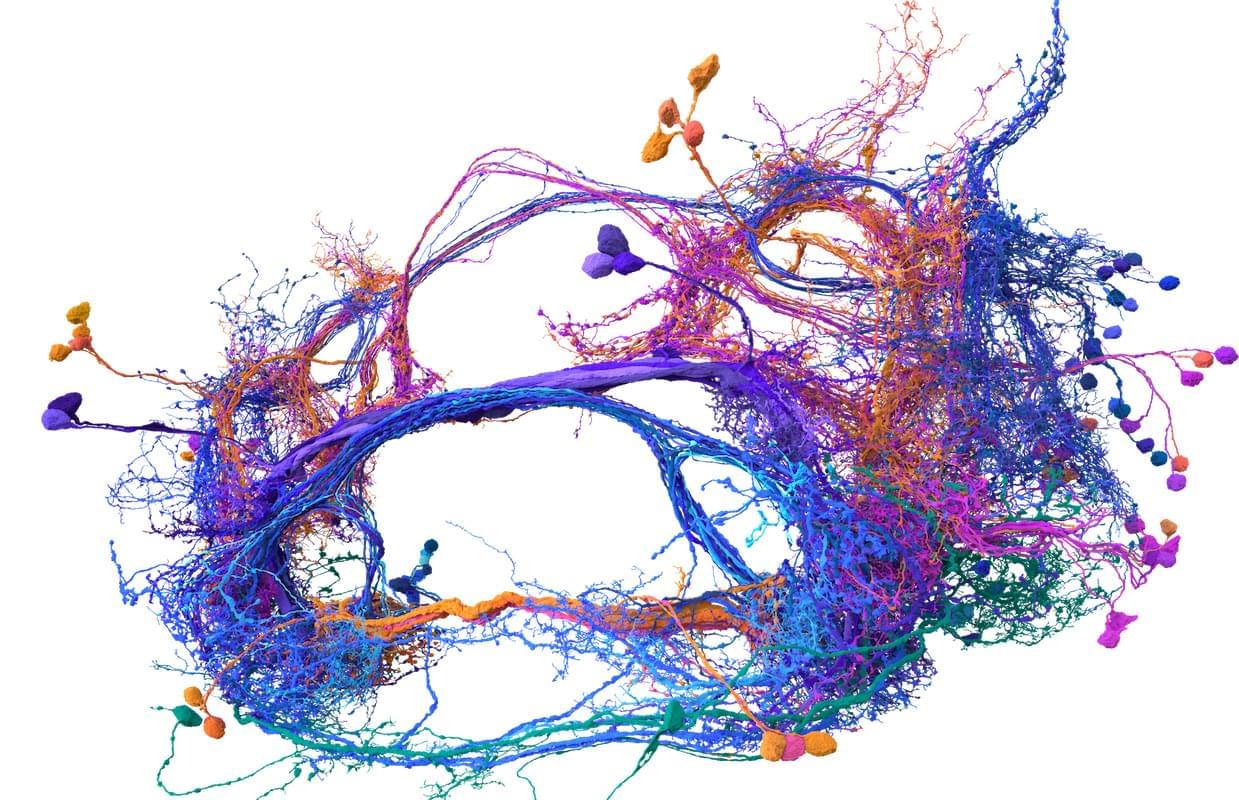

On a Sunday evening earlier this month, a Stanford professor held a salon at her home near the university’s campus. The main topic for the event was “synthesizing consciousness through neuroscience,” and the home filled with dozens of people, including artificial intelligence researchers, doctors, neuroscientists, philosophers and a former monk, eager to discuss the current collision between new AI and biological tools and how we might identify the arrival of a digital consciousness.

The opening speaker for the salon was Sebastian Seung, and this made a lot of sense. Seung, a neuroscience and computer science professor at Princeton University, has spent much of the last year enjoying the afterglow of his (and others’) breakthrough research describing the inner workings of the fly brain. Seung, you see, helped create the first complete wiring diagram of a fly brain and its 140,000 neurons and 55 million synapses. (Nature put out a special issue last October to document the achievement and its implications.) This diagram, known as a connectome, took more than a decade to finish and stands as the most detailed look at the most complex whole brain ever produced.

Meet Memazing.

Translated and dubbed using AI. #southkorea #newstoday #samsung

The humble pocket calculator may not be able to keep up with the mathematical capabilities of new technology, but it will never hallucinate.

The device’s enduring reliability equates to millions of sales each year for Japan’s Casio, which is even eyeing expansion in certain regions.

Despite lightning-speed advances in artificial intelligence, chatbots still sometimes stumble on basic addition.

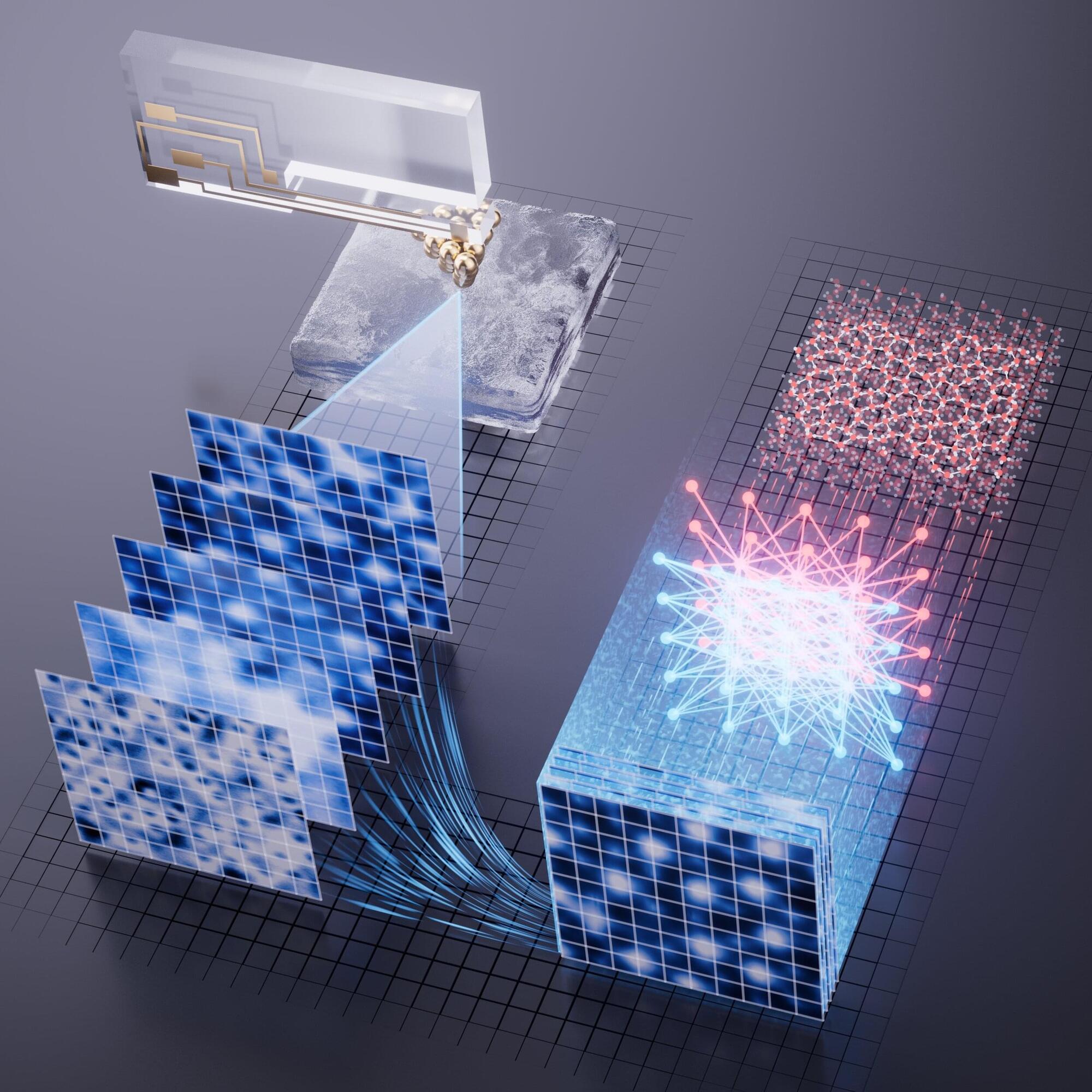

Through a novel combination of machine learning and atomic force microscopy, researchers in China have unveiled the molecular surface structure of “premelted” ice, resolving a long-standing mystery surrounding the liquid-like layer that forms on icy surfaces.

Detailed in a study in Physical Review X, the approach could also be applied more widely to reveal surface features that are too challenging for existing microscopy techniques to resolve.

Money is supposed to be the reward for effort. Elon Musk thinks it eventually becomes unnecessary paperwork.

While talking with Indian entrepreneur and investor Nikhil Kamath on the “People by WTF” podcast last month, the Tesla and SpaceX CEO and richest man in the world returned to a theme he’s raised before. It’s one he treats less like a theory and more like an inevitability. As artificial intelligence and robotics accelerate, Musk believes society moves past jobs, past income debates, and straight into something stranger.
