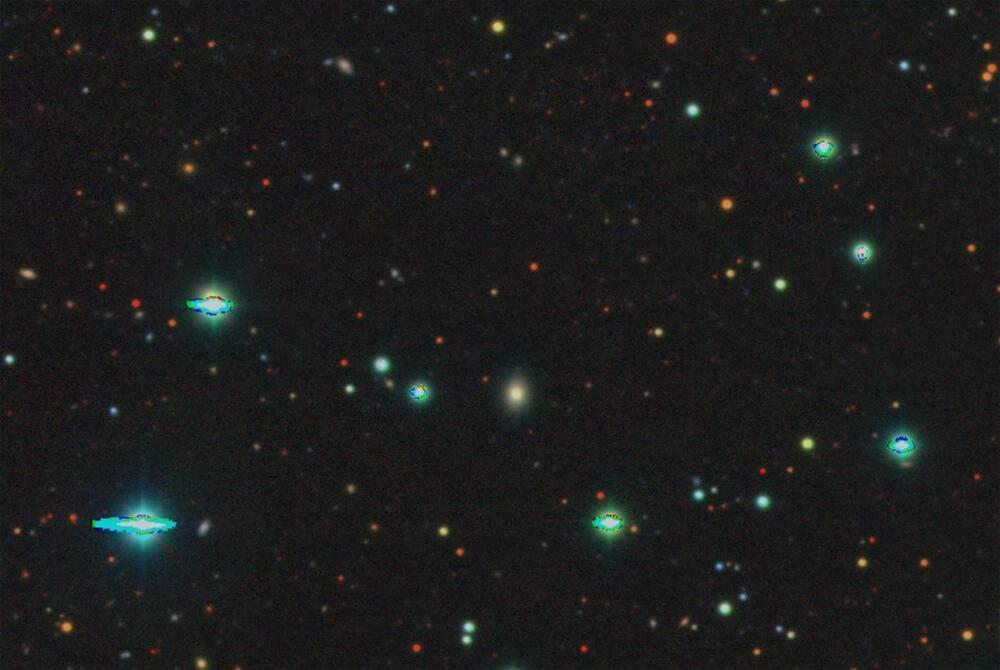

A fully automated process, including a brand-new artificial intelligence (AI) tool, has successfully detected, identified and classified its first supernova.

Developed by an international collaboration led by Northwestern University, the new system automates the entire search for new supernovae across the night sky, effectively removing humans from the process. This not only speeds up the analysis and classification of new supernova candidates but also avoids human error.

This week, the team alerted the astronomical community to the launch and success of the new tool, called the Bright Transient Survey Bot (BTSbot). Over the past six years, humans have spent an estimated total of 2,200 hours visually inspecting and classifying supernova candidates. With the new tool now officially online, researchers can redirect this precious time toward other responsibilities to accelerate the pace of discovery.