But because superintelligent AI doesn’t exist yet, they tried it out on GPT-4.

Artificial intelligence researchers claim to have made the world’s first scientific discovery using a large language model, a breakthrough that suggests the technology behind ChatGPT and similar programs can generate information that goes beyond human knowledge.

The finding emerged from Google DeepMind, where scientists are investigating whether large language models, which underpin modern chatbots such as OpenAI’s ChatGPT and Google’s Bard, can do more than repackage information learned in training and come up with new insights.

“When we started the project there was no indication that it would produce something that’s genuinely new,” said Pushmeet Kohli, the head of AI for science at DeepMind. “As far as we know, this is the first time that a genuine, new scientific discovery has been made by a large language model.”

Twenty-four years ago, Ray Kurzweil predicted computers would reach human-level intelligence by 2029. This was met with great concern and criticism. In the past six months technology experts have come around to agree with him. According to Kurzweil, over the next two decades, AI is going to change what it means to be human. We are going to invent new means of expression that will soar past human language, art, and science of today. All of the concepts that we rely on to give meaning to our lives, including death itself, will be transformed.

Speakers:

Ray Kurzweil, Inventor, Futurist & Best-selling author of ‘The Singularity is Near’

Reinhard Scholl, Deputy Director, Telecommunication Standardization Bureau, International Telecommunication Union (ITU); Co-founder and Managing Director, AI for Good

The AI for Good Global Summit is the leading action-oriented United Nations platform promoting AI to advance health, climate, gender, inclusive prosperity, sustainable infrastructure, and other global development priorities. AI for Good is organized by the International Telecommunication Union (ITU) – the UN specialized agency for information and communication technology – in partnership with 40 UN sister agencies and co-convened with the government of Switzerland.


What is AI for Good?

We have less than 10 years to achieve the UN SDGs, and AI holds great promise to advance many of the sustainable development goals and targets.

More than a Summit, more than a movement, AI for Good is presented as a year-round digital platform where AI innovators and problem owners learn, build and connect to help identify practical AI solutions to advance the United Nations Sustainable Development Goals.


Disclaimer: The views and opinions expressed are those of the panelists and do not reflect the official policy of the ITU.

The mini-brain functioned like both the central processing unit and memory storage of a supercomputer. It received input in the form of electrical zaps and outputted its calculations through neural activity, which was subsequently decoded by an AI tool.

When trained on soundbites from a pool of people—transformed into electrical zaps—Brainoware eventually learned to pick out the “sounds” of specific people. In another test, the system successfully tackled a complex math problem that’s challenging for AI.

The system’s ability to learn stemmed from changes to neural network connections in the mini-brain—which is similar to how our brains learn every day. Although just a first step, Brainoware paves the way for increasingly sophisticated hybrid biocomputers that could lower energy costs and speed up computation.

US Customs and Border Protection (CBP) recently awarded Pangiam, a leading trade and travel technology company, a prime contract for developing and implementing Anomaly Detection Algorithms (ADA).

Pangiam, in collaboration with West Virginia University, aims to bring cutting-edge artificial intelligence (AI), computer vision, and machine learning expertise to enhance CBP’s border and national security missions, the company announced in a press release.

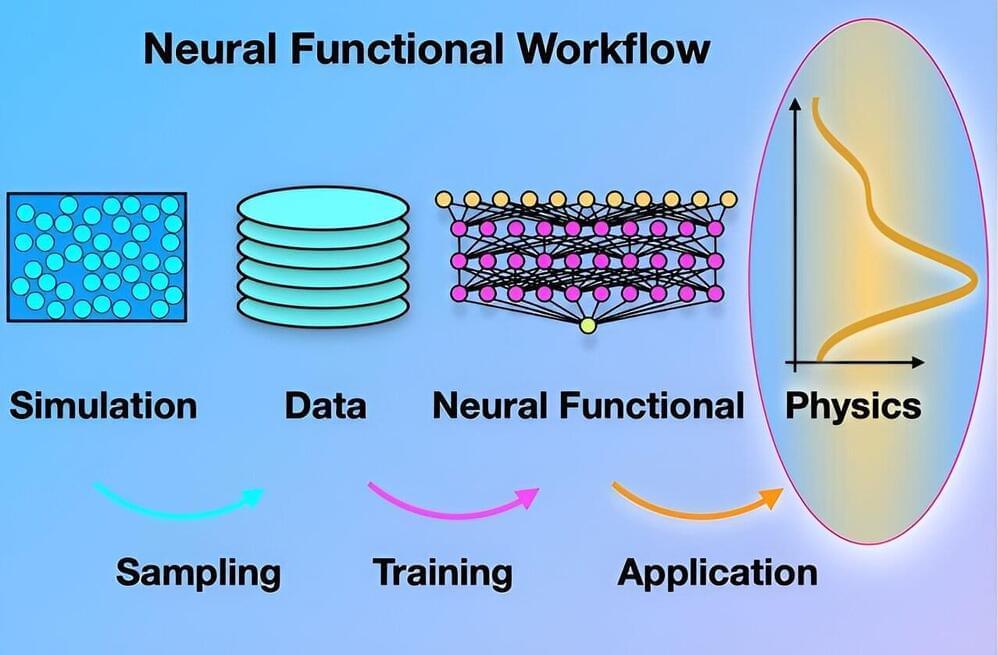

“In the study, we demonstrate how artificial intelligence can be used to carry out fundamental theoretical physics that addresses the behavior of fluids and other complex soft matter systems,” says Prof. Dr. Matthias Schmidt, chair of Theoretical Physics II at the University of Bayreuth.

Scientists from Bayreuth have developed a new method for studying liquid and soft matter using artificial intelligence. In a study now published in the Proceedings of the National Academy of Sciences, they open up a new chapter in density functional theory.

We live in a highly technologized world where basic research is the engine of innovation, in a dense and complex web of interrelationships and interdependencies. The published research provides new methods that can have a great influence on widespread simulation techniques, so that complex substances can be investigated on computers more quickly, more precisely and more deeply.

In the future, this could have an influence on product and process design. The fact that the structure of liquids can be excellently represented by the newly formulated neural mathematical relationships is a major breakthrough that opens up a range of possibilities for gaining deep physical insights.

Google thinks that there’s an opportunity to offload more healthcare tasks to generative AI models — or at least, an opportunity to recruit those models to aid healthcare workers in completing their tasks.

Google has unveiled MedLM, a new family of generative AI models designed to tackle healthcare-related tasks.

When it comes to spurring the development of cutting-edge technologies, the Chinese government is rather pragmatic in its policymaking process. In the field of autonomous driving, the country has made some big strides in defining the parameters and limitations for service providers, removing regulatory ambiguity and granting industry players the freedom to test the nascent technology.

The trial guidelines, unveiled by the Ministry of Transport recently, target AV services like robotaxis, self-driving trucks and robobuses. The release arrived about 16 months after the department began seeking public opinions on the regulatory framework, and policymakers have reached a consensus that self-driving vehicles are subject to rigorous surveillance measures to ensure utmost safety.

Prior to the introduction of the nationwide guidelines, policymaking for AVs in China had been playing out in a more decentralized fashion, with local governments formulating their own rules for service providers on their turf. Major tech clusters like Beijing, Shenzhen and Guangzhou, for example, have been frontrunners in allowing companies to test AVs with minimum human interference.