Sky News Investigations Reporter Jonathan Lea sits down to talk with a “free-thinking” and “opinionated” artificial intelligence, Ameca Desktop.

“Ameca is driven by the same artificial intelligence behind ChatGPT,” Mr Lea said.

Scientists have established how brain activity during imagined movement differs from activity during real movement. In both cases, a preceding signal appears in the cerebral cortex, but during imagined movement this signal is not clearly tied to a specific hemisphere.

The data could be used in medical practice to build neurotrainers and to monitor the restoration of neural networks in post-stroke patients. The study is published in the journal Cerebral Cortex.

Before we pick up a pen or put down a cup, the brain forms a complete picture of the action. These visual–motor transformations keep our movements accurate, and understanding them can help patients regain motor function after a stroke. But we do not always complete a movement we have started. In that case, visual information reaches the motor areas of the cortex, but the response is blocked at some point, and the mental effort never turns into actual muscle activation.

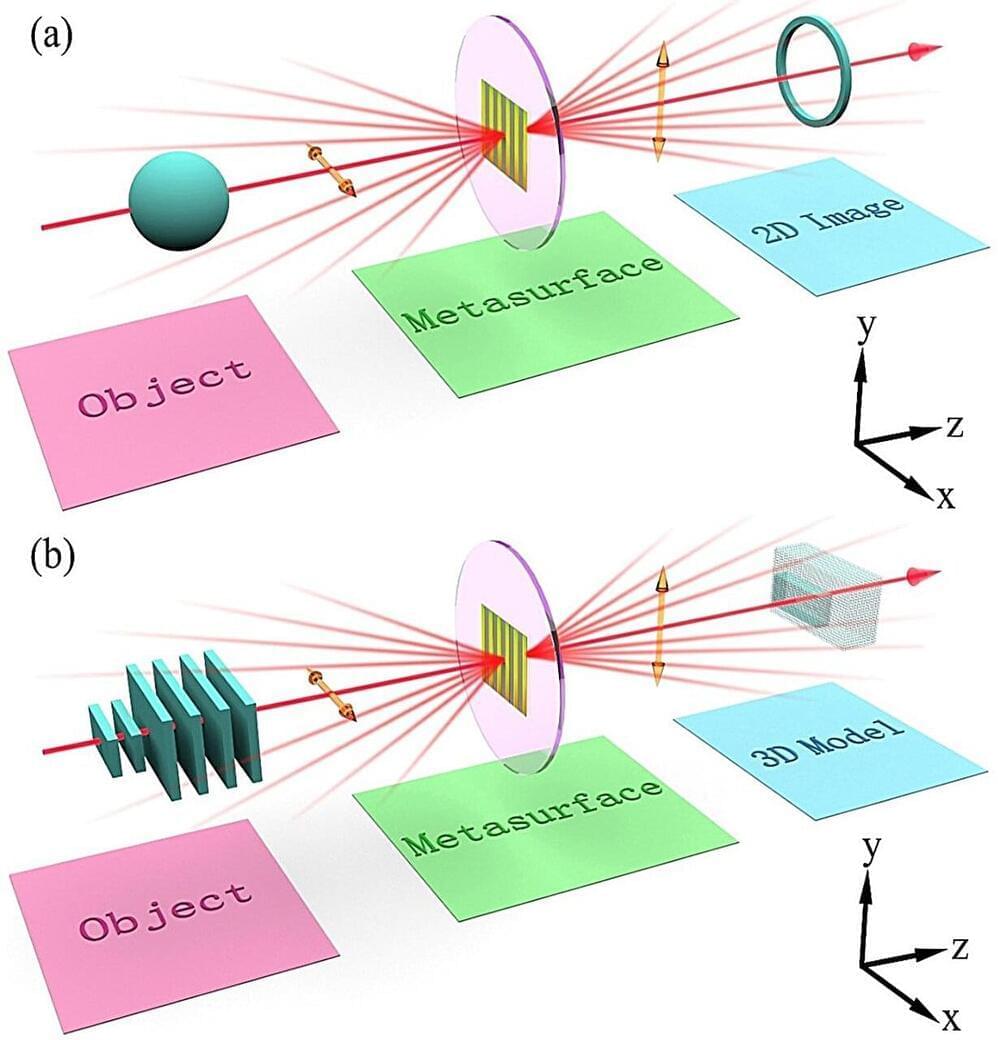

As object identification and three-dimensional (3D) reconstruction become essential in fields such as reverse engineering, artificial intelligence, medical diagnosis, and industrial production, there is growing interest in more efficient, faster, and more highly integrated methods that simplify processing.

In object identification and 3D reconstruction today, sample contour information is extracted mainly by computer algorithms. Traditional computer processors face several constraints, including high power consumption, slow operation, and reliance on complex algorithms. As a result, alternative optical methods for performing these tasks have recently attracted growing attention.
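To make "extracting contour information" concrete, the sketch below shows one classic digital approach, Sobel edge detection, which estimates the intensity gradient at every pixel and marks pixels where it is large. The source does not say which algorithms it has in mind; Sobel is used here only as a familiar example, and the function name `sobel_edges` and its `threshold` parameter are hypothetical. The nested per-pixel loop is the kind of sequential arithmetic that optical approaches try to carry out in the optical domain instead.

```python
import math
from typing import List

# 3x3 Sobel kernels for horizontal (x) and vertical (y) intensity gradients.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_edges(img: List[List[float]], threshold: float) -> List[List[int]]:
    """Return a binary contour map: 1 where gradient magnitude >= threshold.

    Border pixels stay 0 because the 3x3 kernel does not fit there.
    """
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Correlate the 3x3 neighbourhood around (y, x) with each kernel.
            gx = sum(SOBEL_X[j][i] * img[y + j - 1][x + i - 1]
                     for j in range(3) for i in range(3))
            gy = sum(SOBEL_Y[j][i] * img[y + j - 1][x + i - 1]
                     for j in range(3) for i in range(3))
            if math.hypot(gx, gy) >= threshold:
                out[y][x] = 1
    return out


# Toy 5x6 image: a dark left half next to a bright right half.
step = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
for row in sobel_edges(step, threshold=1.0):
    print(row)
```

In the output, rows 1 to 3 mark columns 2 and 3, the two pixels on either side of the brightness step; the border rows and columns stay 0.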

Advances in optical computing theory and image processing have given object identification and 3D reconstruction a more complete theoretical basis. In recent years, optical methods have attracted attention as an alternative to traditional computational approaches because of their very high operating speed, high integration, and low latency.

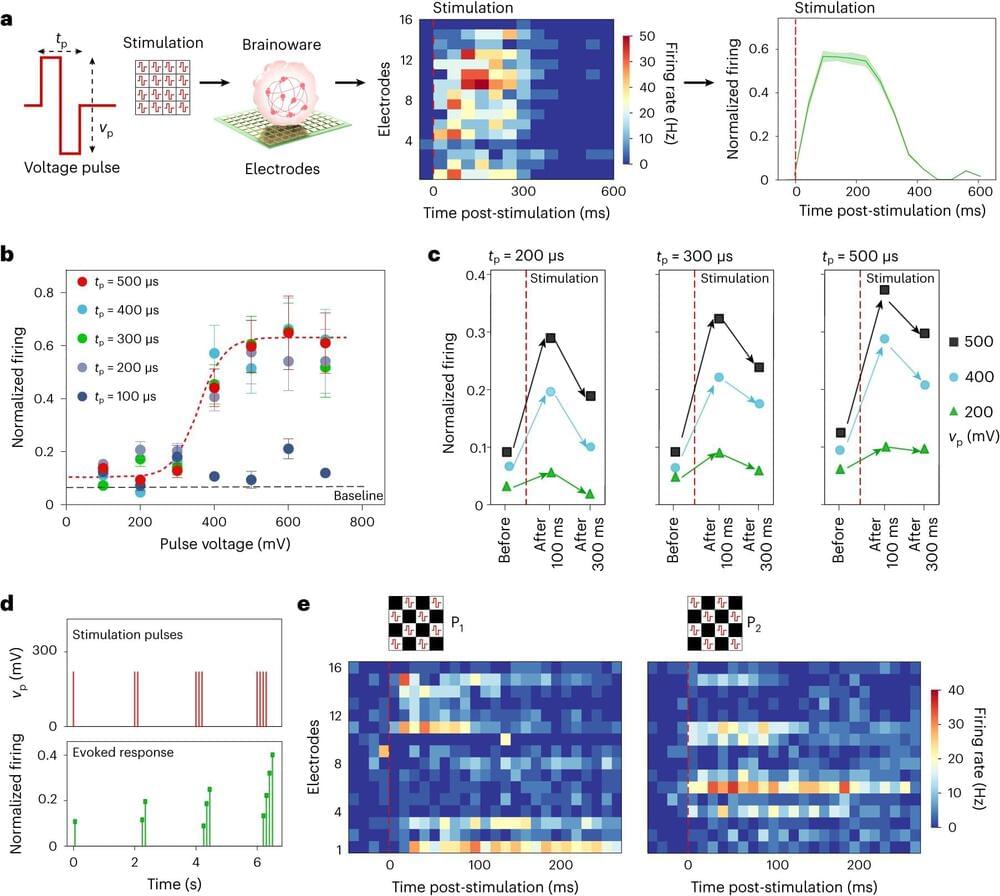

Feng Guo, an associate professor of intelligent systems engineering at the Indiana University Luddy School of Informatics, Computing and Engineering, is addressing the technical limitations of artificial intelligence computing hardware by developing a new hybrid computing system—which has been dubbed “Brainoware”—that combines electronic hardware with human brain organoids.

Advanced AI techniques such as machine learning and deep learning run on specialized silicon computer chips and consume enormous amounts of energy. To improve the performance and efficiency of these technologies, engineers have designed neuromorphic computing systems modeled on the structure and function of the human brain. However, most of these systems are built on digital electronic principles, which limits their ability to fully mimic brain function.

In response, Guo and a team of IU researchers, including graduate student Hongwei Cai, have developed a hybrid neuromorphic computing system that mounts a brain organoid onto a multielectrode array to receive and send information. Brain organoids are brain-like 3D cell cultures derived from stem cells; they contain different brain cell types, including neurons and glia, and brain-like structures such as ventricular zones.

Regulatory efforts to protect data are making strides globally. Patient data is protected by law in the United States and elsewhere. In Europe the General Data Protection Regulation (GDPR) guards personal data and recently led to a US $1.3 billion fine for Meta. You can even think of Apple’s App Store policies against data sharing as a kind of data-protection regulation.

“These are good constraints. These are constraints society wants,” says Michael Gao, founder and CEO of Fabric Cryptography, one of the startups developing chips to accelerate fully homomorphic encryption (FHE). But privacy and confidentiality come at a cost: they can make it harder to track disease and conduct medical research, they can potentially help bad actors hide their banking activity, and they can prevent the use of data needed to improve AI.

“Fully homomorphic encryption is an automated solution to get around legal and regulatory issues while still protecting privacy,” says Kurt Rohloff, CEO of Duality Technologies, in Hoboken, N.J., one of the companies developing FHE accelerator chips. His company’s FHE software is already helping financial firms check for fraud and preserve patient privacy in health care research.
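The idea of computing on data that stays encrypted can be illustrated with a toy sketch of the Paillier cryptosystem. Note the assumptions: Paillier is only additively homomorphic (multiplying two ciphertexts yields an encryption of the sum of the plaintexts), so it is not an FHE scheme, which also supports multiplying encrypted values and is far more computationally demanding. The tiny primes make this completely insecure, and the function names are hypothetical. It only shows the principle that a party can combine values it cannot read.

```python
import math
import random


def keygen(p: int, q: int):
    """Toy Paillier key generation from two tiny (insecure) primes."""
    n = p * q
    lam = math.lcm(p - 1, q - 1)  # Carmichael function of n (Python 3.9+)
    mu = pow(lam, -1, n)          # inverse of lam mod n; valid since g = n + 1
    return n, (lam, mu)


def encrypt(n: int, m: int, rng: random.Random) -> int:
    """Encrypt 0 <= m < n as c = (n + 1)^m * r^n mod n^2 with random r."""
    n2 = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def decrypt(n: int, priv, c: int) -> int:
    """Recover m = L(c^lam mod n^2) * mu mod n, where L(x) = (x - 1) // n."""
    lam, mu = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n


def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Homomorphic addition: the product decrypts to (m1 + m2) mod n."""
    return (c1 * c2) % (n * n)


rng = random.Random(0)
n, priv = keygen(17, 19)          # n = 323
c1 = encrypt(n, 20, rng)
c2 = encrypt(n, 22, rng)
total = add_encrypted(n, c1, c2)  # computed without decrypting c1 or c2
print(decrypt(n, priv, total))    # 42
```

Only the holder of `priv` can read the result; whoever runs `add_encrypted` sees ciphertexts alone.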

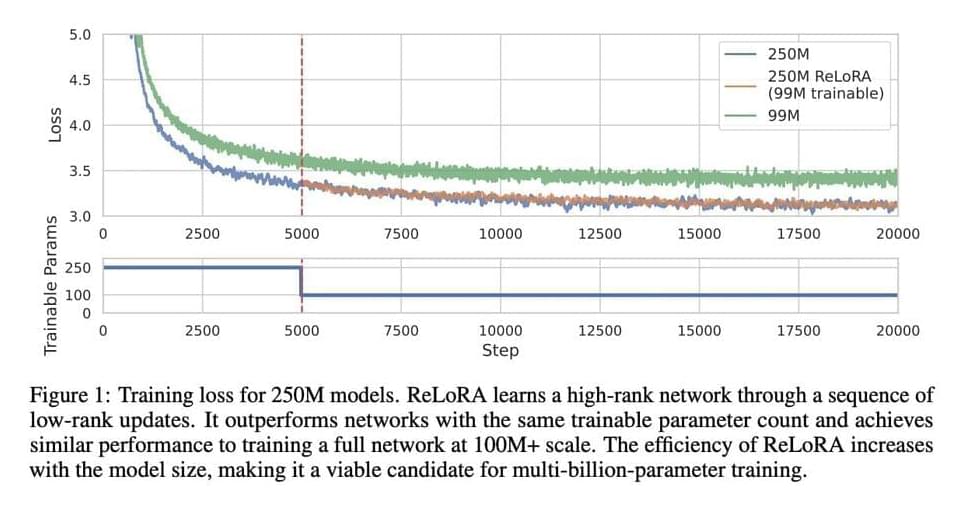

In machine learning, ever-larger networks with more and more parameters are being trained, and training them has become prohibitively expensive. Despite the success of this approach, it is still poorly understood why overparameterized models are necessary, while the cost of training them continues to rise exponentially.

A team of researchers from the University of Massachusetts Lowell, EleutherAI, and Amazon developed a method called ReLoRA, which uses low-rank updates to train high-rank networks. By accumulating a sequence of low-rank updates, ReLoRA achieves an overall high-rank update and delivers performance comparable to conventional neural network training.
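The rank argument behind this can be checked in a few lines. The sketch below is a simplified illustration, not the authors' implementation: instead of training LoRA factors by gradient descent, it uses hand-picked rank-1 factors (the helpers `unit_factors` and `relora_merge` are hypothetical), merges each product B·A into the weight matrix, and then "restarts" with a fresh pair. Each individual update has rank 1, yet their sum has full rank 4.

```python
from fractions import Fraction
from typing import List, Tuple

Matrix = List[List[int]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def madd(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def rank(m: Matrix) -> int:
    """Exact matrix rank via Gauss-Jordan elimination over the rationals."""
    rows = [[Fraction(x) for x in row] for row in m]
    r = 0
    for c in range(len(rows[0]) if rows else 0):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                f = rows[i][c] / rows[r][c]
                rows[i] = [x - f * y for x, y in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


def unit_factors(d: int, i: int) -> Tuple[Matrix, Matrix]:
    """Hypothetical rank-1 LoRA factors: B is d x 1, A is 1 x d."""
    b = [[1 if k == i else 0] for k in range(d)]
    a = [[1 if k == (i + 1) % d else 0 for k in range(d)]]
    return b, a


def relora_merge(w: Matrix, factor_pairs: List[Tuple[Matrix, Matrix]]) -> Matrix:
    """Merge each low-rank update B @ A into W, then move on to the next pair."""
    for b, a in factor_pairs:
        w = madd(w, matmul(b, a))  # merge, then "restart" with fresh factors
    return w


d = 4
w0 = [[0] * d for _ in range(d)]  # zero start, so W equals the total update
pairs = [unit_factors(d, i) for i in range(d)]
w = relora_merge(w0, pairs)
print([rank(matmul(b, a)) for b, a in pairs])  # [1, 1, 1, 1]
print(rank(w))                                  # 4
```

In the actual method the factors between merges are learned, and further details such as optimizer resets at each restart matter in practice; none of that is modeled here.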

Scaling laws have been identified that show a strong power-law dependence between network size and performance across modalities, which supports the case for overparameterized, resource-intensive neural networks. The Lottery Ticket Hypothesis offers an alternative perspective, suggesting that overparameterization can be minimized. Low-rank fine-tuning methods such as LoRA and Compacter have been developed to address the limitations of low-rank matrix factorization approaches.
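A power law of this kind is often written in the form below, where L is the loss, N is the number of parameters, and N_c and α_N are fitted constants. This particular form follows the 2020 language-model scaling study by Kaplan et al. and is shown only as an illustration; the work described here may use different fits. The second part works out what the exponent means: every doubling of N multiplies the loss by the same factor.

$$
L(N) \approx \left(\frac{N_c}{N}\right)^{\alpha_N}
\quad\Longrightarrow\quad
\frac{L(2N)}{L(N)} = \left(\frac{N}{2N}\right)^{\alpha_N} = 2^{-\alpha_N}
$$

On a log-log plot this is a straight line with slope -α_N, so a small exponent means each doubling of model size buys a modest but predictable reduction in loss.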