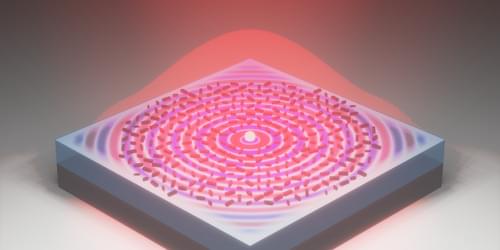

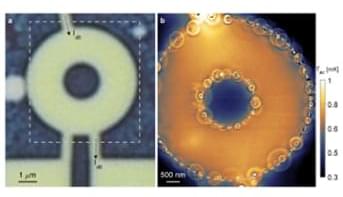

In a new study, physicists at the University of Colorado Boulder have used a cloud of atoms chilled down to incredibly cold temperatures to simultaneously measure acceleration in three dimensions—a feat that many scientists didn’t think was possible.

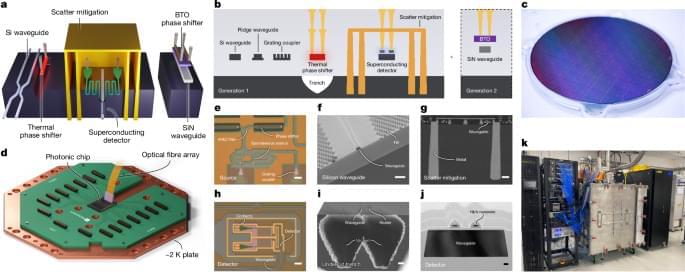

The device, a new type of atom “interferometer,” could one day help people navigate submarines, spacecraft, cars and other vehicles more precisely.

“Traditional atom interferometers can only measure acceleration in a single dimension, but we live within a three-dimensional world,” said Kendall Mehling, a co-author of the new study and a graduate student in the Department of Physics at CU Boulder. “To know where I’m going, and to know where I’ve been, I need to track my acceleration in all three dimensions.”

The researchers published their paper, titled “Vector atom accelerometry in an optical lattice,” this month in the journal Science Advances. The team included Mehling; Catie LeDesma, a postdoctoral researcher in physics; and Murray Holland, professor of physics and fellow of JILA, a joint research institute between CU Boulder and the National Institute of Standards and Technology (NIST).

A new quantum device could one day help spacecraft travel beyond Earth’s orbit or aid submarines as they navigate deep under the ocean with greater precision.