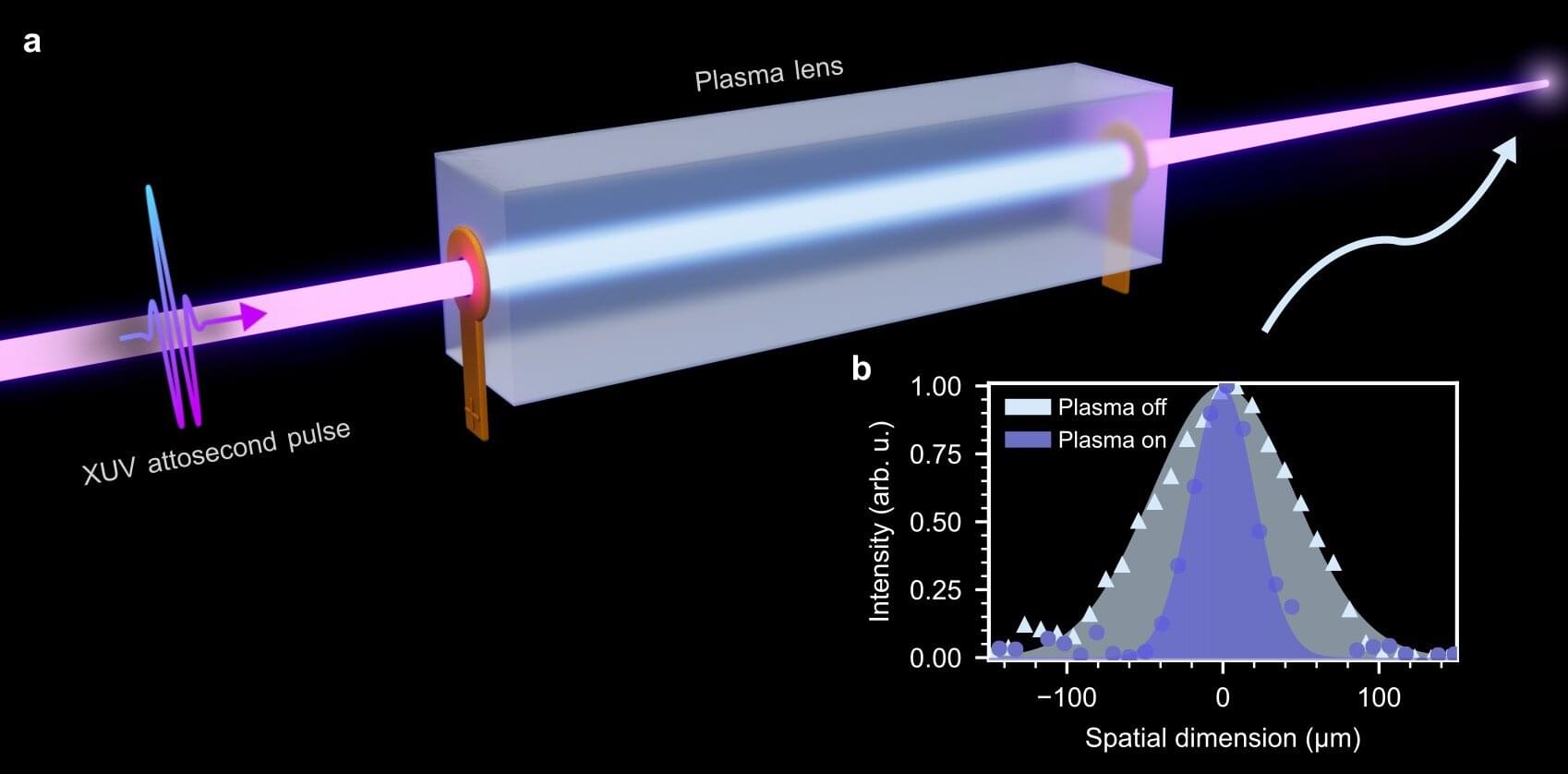

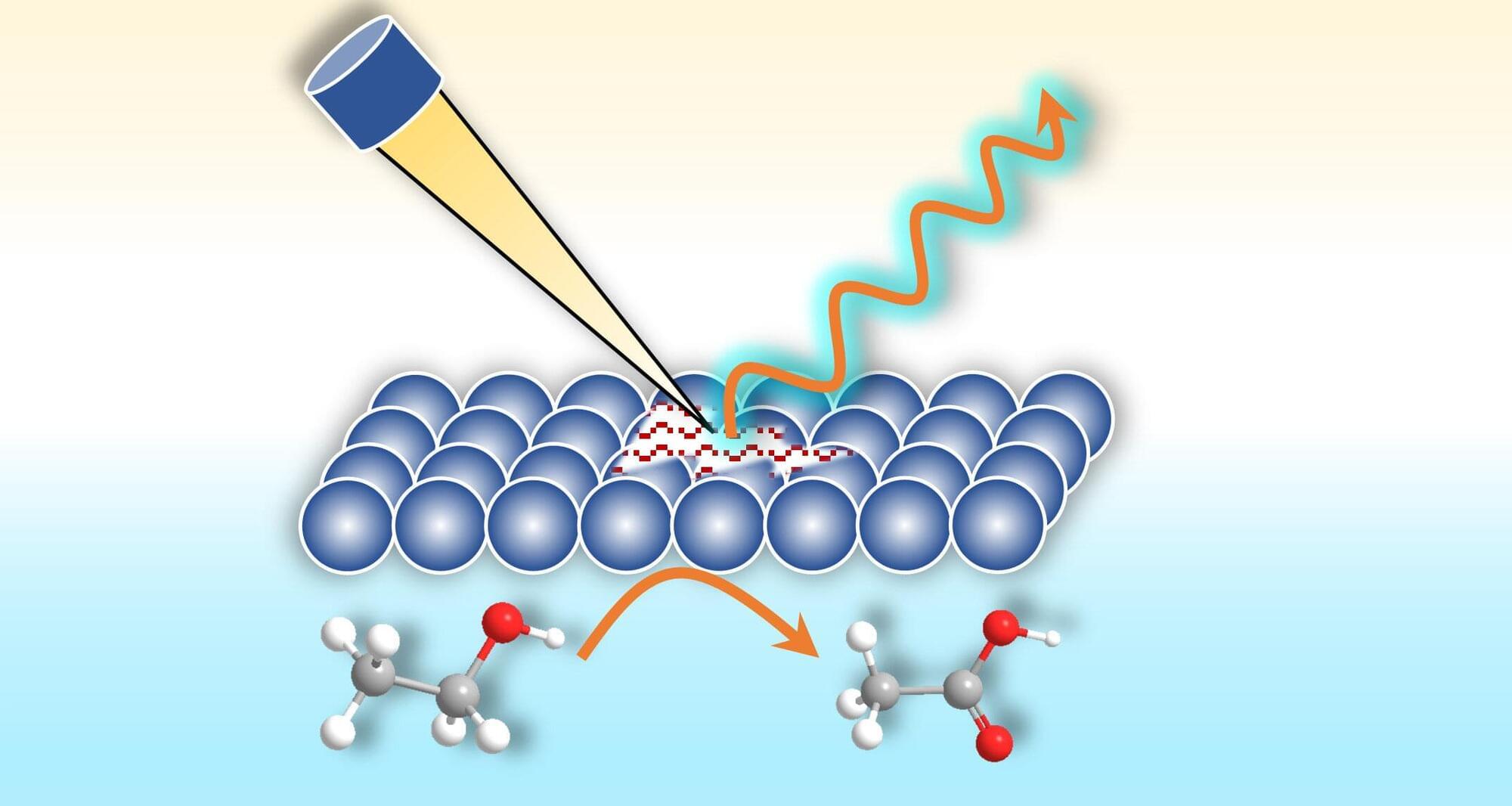

A team of researchers from the Max Born Institute (MBI) in Berlin and DESY in Hamburg has demonstrated a plasma lens capable of focusing attosecond pulses. This breakthrough substantially increases the attosecond power available for experiments, opening up new opportunities for studying ultrafast electron dynamics. The results have now been published in Nature Photonics.

Attosecond pulses (bursts of light lasting only billionths of a billionth of a second) are essential tools for observing and controlling electronic motion in atoms, molecules, and solids. However, focusing these pulses, which lie in the extreme-ultraviolet (XUV) or X-ray region of the electromagnetic spectrum, has proven highly challenging due to the lack of suitable optics.

Mirrors are commonly used, but they offer low reflectivity and degrade quickly. Lenses, though the most straightforward tool for focusing visible light, are unsuitable for attosecond pulses because they absorb XUV light and stretch the pulses in time.