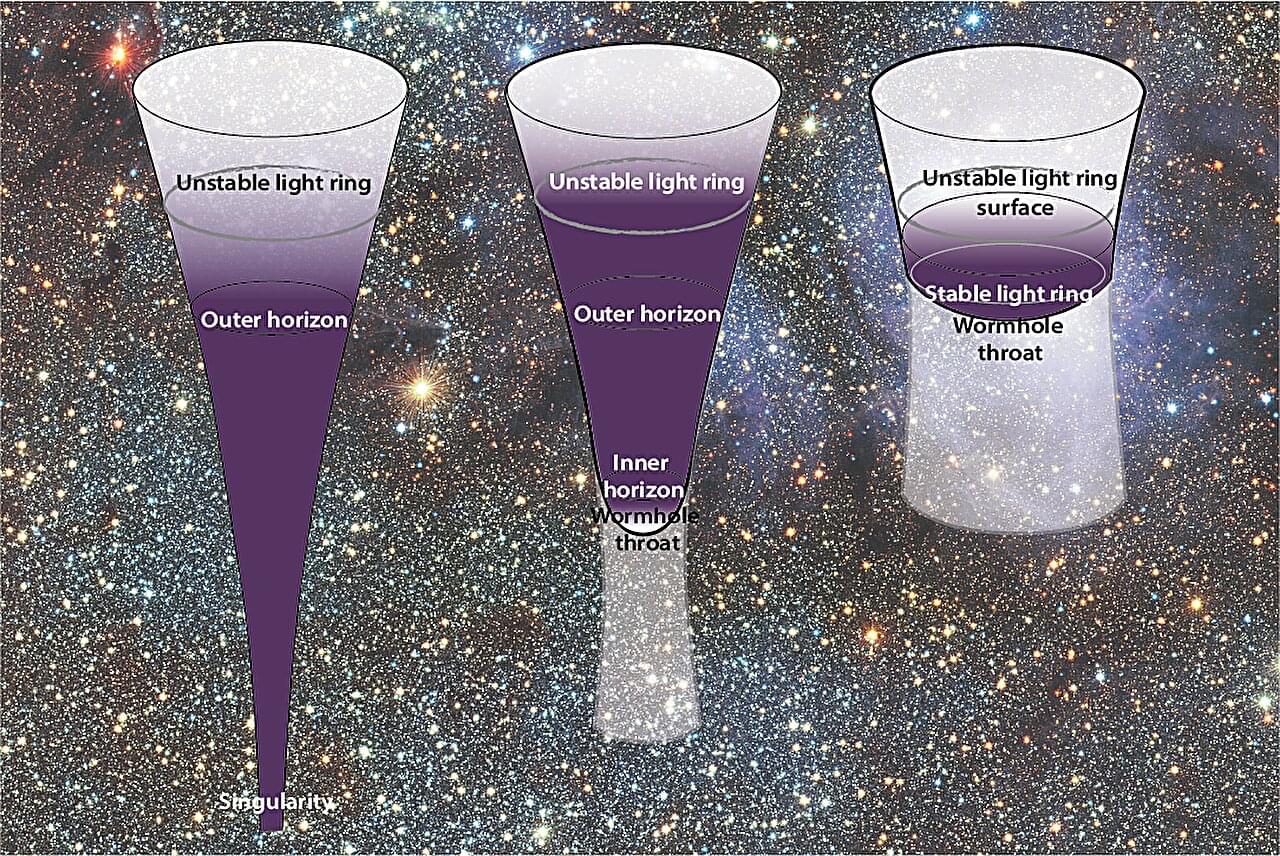

Ever since general relativity pointed to the existence of black holes, the scientific community has been wary of one peculiar feature: the singularity at the center—a point, hidden behind the event horizon, where the laws of physics that govern the rest of the universe appear to break down completely. For some time now, researchers have been working on alternative models that are free of singularities.

A new paper published in the Journal of Cosmology and Astroparticle Physics, the outcome of work carried out at the Institute for Fundamental Physics of the Universe (IFPU) in Trieste, reviews the state of the art in this area. It describes two alternative models, proposes observational tests, and explores how this line of research could also contribute to the development of a theory of quantum gravity.

“Hic sunt leones” (Latin for “here are lions”), remarks Stefano Liberati, one of the authors of the paper and director of IFPU. The phrase refers to the hypothetical singularity predicted at the center of standard black holes, those described by solutions to Einstein’s field equations. To understand what this means, a brief historical recap is helpful.