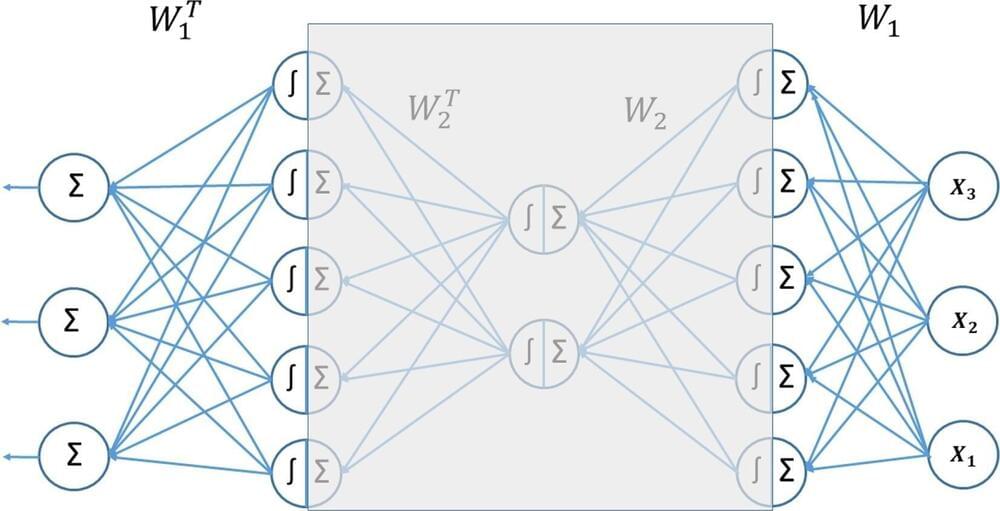

The most widely used machine learning algorithms were designed by humans and are thus hindered by our cognitive biases and limitations. Can we also construct meta-learning algorithms that learn better learning algorithms, so that our self-improving AIs have no limits other than those inherited from computability and physics? This question has been a main driver of my research since I wrote a thesis on it in 1987. In the past decade, it has become a driver of many other people’s research as well. Here I summarize our work starting in 1994 on meta-reinforcement learning with self-modifying policies in a single lifelong trial, and, since 2003, on mathematically optimal meta-learning through the self-referential Gödel Machine. This talk was previously presented at meta-learning workshops at ICML 2020 and NeurIPS 2021. Many additional publications on meta-learning can be found at https://people.idsia.ch/~juergen/metalearning.html.

Jürgen Schmidhuber

Director, AI Initiative, KAUST

Scientific Director of the Swiss AI Lab IDSIA

Co-Founder & Chief Scientist, NNAISENSE

http://www.idsia.ch/~juergen/blog.html