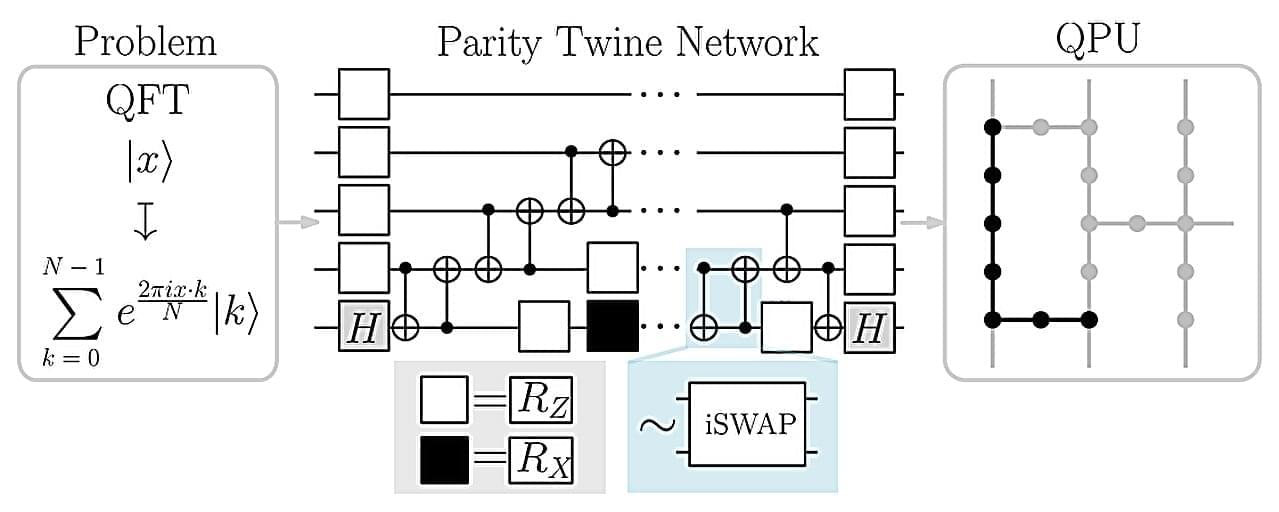

The spin-off company ParityQC has used an IBM quantum computer to implement the largest quantum Fourier transform reported to date, setting a new milestone on the path toward the industrial application of quantum computers. The quantum Fourier transform is a cornerstone algorithm with applications in cryptography, financial modeling, and materials science.

The Innsbruck-based quantum architecture company ParityQC performed the quantum Fourier transform on 52 superconducting qubits of an IBM Heron quantum processor. This surpasses the previous record of 27 qubits, which was set two years ago on an ion-trap quantum computer. The results were published this week on the arXiv preprint server.

“This milestone was only possible through the synergy of IBM’s latest quantum hardware and the ParityQC Architecture, which unlocked an exponential improvement in efficiency,” say Wolfgang Lechner and Magdalena Hauser, Co-CEOs of ParityQC. “What we are witnessing is European quantum innovation taking a global lead in translating theoretical potential into real-world performance.”