Data governs our lives more than ever. But when it comes to disease and death, every data point is a person, someone who became sick and needed treatment.

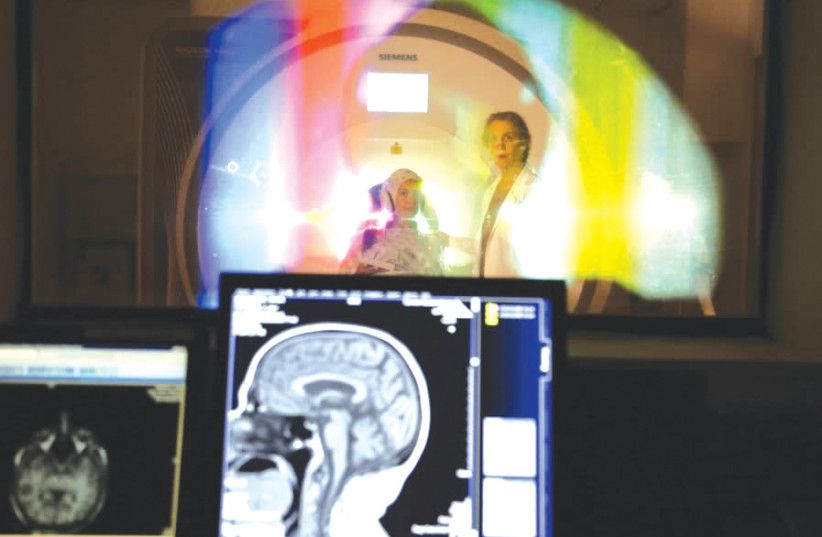

Recent studies have revealed that people with the same category of disease may show different manifestations. As doctors and scientists better understand the reasons for this variability, they can develop novel preventive, diagnostic and therapeutic approaches and provide optimal, personalized care for every patient.

Accomplishing this goal often requires broad-scale collaboration among physicians, basic researchers, theoreticians, experimentalists, computational biologists, computer scientists, data scientists, engineers, statisticians, epidemiologists and others. They must work together to integrate scientific and medical knowledge, theory, analysis of medical big data and extensive experimental work.

This year, the Israel Precision Medicine Partnership (IPMP) selected 16 research projects to receive NIS 60 million in grants with the goal of advancing the implementation of personalized healthcare approaches – providing the right treatment to the right patient at the right time. All the research projects pull data from Israel’s unique and vast medical databases.

HEALTH AND SCIENCE AFFAIRS: 16 Israeli projects get NIS 60m. to innovate next stage of healthcare.