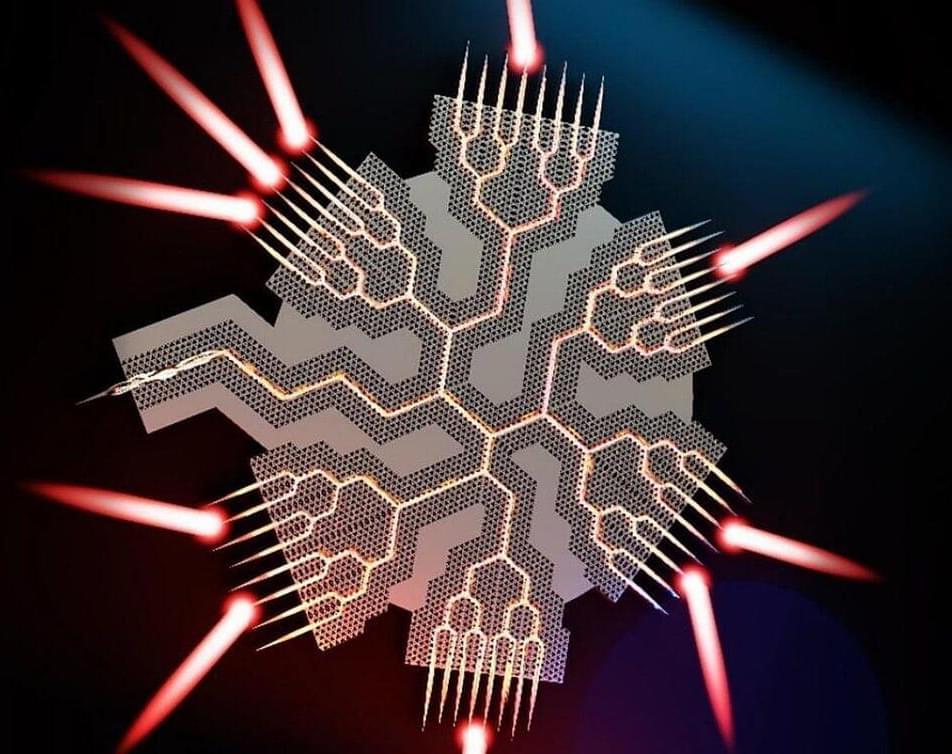

HAPPI seeks demonstrations of low-loss, high-density optical interconnects using scalable manufacturing compatible with microelectronics.


NAVWAR awarded the order on behalf of the Navy’s Program Executive Office for Command, Control, Communication, Computers, and Intelligence (PEO C4I) in San Diego.

The AN/USC-61(C) Digital Modular Radio (DMR) is a maritime software-defined radio (SDR) that has become standard for the U.S. military. The compact, multi-channel DMR provides several different waveforms and multi-level information security for voice and data communications.

“Not being able to communicate is so frustrating and demoralizing. It is like you are trapped,” Harrell said. “Something like this technology will help people back into life and society.”

For the researchers involved, seeing the impact of their work on Harrell’s life has been deeply rewarding. “It has been immensely rewarding to see Casey regain his ability to speak with his family and friends through this technology,” said the study’s lead author, Nicholas Card, a postdoctoral scholar in the UC Davis Department of Neurological Surgery.

Leigh Hochberg, a neurologist and neuroscientist involved in the BrainGate trial, praised Harrell and other participants for their contributions to this groundbreaking research. “Casey and our other BrainGate participants are truly extraordinary. They deserve tremendous credit for joining these early clinical trials,” Hochberg said. “They do this not because they’re hoping to gain any personal benefit, but to help us develop a system that will restore communication and mobility for other people with paralysis.”

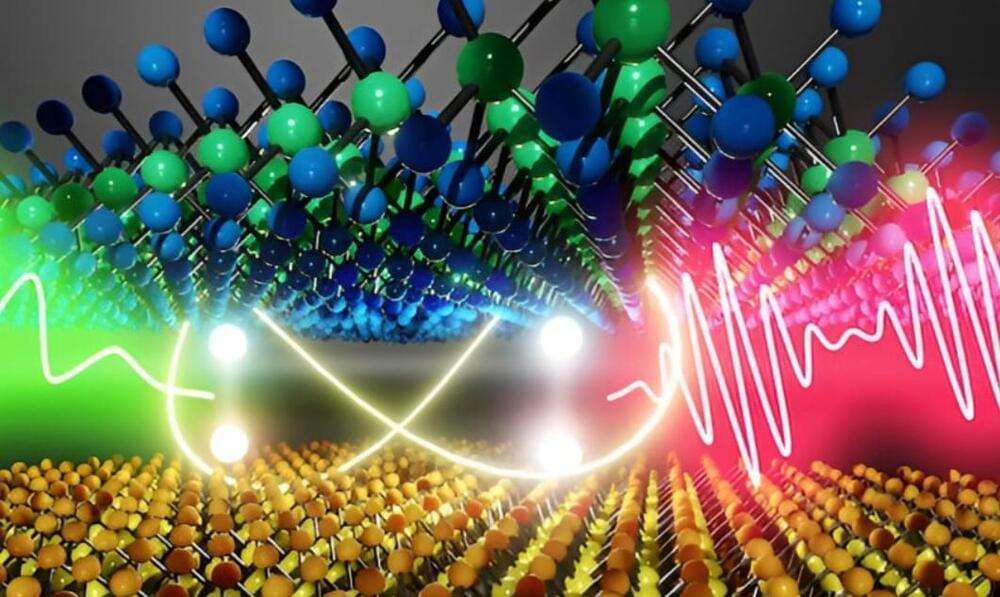

Quantum technology relies on qubits, the fundamental units of quantum computers, whose operation is influenced by quantum coherence time. Scientists believe that moiré excitons — electron-hole pairs trapped in overlapping moiré interference fringes — could serve as qubits in future nano-semiconductors. However, previous limitations in focusing light have caused optical interference, making it difficult to measure these excitons accurately.

Kyoto University researchers have developed a new technique to reduce moiré excitons, allowing for accurate measurement of quantum coherence time. Their findings, published in Nature Communications, reveal that the quantum coherence of a single moiré exciton remains stable for over 12 picoseconds at −269°C, significantly longer than that of excitons in traditional two-dimensional semiconductors. The confined moiré excitons in interference fringes help maintain quantum coherence, advancing the potential of quantum technology.

“We combined electron beam microfabrication techniques with reactive ion etching. By utilizing Michelson interferometry on the emission signal from a single moiré exciton, we could directly measure its quantum coherence time,” said Kazunari Matsuda of KyotoU’s Institute of Advanced Energy.

Karl Friston is a leading neuroscientist and pioneer of the free energy principle, celebrated for his influential work in computational neuroscience and his profound impact on understanding brain function and cognition. Karl is a Professor of Neuroscience at University College London and a Fellow of the Royal Society, with numerous awards recognizing his contributions to theoretical neurobiology.


Microsoft today removed an arbitrary 32GB size limit for FAT32 partitions in the latest Windows 11 Canary build, raising the maximum size to 2TB.

“When formatting disks from the command line using the format command, we’ve increased the FAT32 size limit from 32GB to 2TB,” the Windows Insider team said today.

Previously, despite this artificial 32GB limit, Windows systems could still read larger FAT32 file systems if they were created on other operating systems or through alternative methods (e.g., from a Windows PowerShell prompt with administrative privileges or using third-party apps that ignored this artificial size limit).
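For readers wondering where a 2TB ceiling could come from, a rough sketch of the arithmetic follows. It assumes the commonly cited explanation that FAT32 stores a volume's total sector count in a 32-bit field and that sectors are 512 bytes; the function name and constants are ours for illustration and are not taken from Microsoft's announcement.

```python
# Illustrative arithmetic only: assumes a 32-bit total-sector count
# and 512-byte sectors (both are assumptions, not from the announcement).
SECTOR_COUNT_BITS = 32
GIB = 2 ** 30
TIB = 2 ** 40


def max_volume_bytes(bytes_per_sector: int = 512) -> int:
    """Largest volume addressable with a 32-bit sector count."""
    return (2 ** SECTOR_COUNT_BITS) * bytes_per_sector


if __name__ == "__main__":
    new_limit = max_volume_bytes()
    old_limit = 32 * GIB  # the former cap applied by the format command
    print(new_limit // TIB)        # 2
    print(new_limit // old_limit)  # 64
```

Under those assumptions, the new ceiling is 64 times larger than the old 32GB cap.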

Security researchers disclosed proof-of-concept (PoC) exploit code for three vulnerabilities (CVE-2023-4206, CVE-2023-4207, and CVE-2023-4208) in the Linux kernel, impacting versions v3.18-rc1 to v6.5-rc4. These “use-after-free” vulnerabilities within the net/sched component could allow local privilege escalation, enabling attackers to gain unauthorized control over affected systems. The vulnerabilities have been given a CVSS score of 7.8, indicating high severity.
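To show how a score of 7.8 lands in the "high" band, here is a minimal sketch of the CVSS v3.x qualitative rating scale. The function name is hypothetical, and the sketch assumes the reported score is a v3.x base score, which the report does not state.

```python
def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"       # 0.1 to 3.9
    if score < 7.0:
        return "Medium"    # 4.0 to 6.9
    if score < 9.0:
        return "High"      # 7.0 to 8.9
    return "Critical"      # 9.0 to 10.0


if __name__ == "__main__":
    print(cvss_severity(7.8))  # High
```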

Quantum is huge. Because quantum computing allows us to step beyond the current limitations of digital systems, it paves the way for a new era of computing machines with previously unthinkable power. Rather than recount at length yet another simplified explanation of how quantum gets its power, we can reference the double-slit experiment and perhaps the spinning coin explanation.

A coin sat on a desk is either heads or tails, rather like the 1s and 0s that express the on or off values in binary code. Quantum theorists would prefer we think of the coin above the desk, spinning in the air. In this state, the coin is both heads and tails at the same time. This is because, at the quantum level, both values exist until we make an observation of its state at any given point in time. We could further increase the number of positions possible (literally known as quantum superposition) by altering the angle of view we take on the coin, which is somewhat similar to how we work with qubits in quantum mechanics.
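For those who prefer code to coins, the analogy can be loosely sketched as follows. This is a toy model, not a quantum simulator: the function names are ours, a qubit is reduced to two amplitudes, and the "angle of view" is assumed to correspond to a rotation angle theta that sets those amplitudes.

```python
import math
import random


def measurement_probabilities(alpha: complex, beta: complex):
    """Return (P(heads), P(tails)) for amplitudes alpha and beta."""
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm


def tilted_coin(theta: float):
    """Amplitudes for a coin 'viewed' at angle theta (illustrative assumption)."""
    return math.cos(theta / 2), math.sin(theta / 2)


def measure(alpha: complex, beta: complex, rng: random.Random) -> str:
    """Observe the coin: the superposition resolves to a single outcome."""
    p_heads, _ = measurement_probabilities(alpha, beta)
    return "heads" if rng.random() < p_heads else "tails"


if __name__ == "__main__":
    # The spinning coin: equal parts heads and tails until observed.
    spinning = tilted_coin(math.pi / 2)
    print(measurement_probabilities(*spinning))

    # Changing the angle shifts the odds without picking a side.
    print(measurement_probabilities(*tilted_coin(math.pi / 3)))

    # Each observation yields one definite result.
    rng = random.Random(0)
    print([measure(*spinning, rng) for _ in range(5)])
```

The point of the sketch is only that the state carries a continuous range of possible weightings between the two outcomes, while any single observation returns just one of them.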

So then, Schrödinger’s cat is both alive and dead at the same time, and the dummies’ guide to quantum entanglement is out there on the web if needed. What matters most now is how we will make practical use of quantum computing and where it will be applied to best advantage.

A University of Portsmouth physicist has explored whether a new law of physics could support the much-debated theory that we are simply characters in an advanced virtual world.

The simulated universe hypothesis proposes that what humans experience is actually an artificial reality, much like a computer simulation, in which they themselves are constructs.

The theory is popular among a number of well-known figures including Elon Musk, and within a branch of science known as information physics, which suggests physical reality is fundamentally made up of bits of information.