The concept could extend usage time and, thanks to a lighter device, broaden the range of possible applications.

Microsoft’s recently approved patent for augmented reality (AR) glasses shows a swappable battery that could make the glasses a top choice among buyers once they become available. The patent was published last week, MSPowerUser reported.

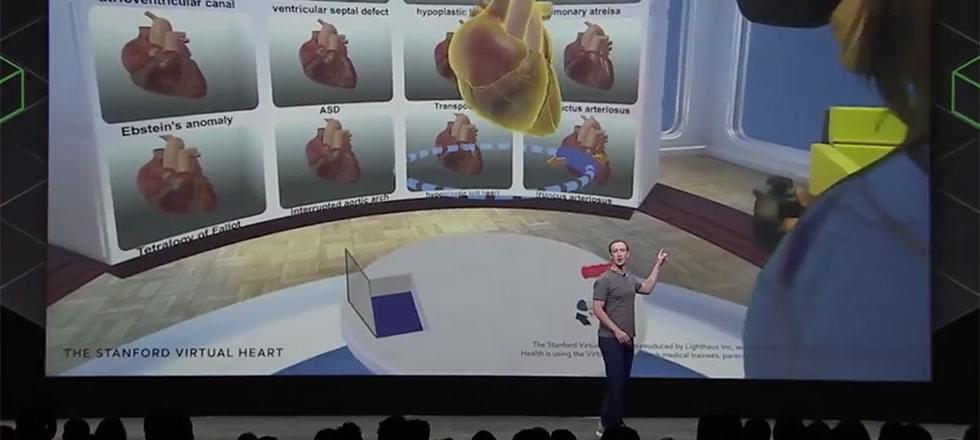

AR glasses are widely considered the next frontier of mobile technology, one that promises to replace today's smartphones. About a decade ago, Google attempted something along these lines and released Google Glass to the public. However, high costs and limited functionality ultimately led to its demise, even though the concept itself continues to thrive.