An antiferromagnet with a zigzag magnetic structure exhibits a diode effect that has potential applications in spintronics.

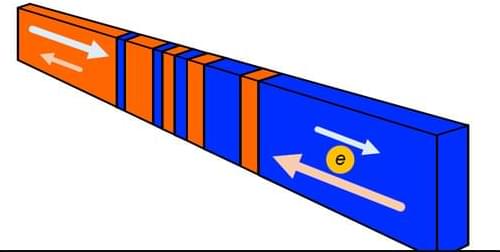

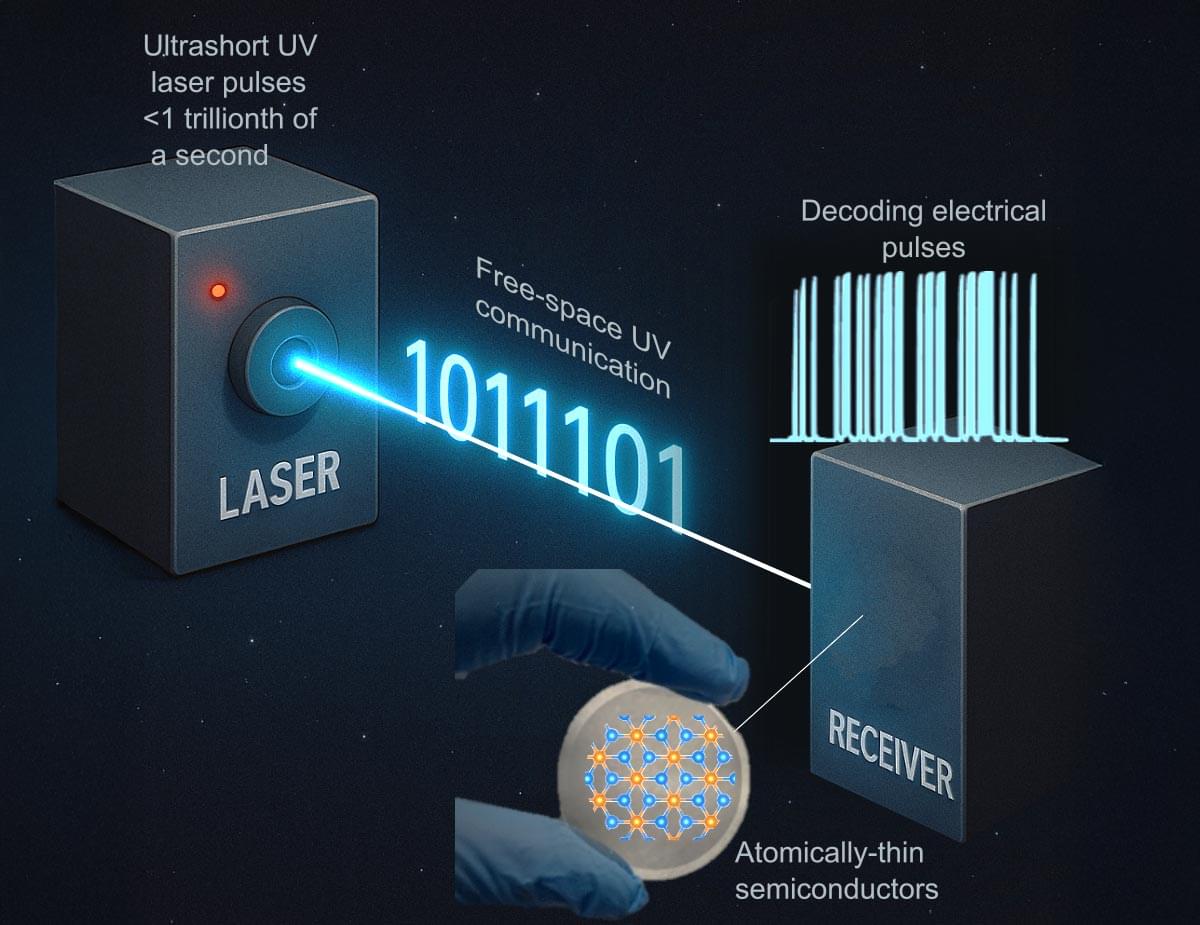

In a traditional diode, current flows in one direction only, thanks to an internal charge imbalance. Researchers have now shown a diode-like effect in an antiferromagnet with a zigzag magnetic structure [1]. The underlying mechanism differs from that in traditional diodes: the zigzag pattern creates a combination of internal magnetic and electric fields that favors current flow in one direction. The diode effect in the antiferromagnet is relatively weak, so rather than using it to make diodes for conventional circuits, the team foresees possible applications in spintronics, a field of devices that make use of electron spins.

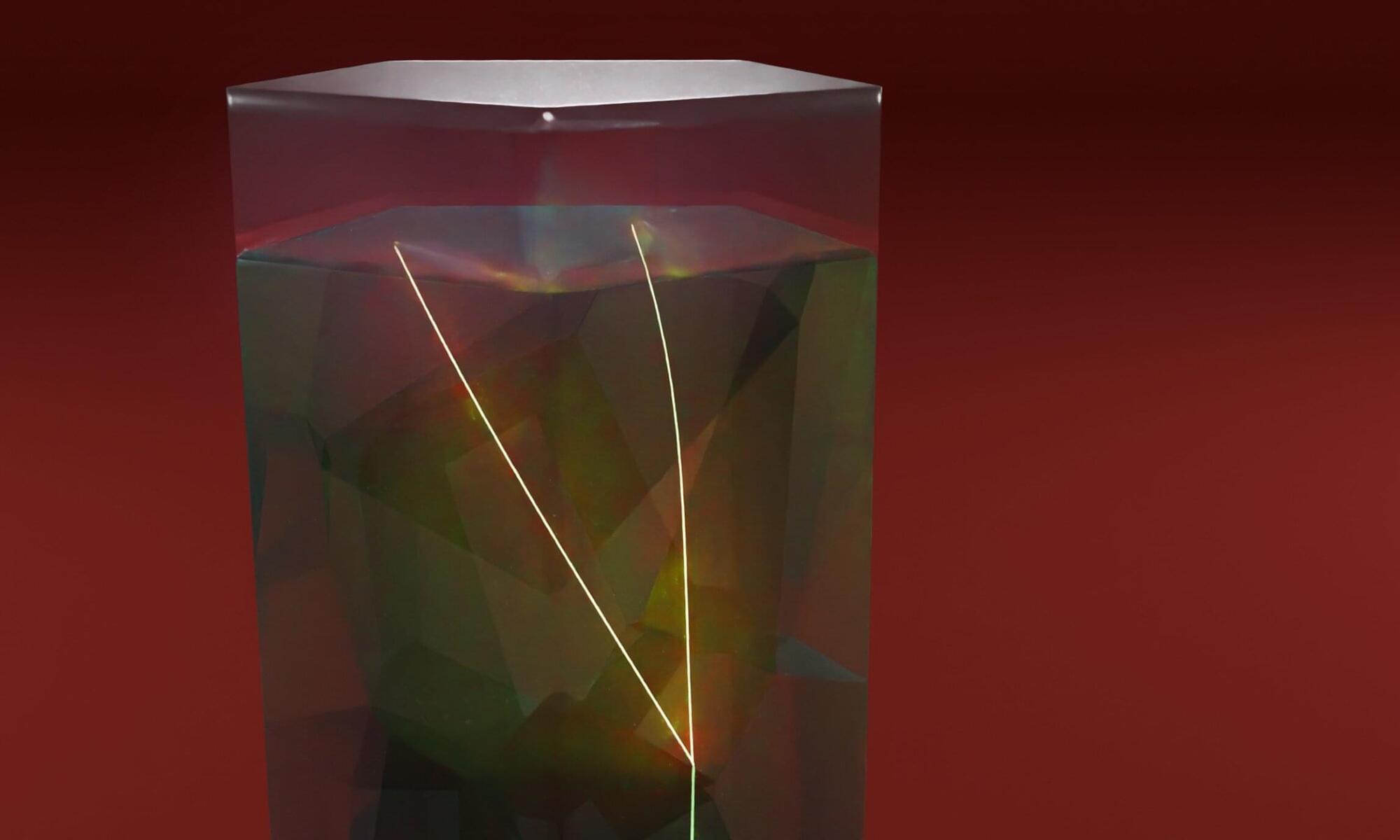

A typical diode is a junction between two semiconductors having different charge carriers. The charge imbalance across this junction allows current to flow in only one direction. Diode-like behavior can, in principle, occur in a single material, but it requires the material's internal structure to be asymmetric in a particular way. This asymmetry should produce two effects: an internal electric field and an internal magnetic field. When those two fields are perpendicular to each other, they give rise to what is called a toroidal moment, which acts on electrons moving through the material in a one-way fashion, explains Kenta Sudo from Tohoku University in Japan.
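To make the geometric condition concrete, here is a minimal toy sketch, not the team's model. It assumes, as a common phenomenological picture, that the directional "toroidal" vector is proportional to the cross product of the internal electric and magnetic fields, and that the resistance shifts linearly with the projection of that vector onto the current. The function names and the parameters `r0` and `gamma` are hypothetical and chosen only for illustration.

```python
# Toy model (assumption, not from the study): a "toroidal" vector built as the
# cross product of internal electric and magnetic field directions, and a
# resistance that depends linearly on that vector's projection onto the current.

def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

def dot(a, b):
    """Dot product of two 3-vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))

def toroidal_vector(e_field, b_field):
    # Zero when the two fields are parallel; largest when they are perpendicular.
    return cross(e_field, b_field)

def resistance(current, t_vec, r0=1.0, gamma=0.05):
    # Hypothetical parameters: r0 is the baseline resistance, gamma sets the
    # strength of the one-way effect. R(I) = r0 * (1 + gamma * t . I), so
    # reversing the current changes R only when t . I is nonzero.
    return r0 * (1.0 + gamma * dot(t_vec, current))

if __name__ == "__main__":
    e = (1.0, 0.0, 0.0)        # internal electric field along x
    b_perp = (0.0, 1.0, 0.0)   # magnetic field along y (perpendicular to e)
    b_par = (1.0, 0.0, 0.0)    # magnetic field along x (parallel to e)
    i_fwd = (0.0, 0.0, 1.0)    # current along +z
    i_rev = (0.0, 0.0, -1.0)   # current along -z

    t = toroidal_vector(e, b_perp)   # points along +z
    print("perpendicular fields:", resistance(i_fwd, t), resistance(i_rev, t))

    t0 = toroidal_vector(e, b_par)   # zero vector
    print("parallel fields:", resistance(i_fwd, t0), resistance(i_rev, t0))
```

With perpendicular fields the forward and reverse resistances differ, which is the one-way behavior in miniature; with parallel fields the cross product vanishes and the two directions behave identically. Which direction is favored depends on the sign of the hypothetical `gamma`.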