Aug 28, 2021

Cerebras Upgrades Trillion-Transistor Chip to Train ‘Brain-Scale’ AI

Posted by Chima Wisdom in categories: innovation, robotics/AI

Much of the recent progress in AI has come from building ever-larger neural networks. A new chip powerful enough to handle “brain-scale” models could turbo-charge this approach.

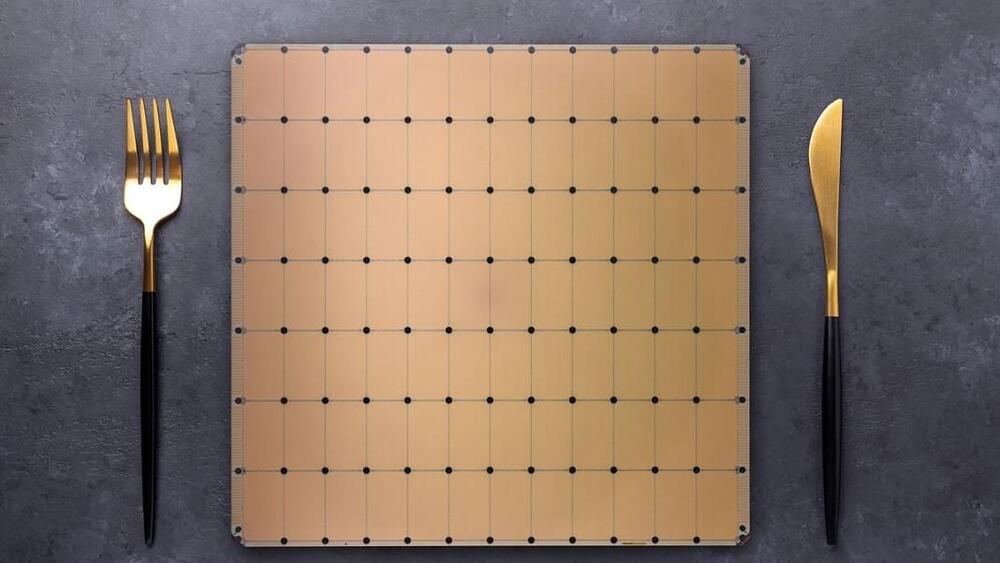

Chip startup Cerebras leaped into the limelight in 2019 when it came out of stealth to reveal a 1.2-trillion-transistor chip. The size of a dinner plate, the chip is called the Wafer Scale Engine and was the world’s largest computer chip. Earlier this year Cerebras unveiled the Wafer Scale Engine 2 (WSE-2), which more than doubled the number of transistors to 2.6 trillion.

Now the company has outlined a series of innovations that mean its latest chip can train a neural network with up to 120 trillion parameters. For reference, OpenAI’s revolutionary GPT-3 language model contains 175 billion parameters. The largest neural network to date, which was trained by Google, had 1.6 trillion parameters.