Researchers develop skyrmion-based microelectronic device for sustainable, high-performance AI computing with energy-efficient technology.

DoorDash and Wing (an Alphabet company) have announced their first joint drone delivery service in the United States. Targeted at select customers in Christiansburg, Virginia, eligible DoorDash orders from Wendy’s can now be delivered via Wing drone.

Wendy’s orders will be delivered using a Wing drone, which can travel up to 65 mph. Upon reaching its destination, the drone will lower the order to the doorstep using a tether.

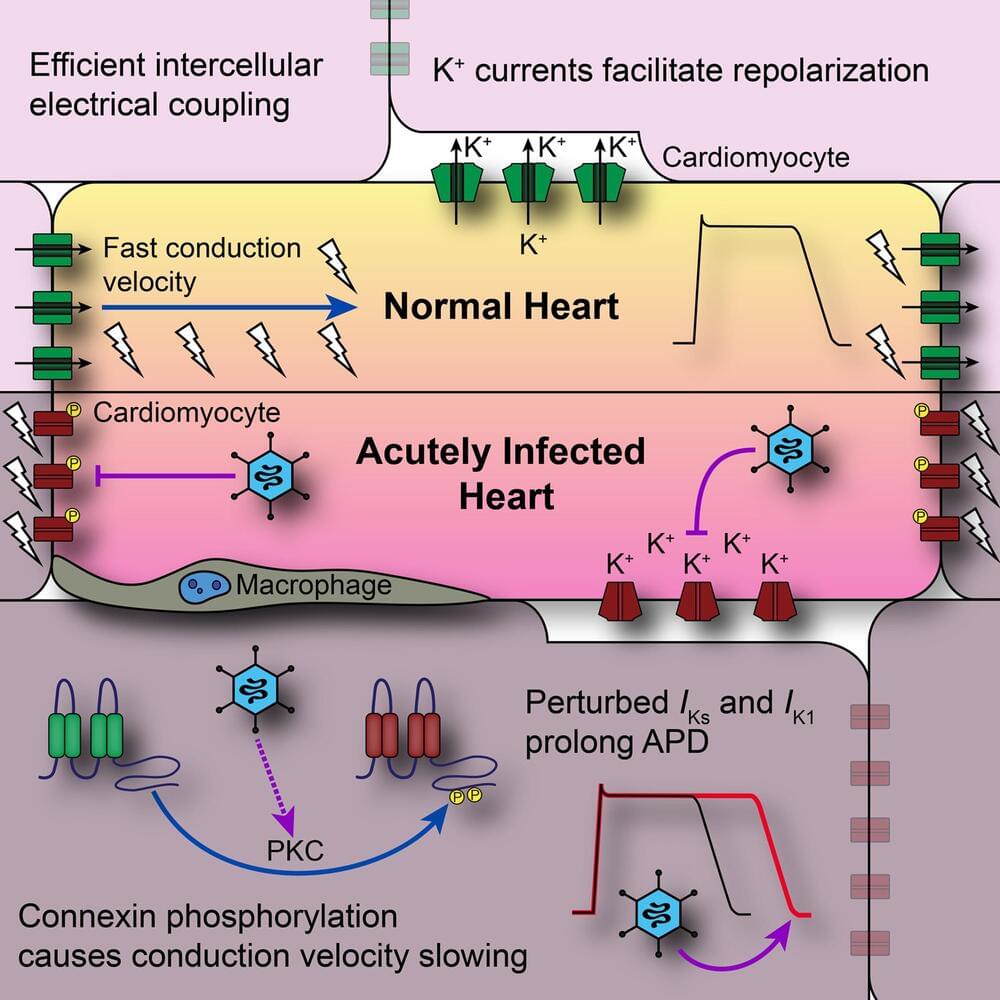

In a potentially game-changing development, scientists with the Fralin Biomedical Research Institute at VTC have revealed a new understanding of sometimes fatal viral infections that affect the heart.

Traditionally, the focus has been on heart inflammation known as myocarditis, which is often triggered by the body’s immune response to a viral infection.

However, a new study led by James Smyth, associate professor at the Fralin Biomedical Research Institute, sheds new light on this notion, revealing that the virus itself creates potentially dangerous conditions in the heart before inflammation sets in.

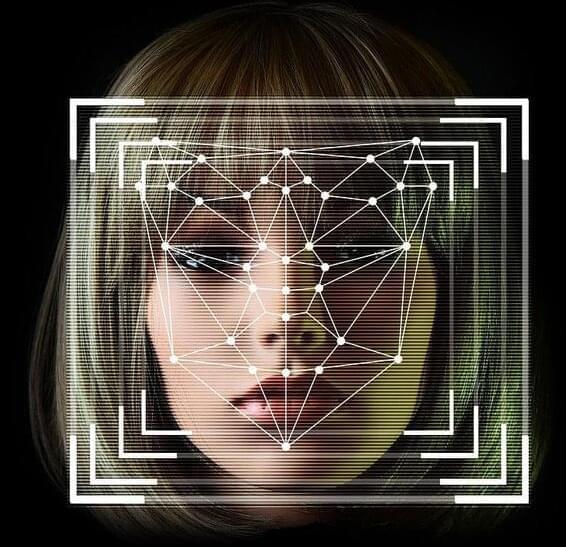

Recent advancements in artificial intelligence make it increasingly hard to detect deepfake voices, and the solution might actually come from AI itself.

Scientists at Klick Labs were inspired by their clinical studies using vocal biomarkers to help enhance health outcomes and created an audio deepfake detection method that taps into signs of life like breathing patterns and micropauses in speech.

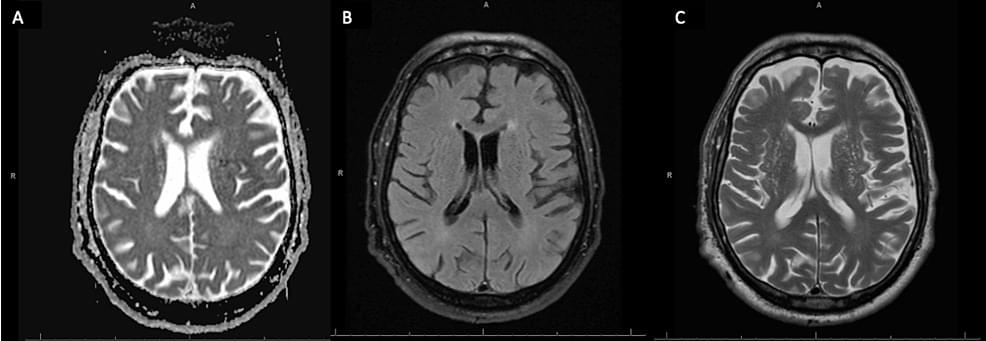

Diving into the complexities of Seronegative Autoimmune Encephalitis — a journey through diagnostic hurdles and treatment paths. Discover more: 👉 https://bit.ly/3TwTulh ✨

Seronegative autoimmune encephalitis (AE) is a rare, immune-mediated inflammatory syndrome that presents with a wide spectrum of neuropsychiatric symptoms, such as cognitive impairment, seizures, psychosis, focal neurological deficits, and altered consciousness. This disease process presents with no identifiable autoimmune antibodies, which leads to uncertain diagnosis, delayed treatment, and prolonged hospital admissions. Early recognition and prompt treatment of AE lead to improved outcomes and disease reversibility for these patients. In this study, we present a case report of a 77-year-old male who presented with acutely altered mental status. This patient underwent an extensive workup and demonstrated no signs of clinical improvement throughout a prolonged hospital admission.

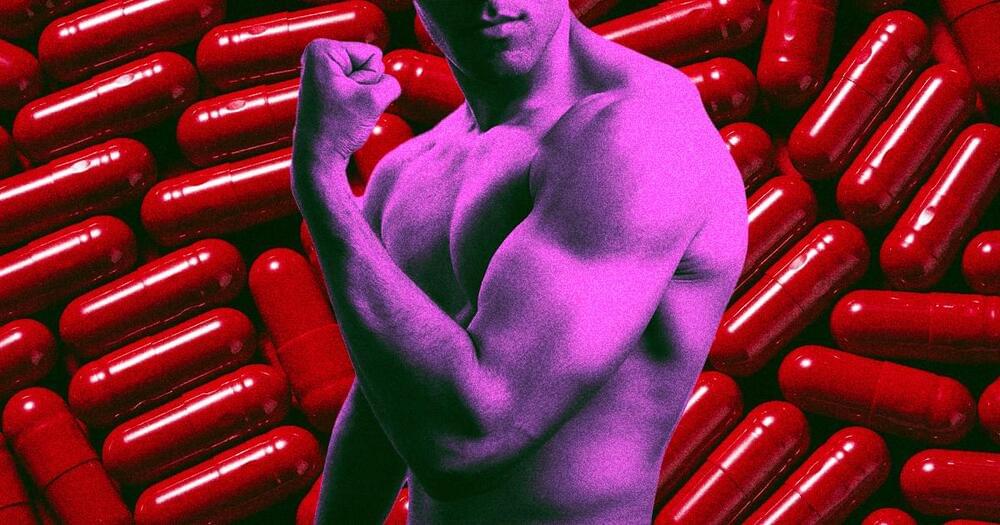

The future is going to be so lazy, yet so cut.

As next-generation weight-loss treatments like Wegovy and Zepbound continue to fly off the shelves, scientists are busy working on a medicine that could mimic the effects of exercise.

As explained in an American Chemical Society press release, trials thus far on SLU-PP-332, the potentially groundbreaking compound in question, show that it seems “capable of mimicking the physical boost of working out.”

“We cannot replace exercise; exercise is important on all levels,” Bahaa Elgendy, an anesthesiology professor at Washington University Medical School in St. Louis who serves as the principal investigator of the new compound, said in the press release. “If I can exercise, I should go ahead and get the physical activity. But there are so many cases in which a substitute is needed.”