Bioengineers demonstrated that an ATP biosensor can be used to reveal microbial energetic dynamics and facilitate bioproduction.

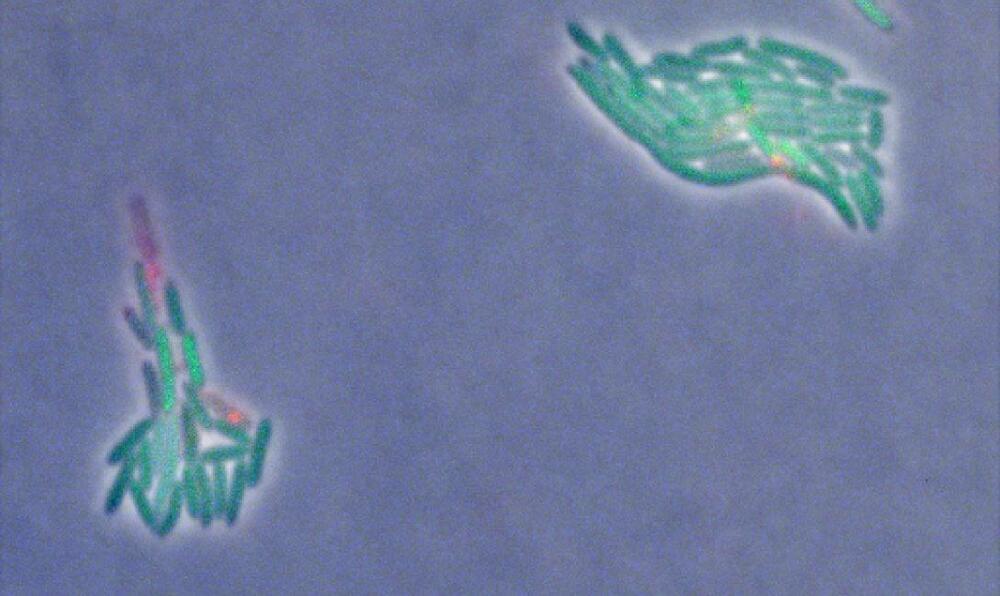

Four dedicated explorers—Jason Lee, Stephanie Navarro, Shareef Al Romaithi, and Piyumi Wijesekara—just returned from a 45-day simulated journey to Mars, testing the boundaries of human endurance and teamwork within NASA’s HERA (Human Exploration Research Analog) habitat at Johnson Space Center in Houston.

Their groundbreaking work on HERA’s Campaign 7 Mission 2 contributes to NASA’s efforts to study how future astronauts may react to isolation and confinement during deep-space journeys.

“Every mission is contributing back to the other missions and future missions in terms of new tools and techniques to develop,” said Dr. Fred Calef III. “It’s not just you working on something. It’s being able to share data between people… getting a higher order of science.”

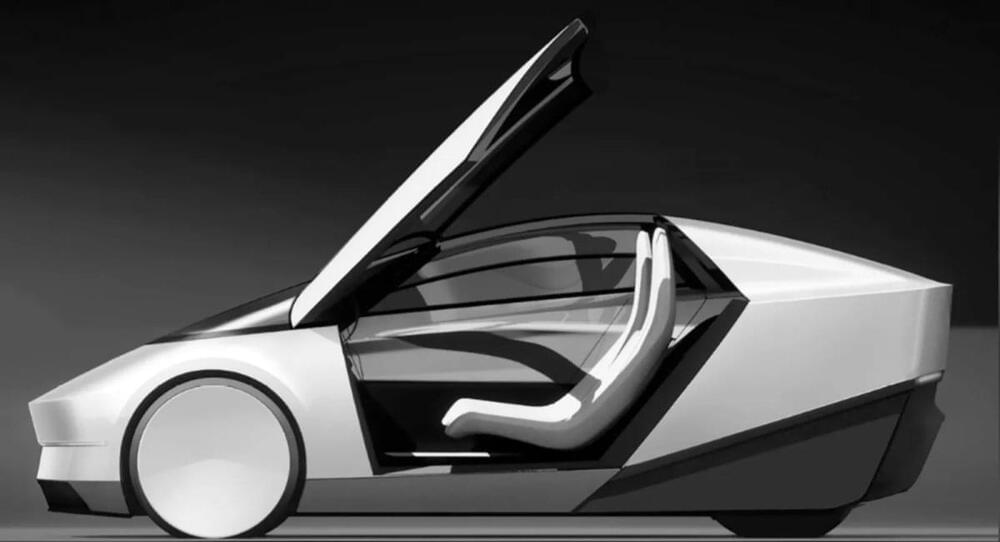

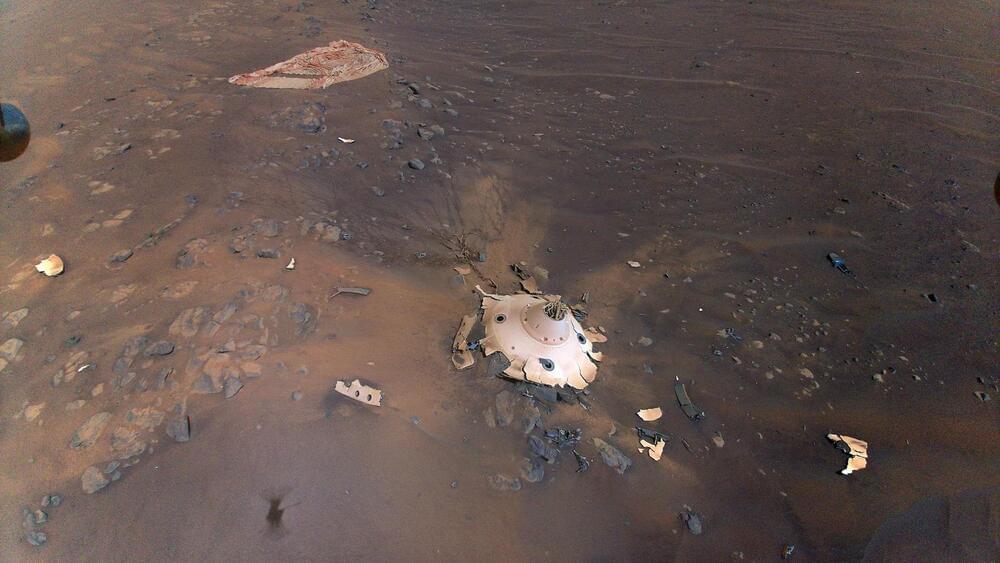

As NASA’s Perseverance rover continues to explore the surface of Mars, the open-source online mapping software known as Multi-Mission Geographic Information System (MMGIS) has been instrumental in determining the best routes for the car-sized rover and, before the Ingenuity helicopter’s “retirement,” the best landing sites for the helicopter. The software is also available to the public for following the mission, and it holds the potential to help both scientists and the public explore Mars in new and exciting ways for years to come.

“Maps and images are a common language between different people — scientists, engineers, and management,” said Dr. Nathan Williams, who is a mapping specialist at NASA JPL and was a key player in selecting Jezero Crater as the landing site for the Perseverance rover. “They help make sure everyone’s on the same page moving forward, in a united front to achieve the best science that we can.”

Officially licensed in 2022, MMGIS combines orbital and surface images so that users can follow the paths of Perseverance and its sister rover, Curiosity, which touched down in Gale Crater in 2012. What makes MMGIS unique is its user-friendly interface, which lets members of the public explore Mars without advanced data analysis experience; only minor programming knowledge is needed to download and operate the software. Through this, NASA hopes to enable the public to contribute to the goal of exploring Mars. Key features of MMGIS include 2D and 3D data maps, customized layouts, and detailed route maps of the rovers, and the software is completely free to download and use.

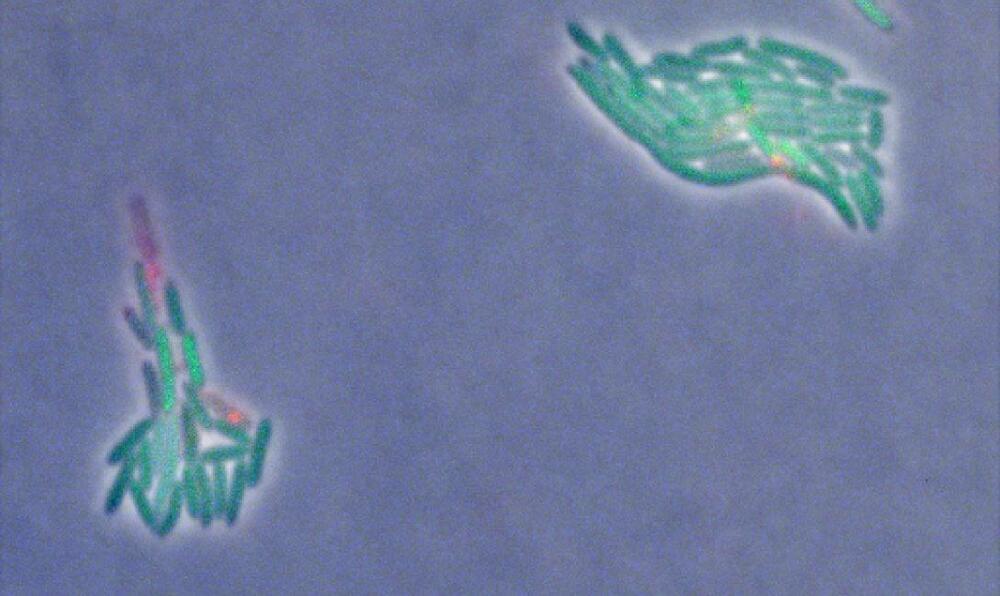

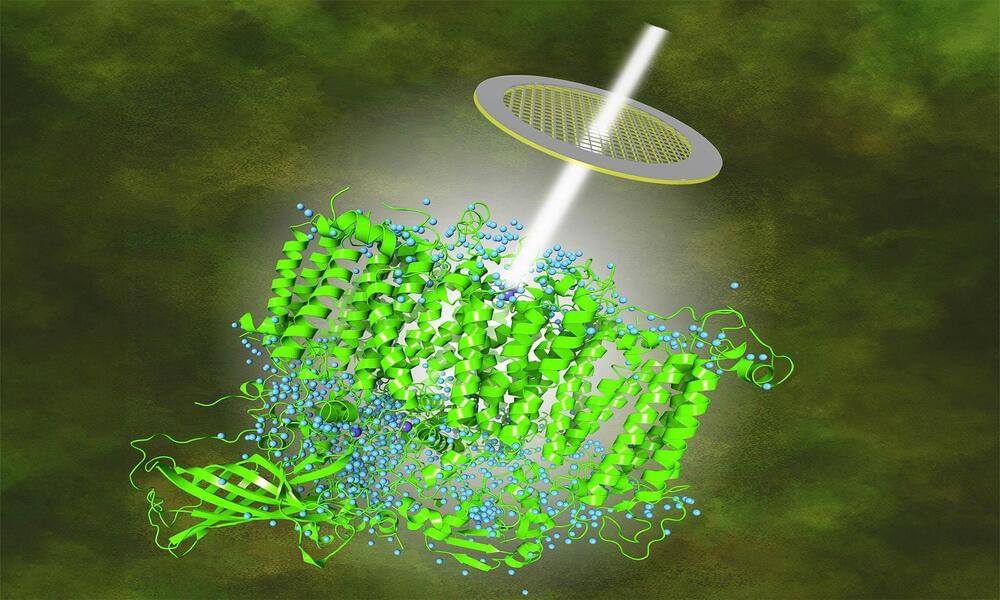

The quest to understand the enigma of photosynthesis, how water is involved, and its critical role on Earth has taken a significant leap forward.

A recent breakthrough in visual technology has produced images at a higher resolution than any achieved before, shedding new light on this essential life process.

Our story begins within the walls of a renowned institution, Umeå University, where diligent researchers embarked on a fascinating journey to understand the positions of hydrogen atoms and water molecules in photosynthesis.