Electronics contain tons of gold and copper, so we need to consider the global recovery and recycling of electronics.

Three photojournalists have created an in-depth report on electronic waste — its negative and… positive… consequences.

Summary: Researchers have developed a molecule called LaKe that mimics the metabolic effects of strenuous exercise and fasting. This molecule increases lactate and ketone levels in the body, providing similar benefits to running 10 kilometers on an empty stomach, without physical exertion or dietary changes.

Currently being tested in human trials, LaKe shows promise for helping people with limited physical ability maintain health, and may also aid in treating brain conditions like Parkinson’s and dementia. The discovery offers a potential new path for those unable to follow strict exercise or fasting routines.

The researchers also gathered behavioral data, asking participants how strongly they felt the emotions described in each story and how similar these feelings were across different objects of love. This helped the team link subjective emotional experiences to the observed brain activity.

“We use state-of-the-art technology to measure what happens in the brain when a person feels love,” Rinne told PsyPost. “We studied many different types of love and were able to show how different types of love activate the brain in different ways. Our results help explain why the word ‘love’ is used in so many different contexts. Our research also offers insights into why we feel stronger affection for those we are close to compared to strangers, even though the underlying brain processes of affection are the same for all types of interpersonal relationships.”

The study found that different types of love engage both shared and distinct regions of the brain. At a general level, all types of love activated areas associated with social cognition, including the medial prefrontal cortex, the temporoparietal junction, and the precuneus. These regions are involved in understanding others’ thoughts and emotions, a process known as theory of mind. This suggests that even when we experience love for non-human objects, like nature, our brain still engages these neural pathways.

A team of microchip engineers at Pragmatic Semiconductor, working with a pair of colleagues from Harvard University and another from Qamcom, has developed a bendable, programmable, non-silicon 32-bit RISC-V microprocessor. Their research is published in the journal Nature.

Over the past several years, hardware manufacturers have been developing bendable microprocessors for use in medical applications. A device built entirely from bendable components would make it possible to create 24-hour sensors that could be applied to any part of the body.

For this new project, the research team developed an inexpensive circuit board that can be bent around virtually any curved object. Its circuitry was made using indium gallium zinc oxide instead of the more rigid silicon.

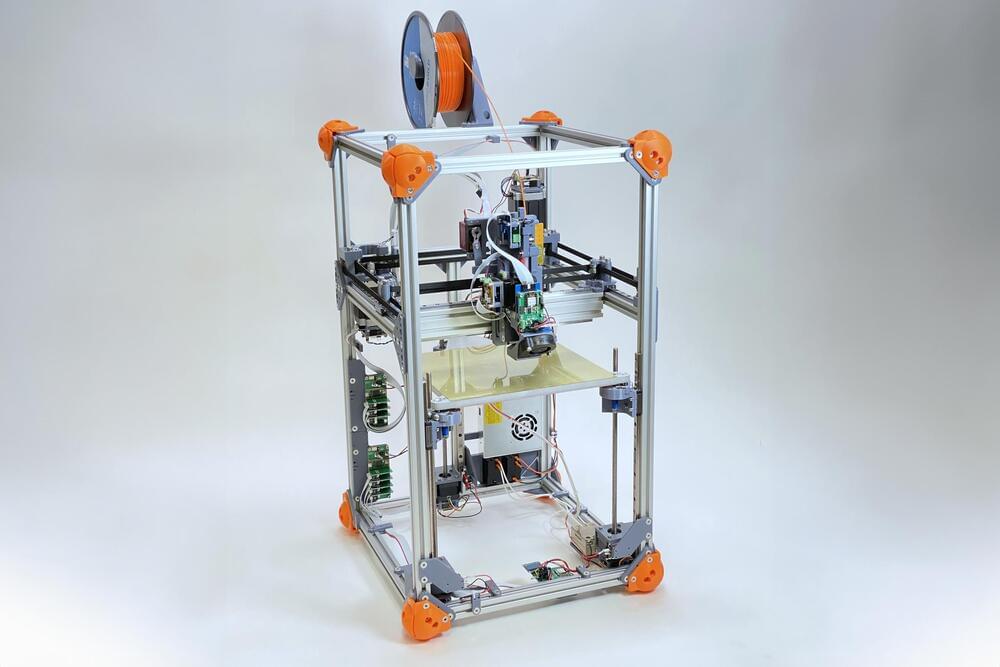

While 3D printing has exploded in popularity, many of the plastic materials these printers use to create objects cannot be easily recycled.

The automatically generated printing parameters can replace about half of the parameters that typically must be tuned by hand. In a series of test prints with unique materials, including several renewable materials, the researchers showed that their method can consistently produce viable parameters.

This research could help to reduce the environmental impact of additive manufacturing, which typically relies on nonrecyclable polymers and resins derived from fossil fuels.