Discover how NGate, a new strain of Android malware, steals contactless payment data using NFC relay attacks. Learn about this latest cybersecurity threat.

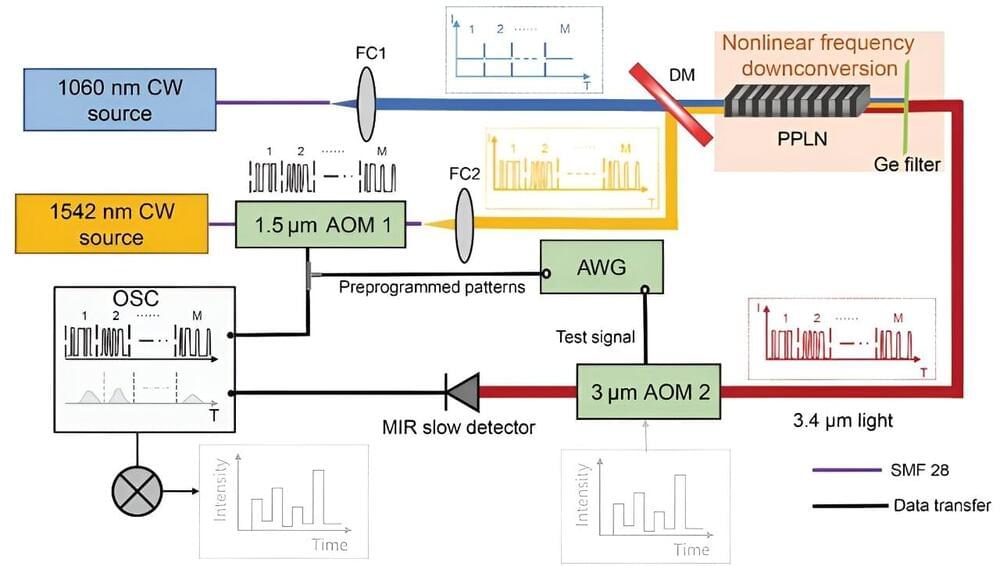

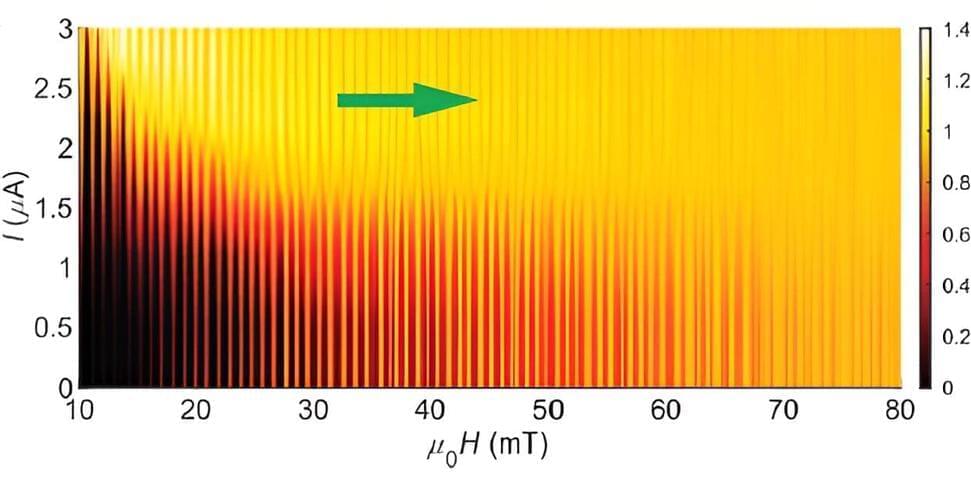

Ghost imaging in the time domain allows fast temporal objects to be reconstructed using a slow photodetector. The technique correlates random or pre-programmed probing temporal intensity patterns with the integrated signal measured after modulation by the temporal object. However, implementing temporal ghost imaging requires ultrafast detectors or modulators to measure or pre-program the probing intensity patterns, and these are not available in all spectral regions, particularly the mid-infrared.
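The correlation step described above can be illustrated with a small numerical sketch. It is not the method of the study: it assumes an idealized, noise-free setup in which the probing patterns are known, uniformly random intensities, and the slow detector simply sums the transmitted light over each shot. The function name `ghost_image`, the test object, and all sizes are hypothetical choices made for illustration.

```python
import numpy as np

def ghost_image(patterns, bucket):
    """Estimate a temporal object by correlating probing patterns with
    the integrated ("bucket") signal: G(t) = <(B - <B>) * I(t)>."""
    patterns = np.asarray(patterns, dtype=float)   # shape (shots, time bins)
    bucket = np.asarray(bucket, dtype=float)       # shape (shots,)
    fluctuation = bucket - bucket.mean()           # remove the mean bucket level
    return fluctuation @ patterns / len(bucket)    # average over all shots

rng = np.random.default_rng(0)
n_shots, n_bins = 20000, 64

# Hypothetical temporal object: a transmission profile with two windows.
obj = np.zeros(n_bins)
obj[20:30] = 1.0
obj[40:44] = 0.5

# Assumed probing patterns: independent uniform random intensities per bin.
patterns = rng.random((n_shots, n_bins))

# The slow detector cannot resolve time, so it records one integrated
# value per shot: the pattern transmitted through the object, summed.
bucket = patterns @ obj

recon = ghost_image(patterns, bucket)
similarity = np.corrcoef(recon, obj)[0, 1]
print(f"correlation between reconstruction and object: {similarity:.3f}")
```

The reconstruction is proportional to the object rather than equal to it, and its quality in this sketch improves as the number of shots grows relative to the number of time bins.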

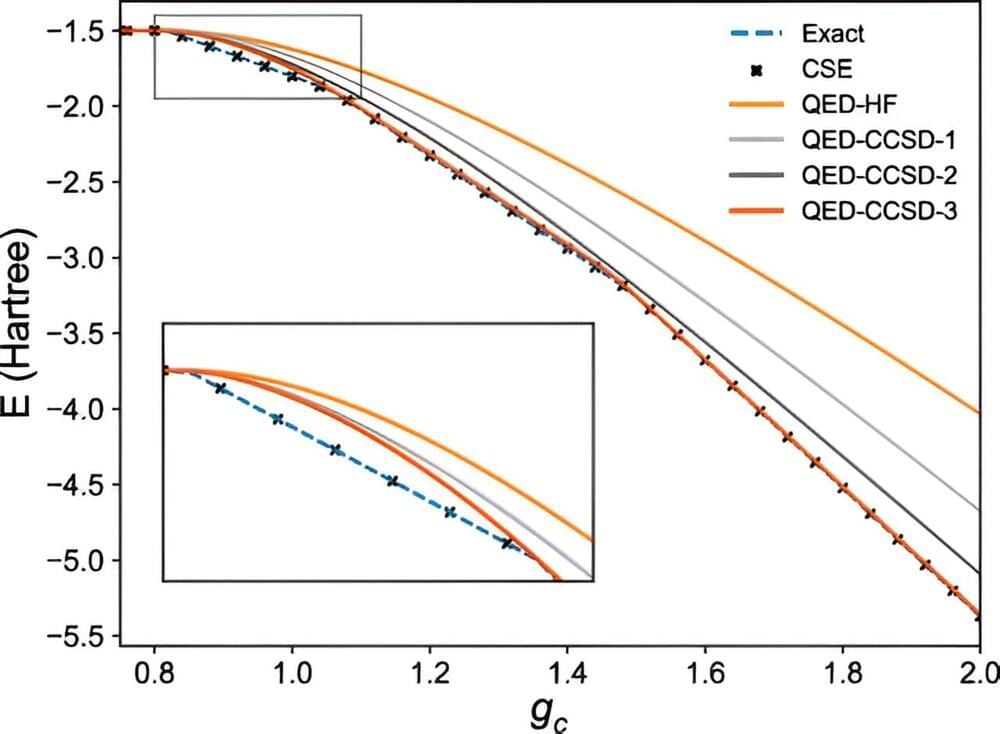

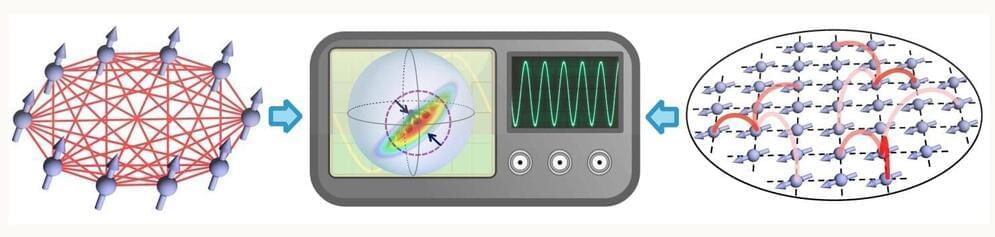

A study coordinated by the University of Trento with the University of Chicago proposes a generalized approach to the interactions between electrons and light. In the future, it may contribute to the development of quantum technologies as well as to the discovery of new states of matter. The study is published in Physical Review Letters.

Topological materials have unusual properties that arise because their wavefunction (the physical law guiding the electrons) is knotted or twisted. Where the topological material meets the surrounding space, the wavefunction must unwind. To accommodate this abrupt change, the electrons at the edge of the material must behave differently than they do in the bulk of the material.

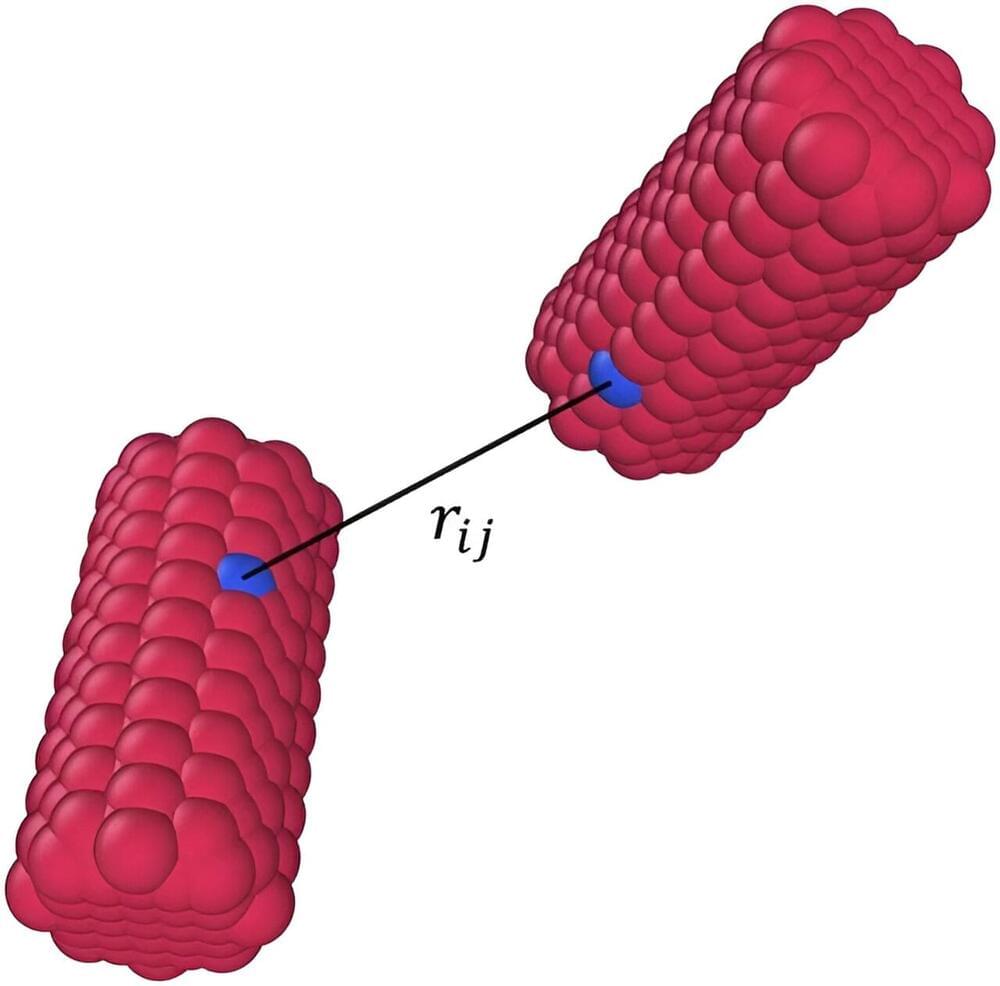

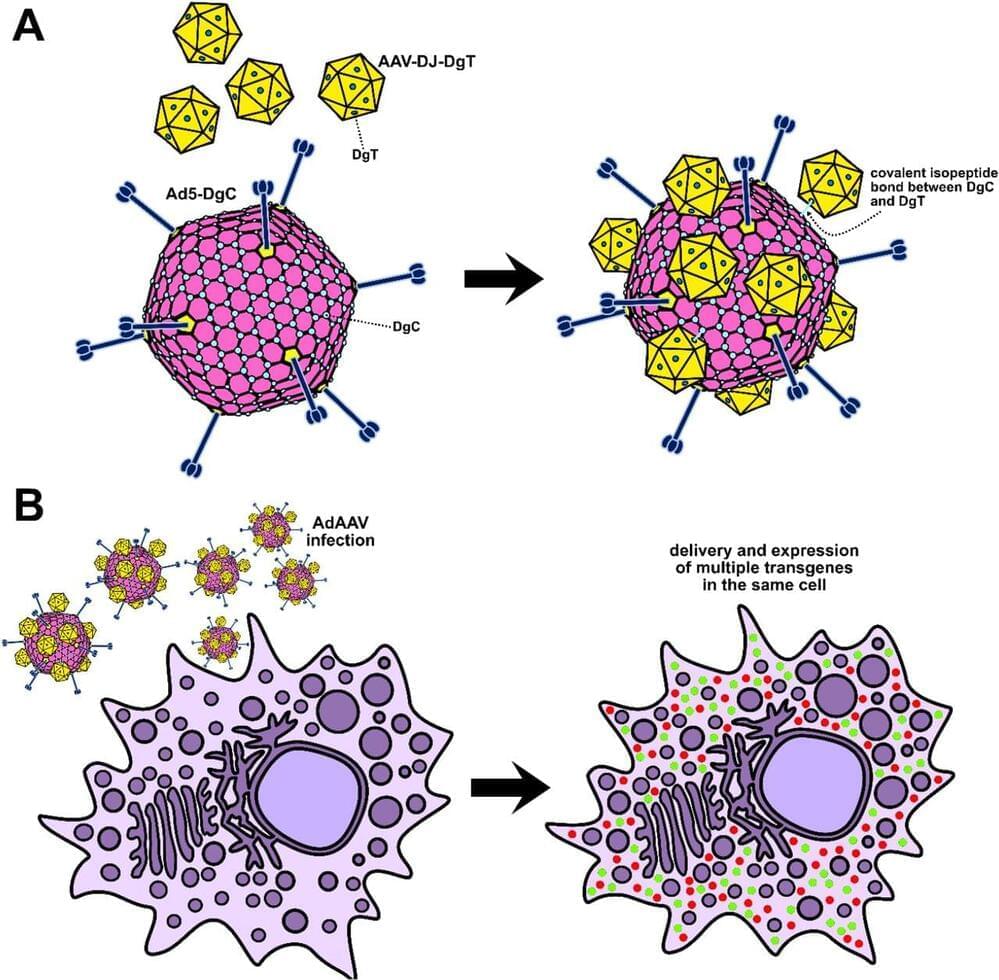

Adeno-associated virus (AAV) has found immense success as a delivery system for gene therapy, yet the small 4.7 kb packaging capacity of AAV sharply limits the scope of its application. In addition, high doses of AAV are frequently required to facilitate therapeutic effects, leading to acute toxicity issues. While dual and triple AAV approaches have been developed to mitigate the packaging capacity problem, these necessitate even higher doses to ensure that co-infection occurs at sufficient frequency. To address these challenges, we herein describe a novel delivery system consisting of adenovirus (Ad) covalently linked to multiple AAV capsids as a new way of more efficiently co-infecting cells with lower overall amounts of AAVs. We utilize the DogTag-DogCatcher (DgT-DgC) molecular glue system to construct our AdAAVs, and we demonstrate that these hybrid virus complexes achieve enhanced co-transduction of cultured cells. This technology may eventually broaden the utility of AAV gene delivery by providing an alternative to dual or triple AAV that can be employed at lower doses while reaching higher co-transduction efficiency.

Although adeno-associated virus (AAV) gene therapy has shown enormous promise and led to five clinically approved treatments,1–3 it is consistently hampered by the vector’s low DNA packaging capacity of 4.7 kb. Great effort has gone into developing dual AAV systems, which deliver two parts of a therapeutic gene in separate capsids that aim to co-infect the same cells.4–7 Analogous triple AAV systems have also been explored.8,9 Dual and triple AAV systems can recombine their split genes into complete form through mechanisms of DNA trans-splicing, RNA trans-splicing, or protein splicing via split inteins.5,7 However, dual and triple AAVs typically require higher doses to achieve efficient co-transduction of cells, especially when systemic administration is necessary.10 This is expected, since the probability of two or three cargos reaching the same cell should roughly equal the probability of a single cargo reaching the cell, squared or cubed, respectively.
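The scaling argument in the preceding paragraph can be made concrete with a short calculation. This is only a sketch under the simplifying assumption that each capsid reaches a given cell independently and with the same probability; the function name and the example probabilities are hypothetical and are not taken from the paper.

```python
def co_transduction_probability(p_single: float, n_vectors: int) -> float:
    """Probability that all n_vectors cargos reach the same cell, assuming
    each arrives independently with the same probability p_single."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if n_vectors < 1:
        raise ValueError("n_vectors must be at least 1")
    return p_single ** n_vectors

# Illustrative single-vector transduction probabilities (assumed values).
for p in (0.1, 0.5, 0.9):
    dual = co_transduction_probability(p, 2)
    triple = co_transduction_probability(p, 3)
    print(f"single={p:.2f}  dual={dual:.3f}  triple={triple:.3f}")
```

Under this assumption, a single-vector probability of 0.5 gives 0.25 for a dual system and 0.125 for a triple system, which is why split-vector approaches tend to need higher doses to reach the same fraction of cells.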

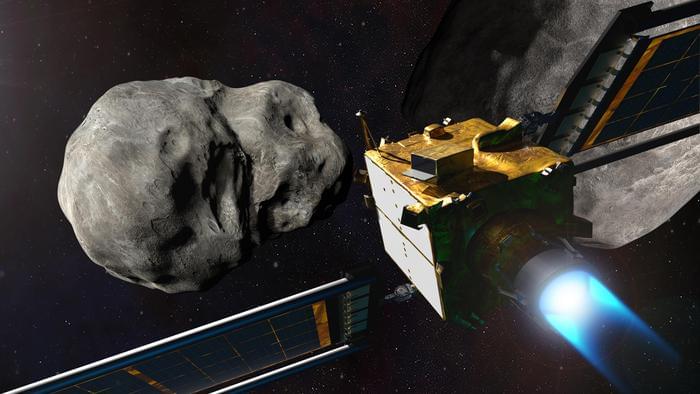

What did the impact of NASA’s Double Asteroid Redirection Test (DART) spacecraft on the asteroid moon Dimorphos teach astronomers about altering the trajectory of asteroids and about asteroids’ formation and evolution? This is what a recent study published in The Planetary Science Journal hopes to address, as an international team of researchers investigated the potential geological changes made to Dimorphos, which orbits its parent asteroid, Didymos, when DART impacted the former in September 2022. This study could help scientists better understand the formation and evolution of asteroids throughout, and potentially beyond, the solar system, which could also hold implications for the early history of the solar system.

“For the most part, our original pre-impact predictions about how DART would change the way Didymos and its moon move in space were correct,” said Dr. Derek Richardson, who is a professor of astronomy at the University of Maryland, a DART investigation working group lead, and lead author on the study. “But there are some unexpected findings that help provide a better picture of how asteroids and other small bodies form and evolve over time.”

For the study, the researchers compared post-impact data from DART’s collision with Dimorphos against predictions made prior to the impact. The results could help in planning the European Space Agency’s upcoming Hera spacecraft mission to Didymos, which is slated to launch in October 2024 and arrive at Didymos in December 2026. In the end, the researchers found that the impact altered not only Dimorphos’ orbital parameters but also its physical shape. The small asteroid moon became elongated towards its poles, whereas its poles were flattened prior to impact. This indicates varying formation and evolutionary processes regarding its geologic composition.