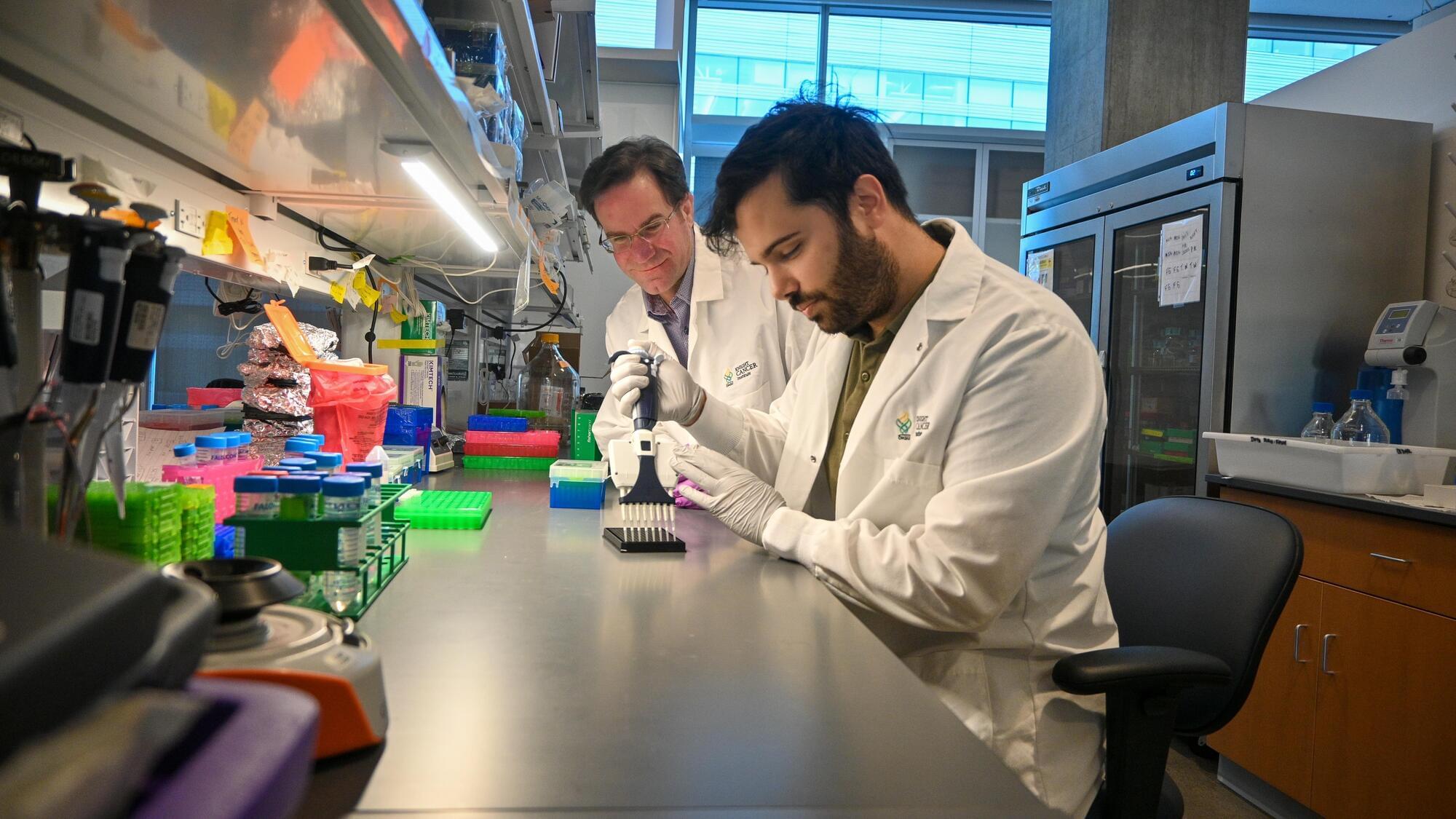

A new test called PAC-MANN is designed to detect signs of pancreatic cancer in blood. It’s still in development, but the hope is that it will help catch the deadly cancer early.

IN A NUTSHELL 🌟 Coherent Corp. unveils a powerful new laser to accelerate the production of high-temperature superconducting tape. ⚡ The LEAP 600C laser utilizes Pulsed Laser Deposition, offering twice the power and longer maintenance intervals. 🔬 HTS tape is essential for fusion energy and various technologies, including MRI machines and power grids. 🌍 This

“Historically, this has been a very challenging problem. People don’t know where to start,” said senior author Megan Dennis, associate director of genomics at the UC Davis Genome Center and associate professor in the Department of Biochemistry and Molecular Medicine and MIND Institute at the University of California, Davis.

In 2022, Dennis was a co-author on a paper describing the first sequence of a complete human genome, known as the ‘telomere to telomere’ reference genome. This reference genome includes the difficult regions that had been left out of the first draft published in 2001 and is now being used to make new discoveries.

Dennis and colleagues used the telomere-to-telomere human genome to identify duplicated genes. Then, they filtered those for genes that are: expressed in the brain; found in all humans, based on sequences from the 1000 Genomes Project; and conserved, meaning that they did not show much variation among individuals.

This yielded about 250 candidate gene families. Of these, they picked some for further study in an animal model, the zebrafish. By both deleting genes and introducing human-duplicated genes into zebrafish, they showed that at least two of these genes might contribute to features of the human brain: one called GPR89B led to slightly bigger brain size, and another, FRMPD2B, led to altered synapse signaling.

“It’s pretty cool to think that you can use fish to test a human brain trait,” Dennis said.

The dataset in the Cell paper is intended to be a resource for the scientific community, Dennis said. It should make it easier to screen duplicated regions for mutations, such as those related to language deficits or autism, that have been missed in previous genome-wide screening.

“It opens up new areas,” Dennis said.

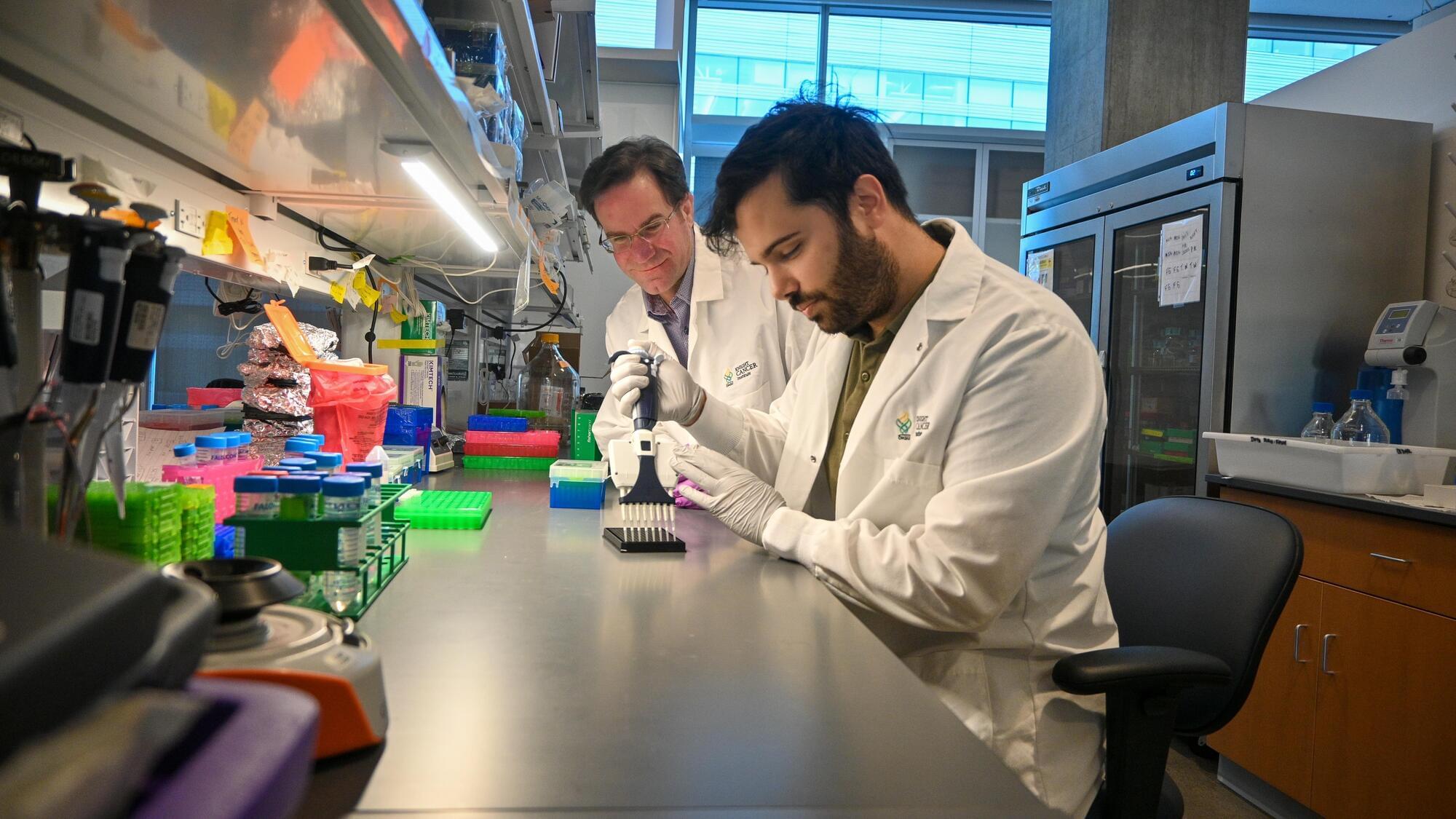

Over the last five decades, progress in neural recording techniques has allowed the number of simultaneously recorded neurons to double approximately every 7 years, mimicking Moore’s law. Following Stevenson and Kording (2011), this site aims to keep track of these advances in electrophysiology. If you notice a missing data point, email me with a reference and the number of neurons recorded.
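To make the doubling claim concrete, here is a minimal sketch of the implied exponential growth, assuming a constant 7-year doubling time. The function names and the starting count are illustrative and not taken from the site or from Stevenson and Kording (2011).

```python
def growth_factor(years_elapsed: float, doubling_time: float = 7.0) -> float:
    """Multiplicative growth after `years_elapsed`, assuming a fixed doubling time."""
    return 2 ** (years_elapsed / doubling_time)


def projected_neurons(n0: float, years_elapsed: float, doubling_time: float = 7.0) -> float:
    """Projected count of simultaneously recorded neurons.

    `n0` is a hypothetical starting count, used only for illustration.
    """
    return n0 * growth_factor(years_elapsed, doubling_time)


if __name__ == "__main__":
    # Five decades at one doubling per 7 years is about 7.1 doublings.
    print(f"Growth over 50 years: {growth_factor(50):.0f}x")
    # A hypothetical starting point of 10 neurons, projected 14 years ahead.
    print(f"10 neurons after 14 years: {projected_neurons(10, 14):.0f}")
```

Under this assumption, five decades correspond to roughly a 140-fold increase. Real data points scatter around the trend, so this is a rule of thumb rather than a prediction.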

OpenAI recently told Axios that their AI tool ChatGPT handles over 2.5 billion user instructions every single day. That’s the equivalent of about 1.7 million instructions per minute or 29,000 per second.
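The per-minute and per-second figures follow from simple unit conversion. The sketch below reproduces the arithmetic, taking the reported daily total at face value and assuming an even spread across the day. The variable names are illustrative.

```python
# Reported daily volume, taken at face value from the text above.
PER_DAY = 2_500_000_000

MINUTES_PER_DAY = 24 * 60
SECONDS_PER_DAY = MINUTES_PER_DAY * 60

# Average rates, assuming traffic is spread evenly over the day.
per_minute = PER_DAY / MINUTES_PER_DAY
per_second = PER_DAY / SECONDS_PER_DAY

if __name__ == "__main__":
    print(f"{per_minute:,.0f} per minute")
    print(f"{per_second:,.0f} per second")
```

These are averages only. Actual traffic presumably peaks well above them at busy times of day.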

This is a sharp increase from December 2024, when ChatGPT was handling about 1 billion messages per day. Having launched in November 2022, it’s become one of the fastest-growing consumer apps of all time.

Extrachromosomal DNA in cancer.

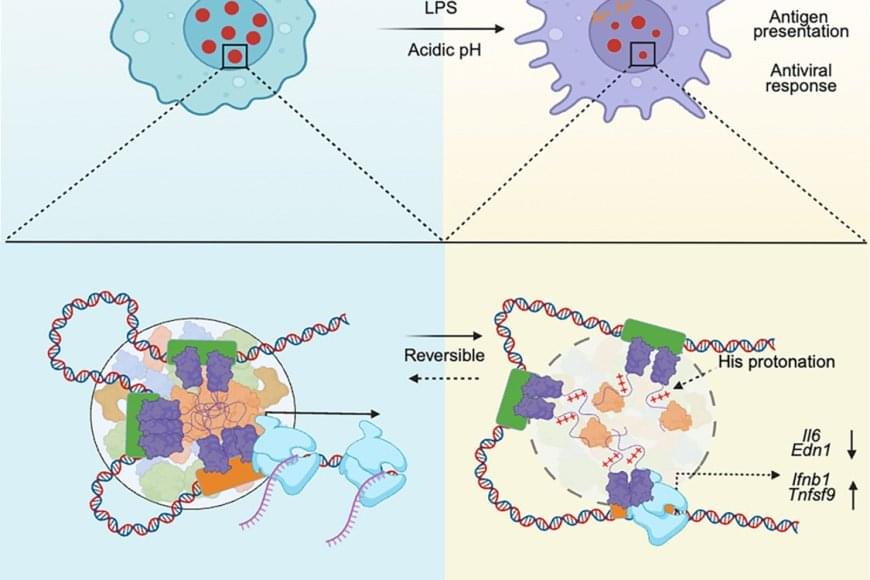

This review discusses open questions on the evolutionary role of extrachromosomal DNA (ecDNA) in tumor development, including tumorigenesis and metastatic seeding.

The authors discuss the mutational landscape on ecDNA, the dynamic ecDNA genotype–phenotype map, the structural evolution of ecDNA, and how knowledge of tissue-specific ecDNA evolutionary paths can be leveraged to deliver more effective clinical treatment.

They also describe how evolutionary theoretical modeling will be instrumental in advancing new research in the field, and explore how modeling has contributed to our understanding of the evolutionary principles governing ecDNA dynamics.

https://www.cell.com/trends/cancer/fulltext/S2405-8033(25)00146-3 https://sciencemission.com/Extrachromosomal-DNA

Cancers are complex, diverse, and elusive, with extrachromosomal DNA (ecDNA) recently emerging as a crucial player in driving the evolution of about 20% of all tumors. In this review we discuss open questions concerning the evolutionary role of ecDNA in tumor development, including tumorigenesis and metastatic seeding, the mutational landscape on ecDNA, the dynamic ecDNA genotype–phenotype map, the structural evolution of ecDNA, and how knowledge of tissue-specific ecDNA evolutionary paths can be leveraged to deliver more effective clinical treatment. Looking forward, evolutionary theoretical modeling will be instrumental in advancing new research in the field, and we explore how modeling has contributed to our understanding of the evolutionary principles governing ecDNA dynamics.

Completed in 2003, the Human Genome Project gave us the first sequence of the human genome, albeit based on DNA from a small handful of people. Building upon its success, the 1000 Genomes Project was conceived in 2007. The project began with the ambitious aim of sequencing 1,000 human genomes and exceeded it, publishing results gleaned from over 2,500 individuals of varying ancestries in 2015.

Explore the groundbreaking world of 3D bioprinting in regenerative medicine, where custom organs printed layer-by-layer from human cells are transforming transplantation. In this video, we uncover the latest advances in bioprinting technology, from biocompatible bioinks to vascularized tissue scaffolds that mimic natural organ architecture.

Dive into the science behind printing life as we showcase flagship projects: a beating mini heart engineered with human cardiomyocytes; 3D-printed liver organoids that perform metabolic functions; and personalized kidney scaffolds seeded with patient-derived stem cells. Learn how bioprinted skin grafts with integrated blood vessels accelerate wound healing and reduce scarring, and discover innovations in printing complex structures like pancreas and lung tissue.

We break down key techniques—extrusion-based bioprinting, stereolithographic printing, and sacrificial ink methods—that enable high-resolution, cell-friendly constructs. Our experts explain challenges in tissue vascularization, bioink formulation, and regulatory pathways for clinical use. Gain insights into clinical trials driving the future of organ transplants without donor shortages.

Whether you’re a biotech researcher or a tech enthusiast, this video offers insights and case studies. Don’t miss this cutting-edge guide to 3D bioprinted organs and tissue engineering.

#techforgood #futureofmedicine #aiinhealthcare #medicalai #bioprinting #tissueengineering #explainervideo #scienceexplained

Exposure to endocrine-disrupting chemicals in early life, including during gestation and infancy, results in a higher preference for sugary and fatty foods later in life, according to an animal study being presented Sunday at ENDO 2025, the Endocrine Society’s annual meeting in San Francisco, Calif.

Endocrine-disrupting chemicals are substances in the environment (air, soil or water supply), food sources, personal care products and manufactured products that interfere with the normal function of the body’s endocrine system. To determine if early-life exposure to these chemicals affects eating behaviors and preferences, researchers from the University of Texas at Austin conducted a study of 15 male and 15 female rats exposed to a common mixture of these chemicals during gestation or infancy.

“Our research indicates that endocrine-disrupting chemicals can physically alter the brain’s pathways that control reward preference and eating behavior. These results may partially explain increasing rates of obesity around the world,” said Emily N. Hilz, Ph.D., a postdoctoral research fellow at the University of Texas at Austin in Austin, Texas.