After forty years, the creator of scar theory has observed the phenomenon in real time.

Quantum scarring is a phenomenon in which traveling electrons end up following the same repeating path.

Scars of Chaos: Visualizing Mysteries in Graphene Quantum Dots

In chaotic quantum systems, wavefunction probabilities cluster along the paths of unstable periodic orbits of their classical counterparts. These quantum scars, while predicted, have remained elusive to direct observation until now.

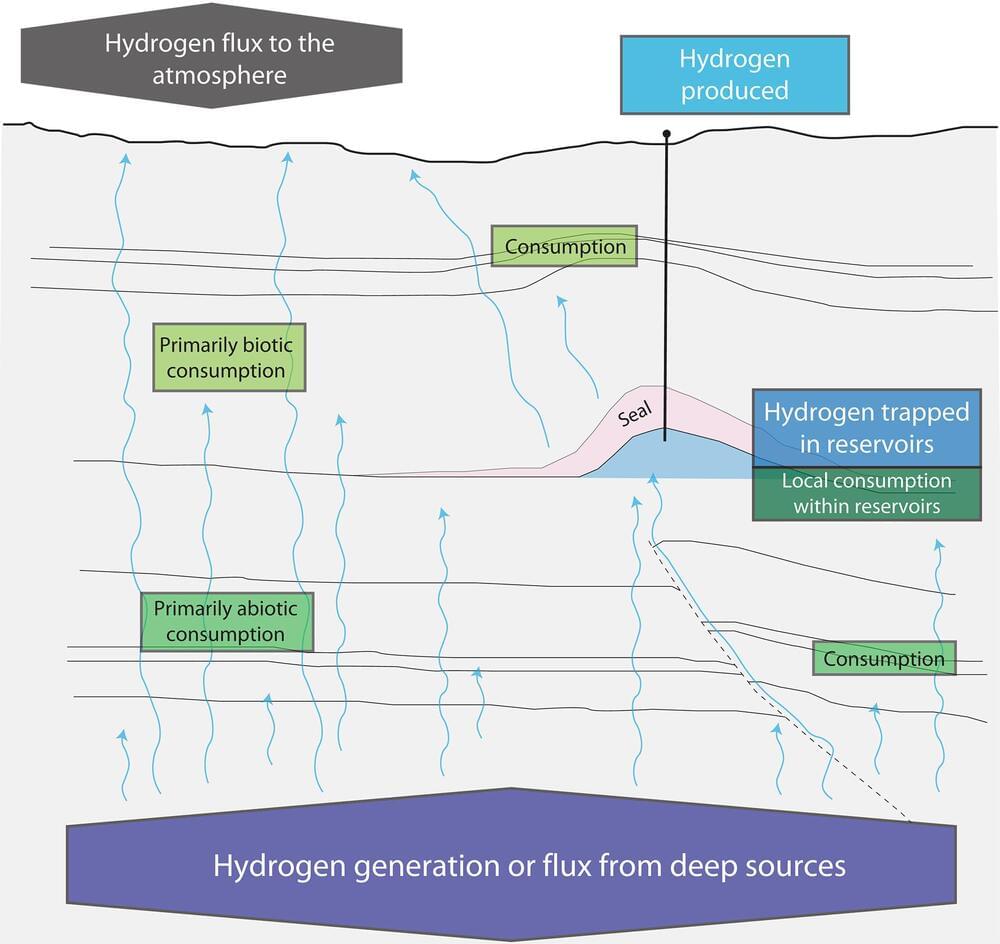

Using an innovative combination of graphene quantum dot (GQD) fabrication and advanced wavefunction mapping via scanning tunneling microscopy, researchers captured stunning images of quantum scars. Within stadium-shaped GQDs, they observed striking lemniscate (∞-shaped) and streak-like probability patterns. These features recur at equal energy intervals, aligning with theoretical predictions for relativistic quantum scars, a fascinating blend of quantum mechanics and relativity.

The researchers further confirmed that these patterns are connected to two specific unstable periodic orbits within the GQD, bridging the chaotic motion of classical systems with the quantum world. Beyond providing the first visual proof of quantum scarring, this work lays the foundation for exploring other exotic scar phenomena, such as perturbation-induced scars, chiral scars, and antiscarring.

This sets the stage for new discoveries in quantum mechanics, chaos theory, and materials science, with potential applications ranging from quantum technologies to our understanding of fundamental physical laws.
