Researchers at the Universitat Autònoma de Barcelona (UAB) have created a new type of magnetic state known as a magneto-ionic vortex, or “vortion.” Their findings, published in Nature Communications, demonstrate an unprecedented level of control over magnetic properties at the nanoscale at room temperature. This achievement could pave the way for next-generation magnetic technologies.

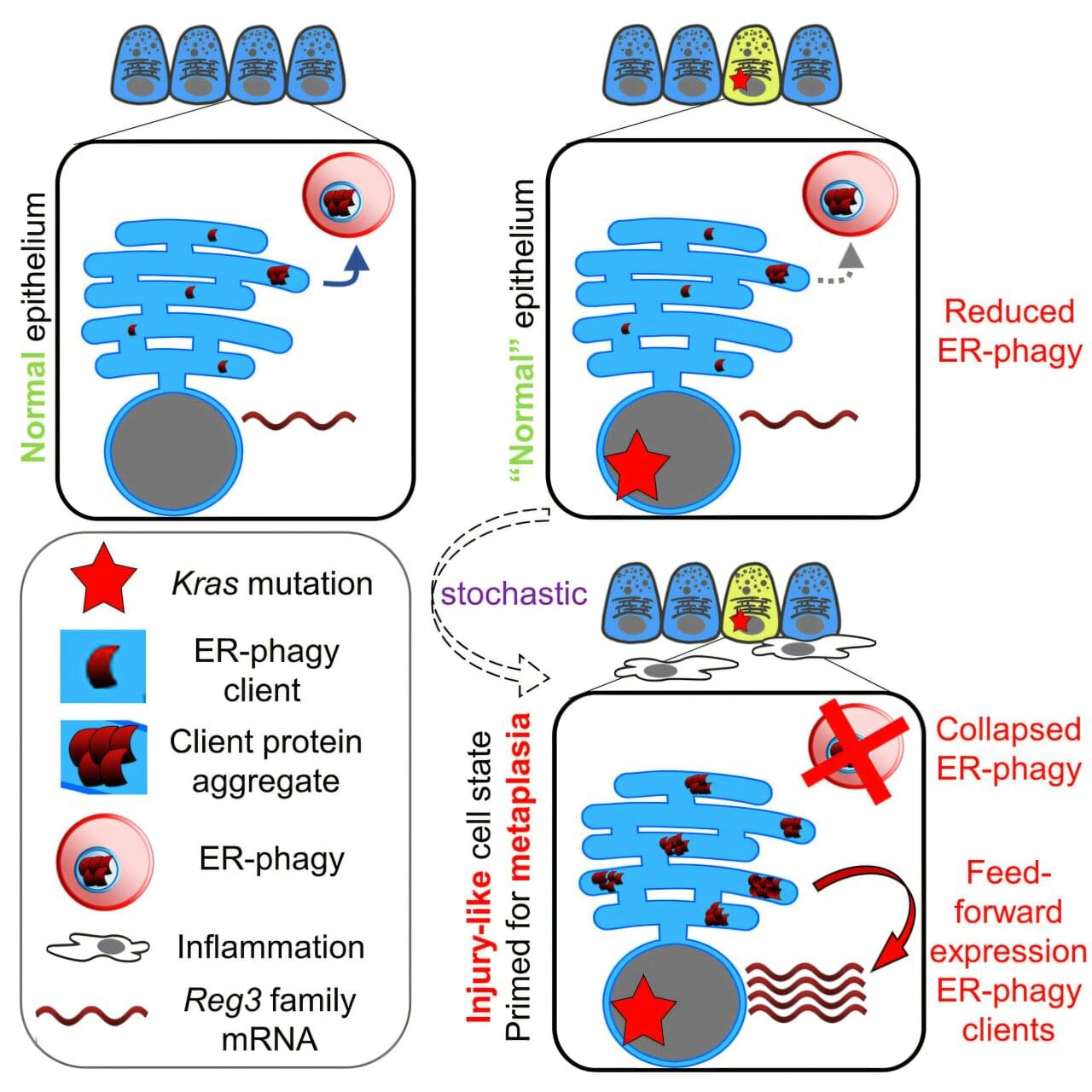

As Big Data continues to grow, the energy demands of information technologies have risen sharply. In most systems, data is stored using electric currents, a process that generates excess heat and wastes energy. A more efficient approach is to control magnetic memory with voltage rather than current. Magneto-ionic materials make this possible: their magnetic properties can be adjusted by inserting or removing ions through changes in voltage polarity. Until now, research in this field has focused mainly on continuous films rather than on the nanoscale “bits” that are vital for dense data storage.

At very small scales, unique magnetic behaviors can appear that are not seen in larger systems. One example is the magnetic vortex, a tiny whirlpool-like magnetic pattern. These structures play an important role in modern magnetic data recording and also have biomedical applications. However, once a vortex state is established in a material, it is usually very difficult to modify, or doing so requires significant amounts of energy.