An international study published in Communications Earth & Environment has advanced earthquake simulations to better anticipate the rupture process of large earthquakes.

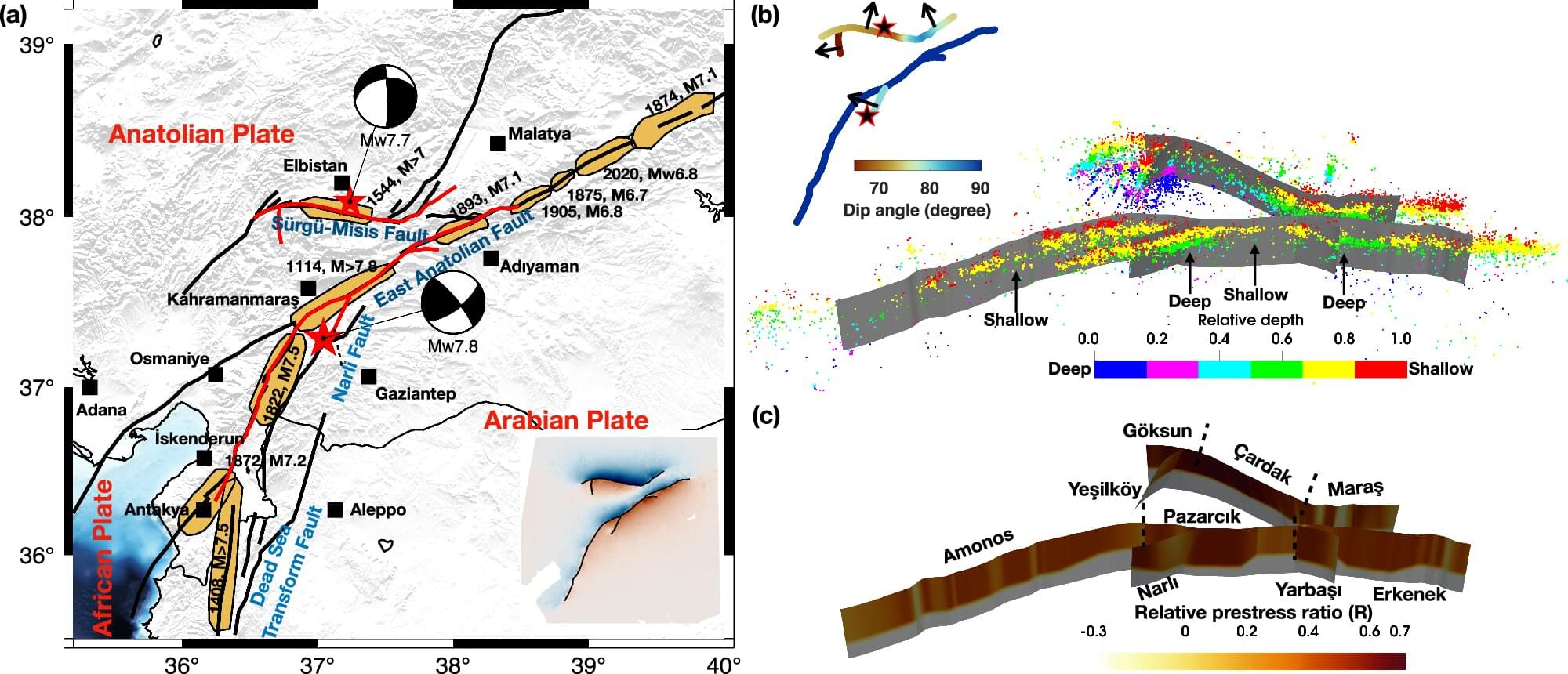

Using data from the February 2023 Turkey earthquake, the scientists developed a detailed 3D dynamic model that provides a more accurate understanding of the strong shaking during this earthquake and, in turn, information for future seismic hazard assessments. The research was led by King Abdullah University of Science and Technology (KAUST) Professor Martin Mai and scientist Bo Li.

The Turkey earthquake was responsible for the deaths of tens of thousands of people. It was marked by a doublet: two major earthquakes separated by a short time. The first rupture fractured a stretch of the fault approximately 350 km long, breaking different sections in succession. Just hours later, a second massive rupture followed, amplifying the destruction. Doublets do not show typical aftershock behavior and are challenging to describe mathematically.