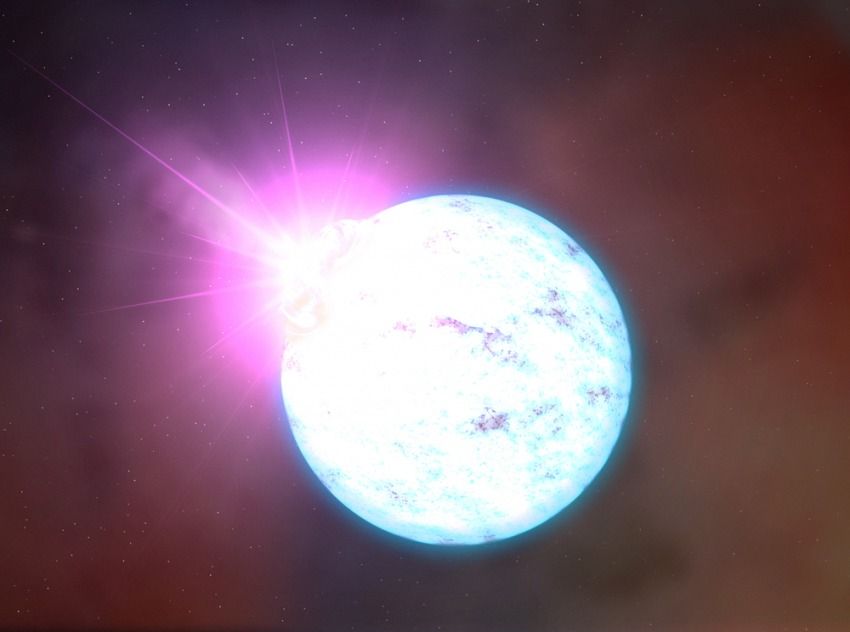

A trio of researchers affiliated with several institutions in the U.S. and Canada has found evidence that the nuclear material beneath the surface of neutron stars may be the strongest material in the universe. In their paper published in the journal Physical Review Letters, M. E. Caplan, A. Schneider, and C. J. Horowitz describe their neutron star simulation and what it showed.

Prior research has shown that when massive stars reach the end of their lives, they explode and their cores collapse into a mass of neutrons; hence the name neutron star. The collapse sheds a burst of neutrinos and leaves the neutron star extremely densely packed. Prior research has also found evidence suggesting that the surface of such stars is so dense that the material there would be incredibly strong. In this new effort, the researchers report evidence suggesting that the material just below the surface is even stronger.

Astrophysicists have theorized that as a neutron star settles into its new configuration, densely packed neutrons are pushed and pulled in different ways, resulting in the formation of various shapes below the surface. Many of the theorized shapes are named after the types of pasta they resemble: gnocchi, for example, or spaghetti or lasagna. Caplan, Schneider and Horowitz wondered about the density of these formations: would they be denser, and thus stronger, than even the material in the crust? To find out, they ran computer simulations.