O.o.

How does the universe work? It appears that a new AI could help find the answer as it was able to work well beyond the parameters set by its developers.

According to the Kardashev Scale, a Type III Civilization should be able to harness the power of an entire galaxy… Humanity isn’t quite there yet, but what will our lives be like if we ever do become an advanced, Type III, intergalactic species? In this video, Unveiled looks far into the future, to see how far humankind could possibly go as we continue to explore and understand the universe around us…

This is Unveiled, giving you incredible answers to extraordinary questions!

Find more amazing videos for your curiosity here:

What If There’s A Mirror Universe — https://www.youtube.com/watch?v=dvs-H5vU3NY

What If Andromeda Collides With the Milky Way? — https://www.youtube.com/watch?v=X5-fSADihd4


LAUSANNE, Switzerland — Fifty years ago this month, NASA’s Apollo 11 mission transformed the idea of putting people on the moon from science fiction to historical fact. Not much has changed on the moon since Apollo, but if the visions floated by leading space scientists from the U.S., Europe, Russia and China come to pass, your grandchildren might be firing up lunar barbecues in 2069.

“Definitely in 50 years, there will be more tourism on the moon,” Anatoli Petrukovich, director of the Russian Academy of Sciences’ Space Research Institute, said here today during the World Conference of Science Journalists. “The moon will just look like a resort, as a backyard for grilling some meat or whatever else.”

Wu Ji, former director general of the Chinese Academy of Sciences’ National Space Science Center, agreed that moon tourism could well be a thing in 2069.

NASA has awarded Carnegie Mellon University (CMU) and Astrobotic a US$5.6 million contract to build a new suitcase-sized robotic lunar rover that could land on the Moon as soon as 2021. One of 12 proposals selected as part of the agency’s Lunar Surface Instrument and Technology Payloads (LSITP) program, the 24-lb (11-kg) MoonRanger rover is designed to operate autonomously on week-long missions within 0.6 mi (1 km) of its lander.

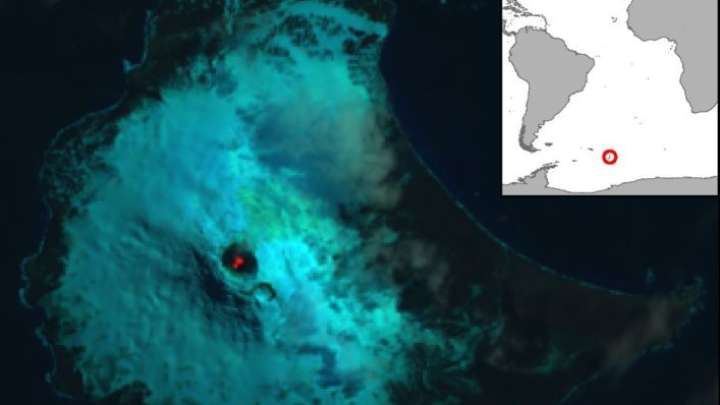

Nestled amongst glaciers in one of the world’s most remote and volcanically active island ranges lies an incredibly rare lake of lava only now confirmed by scientists in the Journal of Volcanology and Geothermal Research.

Located on Saunders Island in the South Sandwich Islands, measuring between 90 and 215 meters (295 and 700 feet) in diameter, and with temperatures soaring above 1,200°C (2,190°F), the lava lake of Mount Michael joins just seven other known features of this nature on Earth. Lava lakes are extremely rare, with even the most persistent ceasing after just a century.

“We are delighted to have discovered such a remarkable geological feature in the British Overseas Territory,” said study author and geologist Alex Burton-Johnson in a statement. “Identifying the lava lake has improved our understanding of the volcanic activity and hazard on this remote island, and tells us more about these rare features, and finally, it has helped us develop techniques to monitor volcanoes from space.”

For the first time, researchers have been able to observe the atmosphere of a planet between the sizes of Earth and Neptune. Gliese 3470 b is a world 96 light-years away, orbiting a star roughly half the size of the Sun. It has 12.6 times the mass of our planet and is slightly smaller than Neptune, which has 17 times the mass of Earth.

As reported in Nature Astronomy, this planet delivered quite the surprise to astronomers. Using the combined power of the Hubble Space Telescope and NASA’s Spitzer infrared observatory, they discovered a clear atmosphere of hydrogen and helium, the main components of stars.

“We expected an atmosphere strongly enriched in heavier elements like oxygen and carbon which are forming abundant water vapor and methane gas, similar to what we see on Neptune,” lead author Björn Benneke, of the University of Montreal, said in a statement. “Instead, we found an atmosphere that is so poor in heavy elements that its composition resembles the hydrogen/helium-rich composition of the Sun.”

Every type of atom in the universe has a unique fingerprint: It only absorbs or emits light at the particular energies that match the allowed orbits of its electrons. That fingerprint enables scientists to identify an atom wherever it is found. A hydrogen atom in outer space absorbs light at the same energies as one on Earth.
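To make the "fingerprint" idea concrete, here is a minimal sketch for hydrogen, the simplest case. It assumes the textbook Rydberg formula, 1/λ = R_H (1/n_low² − 1/n_high²), which the passage above does not mention; the function names and the rounded constants are illustrative choices, not anything taken from the research described.

```python
# Hydrogen's spectral "fingerprint" from the Rydberg formula (an assumed
# textbook model, not something described in the article itself).

RYDBERG_H = 1.0967758e7   # Rydberg constant for hydrogen, in 1/m (rounded)
HC_EV_NM = 1239.841984    # Planck constant times speed of light, in eV*nm


def line_wavelength_nm(n_low: int, n_high: int) -> float:
    """Vacuum wavelength (nm) of the photon for a jump between two levels."""
    if not 0 < n_low < n_high:
        raise ValueError("need 0 < n_low < n_high")
    inverse_wavelength = RYDBERG_H * (1 / n_low**2 - 1 / n_high**2)  # in 1/m
    return 1e9 / inverse_wavelength  # convert metres to nanometres


def photon_energy_ev(wavelength_nm: float) -> float:
    """Photon energy (eV) for a given vacuum wavelength (nm)."""
    return HC_EV_NM / wavelength_nm


if __name__ == "__main__":
    # Balmer series: jumps that end on level 2 (the visible hydrogen lines).
    for n_high in range(3, 7):
        wavelength = line_wavelength_nm(2, n_high)
        energy = photon_energy_ev(wavelength)
        print(f"{n_high} -> 2: {wavelength:.1f} nm, {energy:.3f} eV")
```

The first line it prints is the red hydrogen-alpha line near 656 nm. Because the allowed energy levels are the same for every hydrogen atom, the same list of wavelengths applies whether the atom is in a lab or in a distant nebula, apart from shifts caused by the source's motion.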