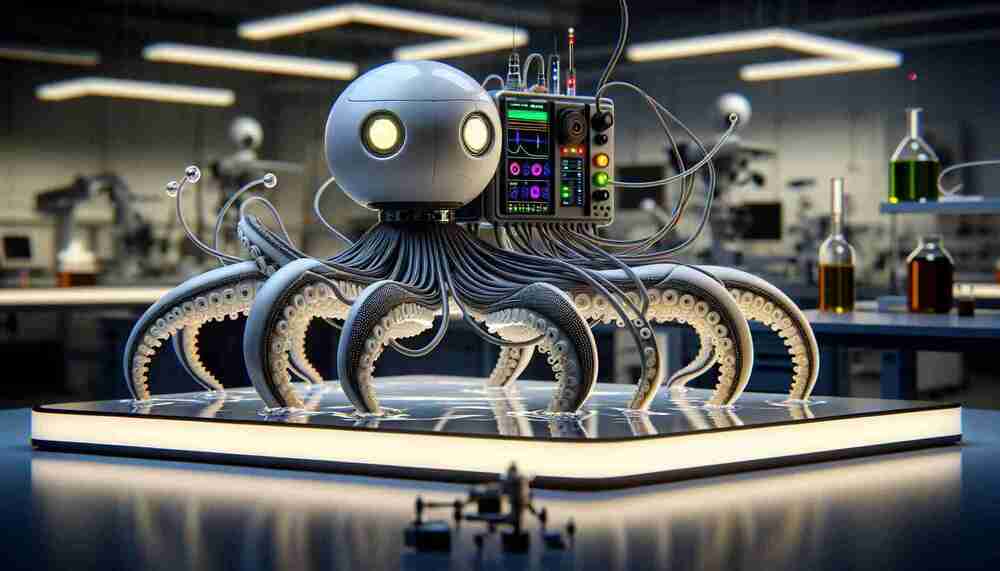

SUMMARY: A soft robot with octopus-inspired sensory and motion capabilities represents significant progress in robotics, offering nimbleness and adaptability in uncertain environments.

Robotics engineers have developed a soft robot that closely mimics the dynamic movements and sensory abilities of an octopus. The work, an international collaboration involving Beihang University, Tsinghua University, and the National University of Singapore, has the potential to redefine how robots interact with the world around them.

The design of this highly adaptable robot draws on the soft-bodied mechanics of an octopus, enabling smooth, precise movement across a variety of surfaces and environments. The sensorized soft arm, named the electronics-integrated soft octopus arm mimic (E-SOAM), combines elastic materials with liquid metal circuits that remain resilient under extreme deformation.