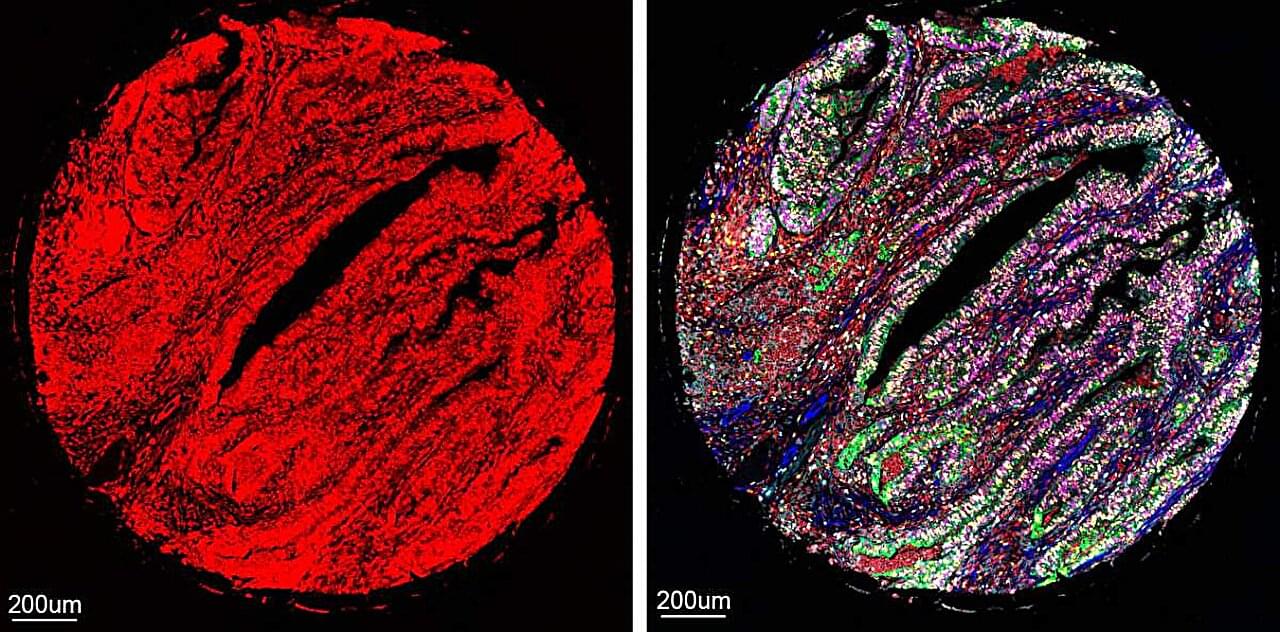

AI systems already work their magic in many areas of biomedical science, helping to solve protein structures, discover hidden patterns in the genome and process massive amounts of biological data. Now, an AI-assisted technology developed at the Weizmann Institute of Science and published in Nature Biotechnology may grant researchers and physicians an unprecedented means of peering deep into the body’s tissues, by making it possible to view more proteins simultaneously in a single tissue sample than ever before.

“To understand how any particular tissue works, it’s crucial to measure lots of its proteins at the same time,” says Dr. Leeat Keren of Weizmann’s Molecular Cell Biology Department, who headed the research team. “This gives us an idea of which cells are present in the tissue and how they communicate and interact with one another.”

Keren explains that this knowledge is vital to the study of disease processes. Cancerous growths, for example, contain various cell types in addition to tumor cells, including healthy cells of the tissue in which the tumor is growing and cells of the immune system. The cellular makeup of a tumor and the way those cell types interact with one another can determine how effective a therapy will be, and can also be used to predict which patients have a better prognosis and which are likely to develop metastases. Such findings, in turn, can lead to improved personalized treatments.