“We’re so cooked.”

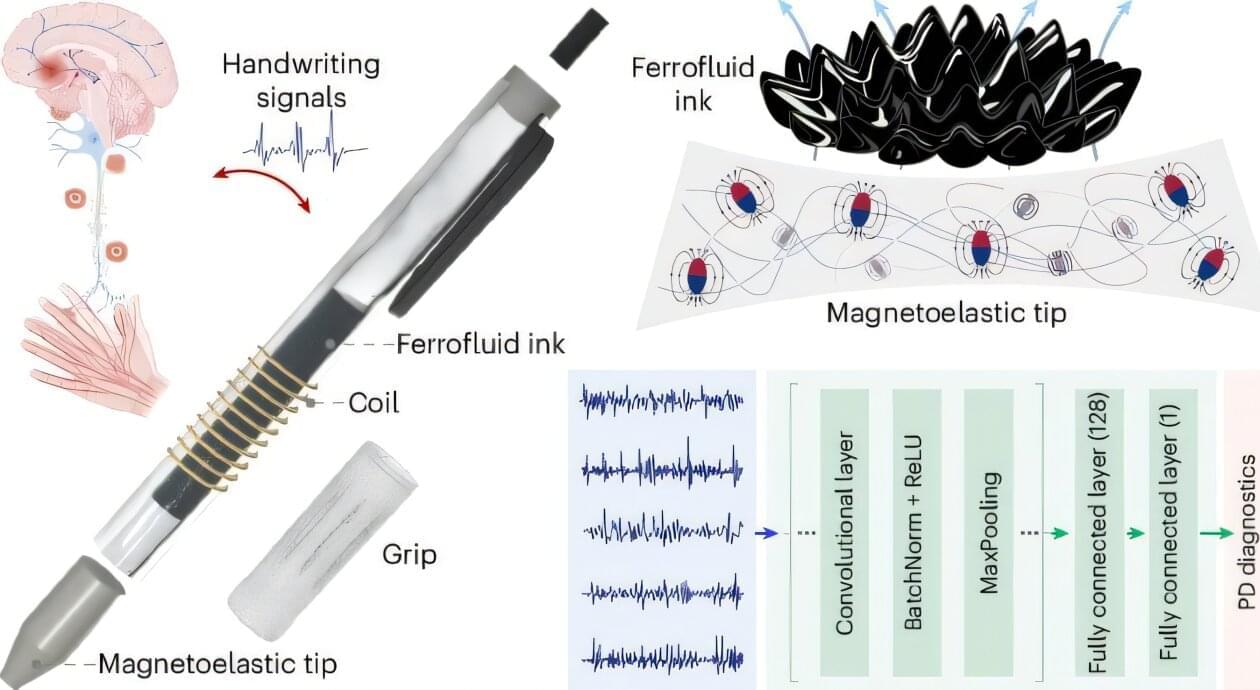

A team at the University of California, Los Angeles has developed a low-cost diagnostic pen that converts handwriting into electrical signals for early detection of Parkinson’s disease, achieving 96.22% accuracy in a pilot study.

Parkinson’s disease impairs the motor system, causing tremors, stiffness, and slowed movements that degrade fine motor functions such as handwriting. Clinical diagnosis today relies largely on subjective observation, which is prone to inconsistency and is often inaccessible in low-resource settings. Biomarker-based diagnostics, while objective, remain constrained by cost and technical complexity.

In the study, “Neural network-assisted personalized handwriting analysis for Parkinson’s disease diagnostics,” published in Nature Chemical Engineering, researchers engineered a diagnostic pen to capture real-time motor signals during handwriting and convert them into quantifiable electrical outputs for disease classification.

What if accessing knowledge, which used to require hours of analyzing handwritten scrolls or books, could be done in mere moments?

Throughout history, the way humans acquire knowledge has undergone several revolutions. The birth of writing and books transformed learning, allowing ideas to be preserved and shared across generations. Then came the Internet, putting vast amounts of information at the fingertips of billions of people.

Today, we stand at another shift: the age of AI tools, where AI doesn’t just give us answers—it provides reliable, tailored responses in seconds. We no longer need to gather and evaluate the correct information for our problems. If knowledge is now a tool everyone can hold, the real revolution starts when we use this superpower to solve problems and improve the world.

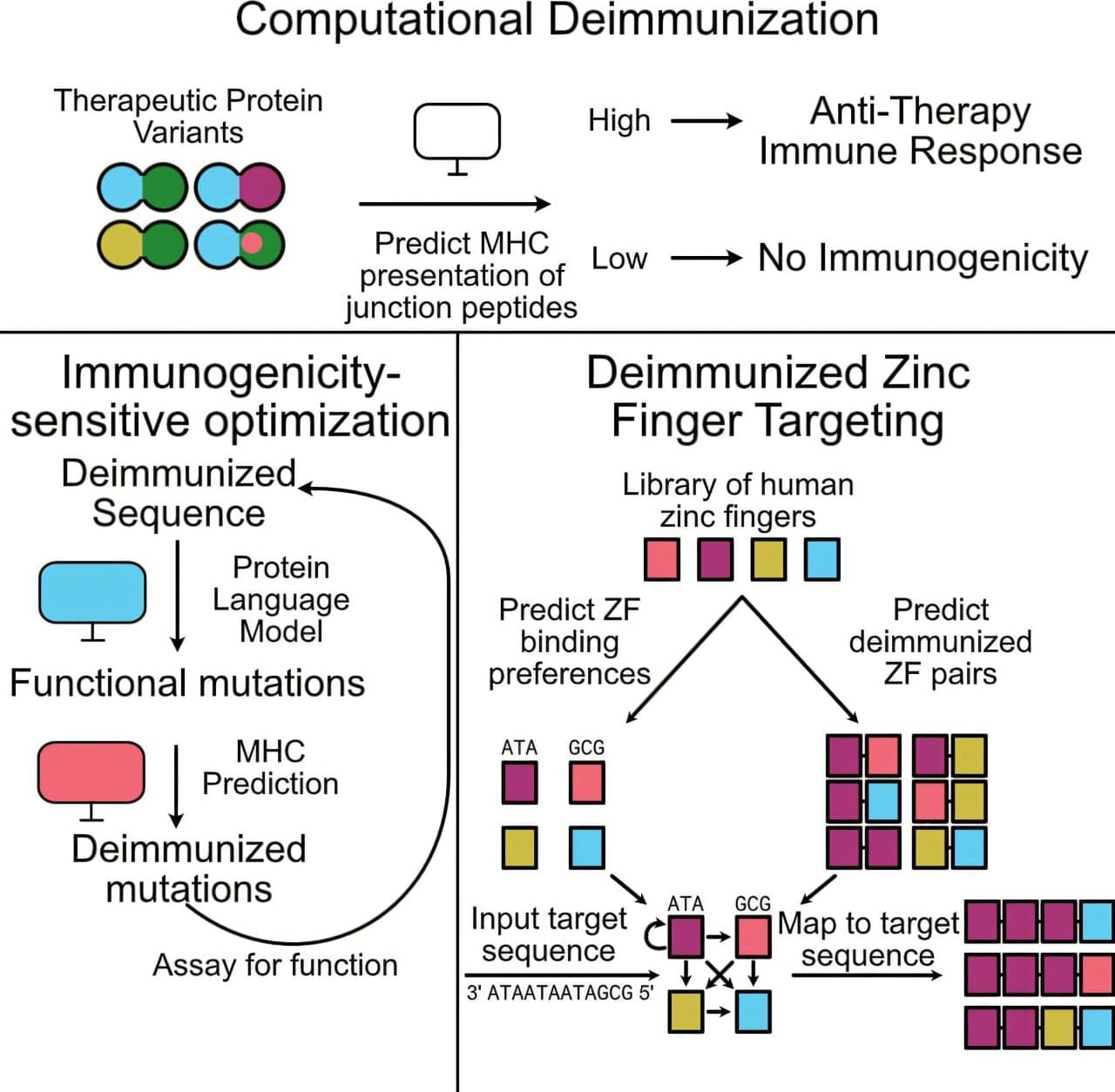

Machine learning models have seeped into the fabric of our lives, from curating playlists to explaining hard concepts in a few seconds. Beyond convenience, state-of-the-art algorithms are finding their way into modern medicine as a potentially powerful tool. In one such advance, published in Cell Systems, Stanford researchers are using machine learning to improve the efficacy and safety of targeted cell and gene therapies, potentially by using our own proteins.

Most human diseases occur due to the malfunctioning of proteins in our bodies, either systemically or locally. Naturally, introducing a new therapeutic protein to compensate for the one that is malfunctioning would be ideal.

Although nearly all therapeutic antibodies are either fully human or engineered to look human, a similar approach has yet to make its way to other therapeutic proteins, especially those that operate inside cells, such as those involved in CAR-T and CRISPR-based therapies. These proteins still run the risk of triggering immune responses. To solve this problem, researchers at the Gao Lab have now turned to machine learning models.