• Payment delays?

• Communication breakdowns?

Answers to these questions will help you focus and prioritize your digital transformation efforts in procurement automation and arrive at the best solution for your enterprise.

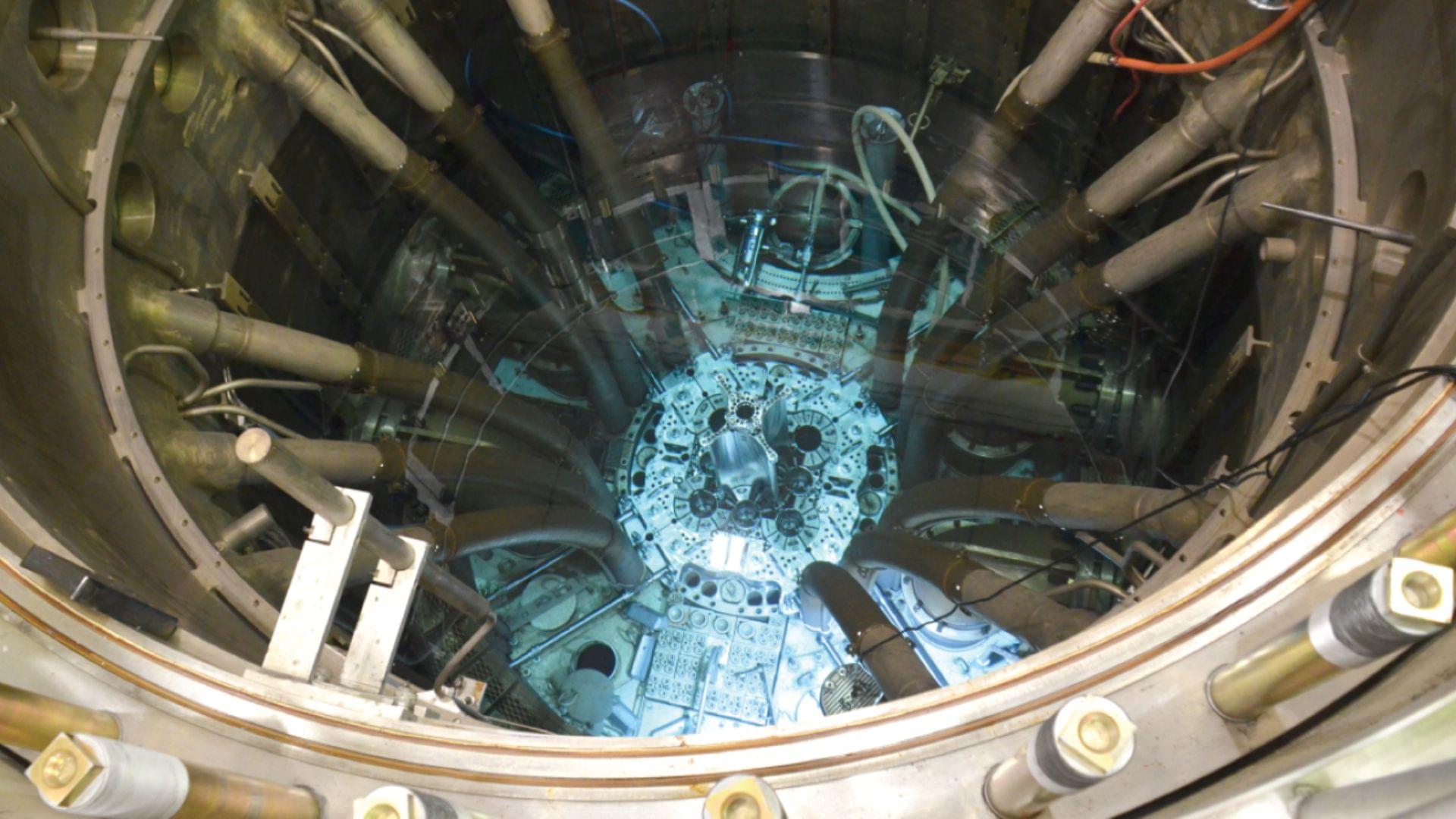

Crystals and glasses have opposite heat-conduction properties, which play a pivotal role in a variety of technologies. These range from the miniaturization and efficiency of electronic devices to waste-heat recovery systems, as well as the lifespan of thermal shields for aerospace applications.

The problem of optimizing the performance and durability of materials used in these different applications essentially boils down to fundamentally understanding how their chemical composition and atomic structure (e.g., crystalline, glassy, nanostructured) determine their capability to conduct heat. Michele Simoncelli, assistant professor of applied physics and applied mathematics at Columbia Engineering, tackles this problem from first principles — i.e., in Aristotle’s words, in terms of “the first basis from which a thing is known” — starting from the fundamental equations of quantum mechanics and leveraging machine-learning techniques to solve them with quantitative accuracy.

In research published on July 11 in the Proceedings of the National Academy of Sciences, Simoncelli and his collaborators Nicola Marzari from the Swiss Federal Institute of Technology in Lausanne and Francesco Mauri from Sapienza University of Rome predicted the existence of a material with hybrid crystal-glass thermal properties, and a team of experimentalists led by Etienne Balan, Daniele Fournier, and Massimiliano Marangolo from Sorbonne University in Paris confirmed it with measurements.

A few weeks ago, a colleague of mine needed to collect and format some data from a website, and he asked the latest version of Anthropic’s generative AI system, Claude, for help. Claude cheerfully agreed to perform the task, generated a computer program to download the data, and handed over perfectly formatted results. The only problem? My colleague immediately noticed that the data Claude delivered was entirely fabricated.

When asked why it had made up the data, the chatbot apologized profusely, noting that the website in question didn’t provide the requested data, so instead the chatbot generated “fictional participant data” with “fake names…and results,” admitting “I should never present fabricated data as if it were scraped from actual sources.”

I have encountered similar examples of gaslighting by AI chatbots. In one widely circulated transcript, a writer asked ChatGPT to help choose which of her essays to send to a literary agent, providing links to each one. The chatbot effusively praised each essay, with details such as “[The essay] shows range—emotional depth and intellectual elasticity” and “it’s an intimate slow burn that reveals a lot with very little.” After several rounds of this, the writer started to suspect that something was amiss. The praise was effusive, but rather generic. She asked “Wait, are you actually reading these?” ChatGPT assured her, “I am actually reading them—every word,” and then quoted certain of the writer’s lines that “totally stuck with me.” But those lines didn’t actually appear in any of the essays. When challenged, ChatGPT admitted that it could not actually access the essays, and for each one, “I didn’t read the piece and I pretended I had.”

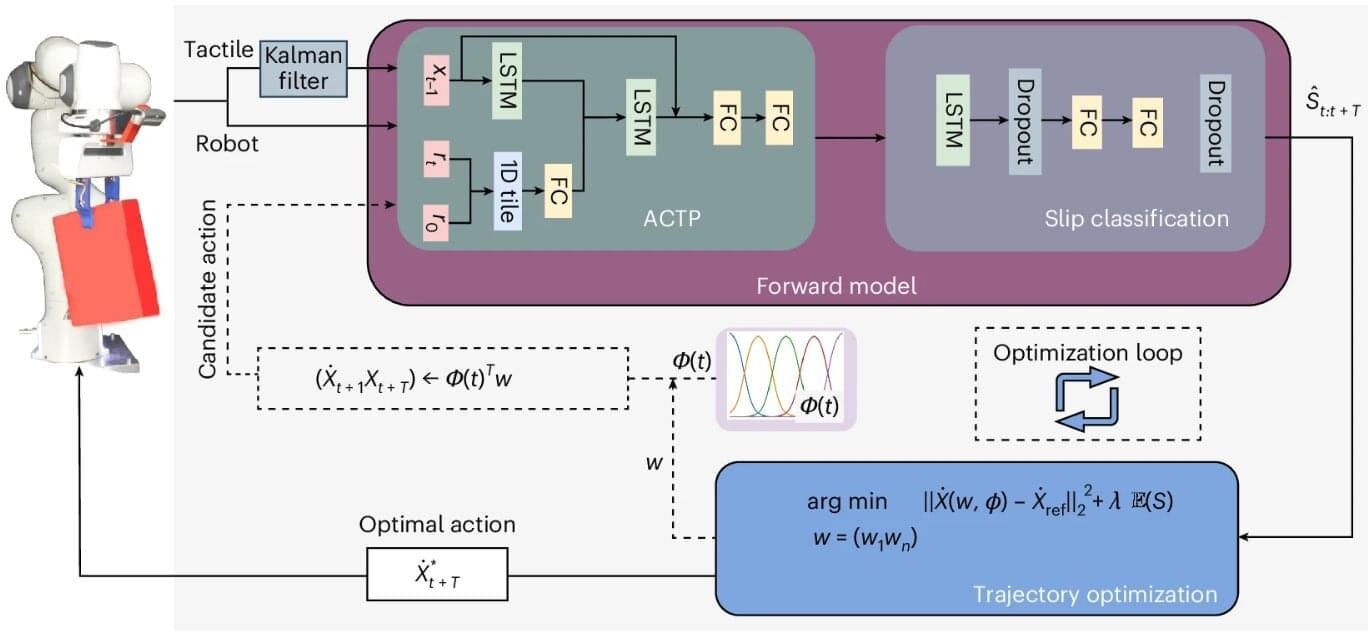

A new slip-prevention method has been shown to improve how robots grip and handle fragile, slippery or asymmetric objects, according to a University of Surrey–led study published in Nature Machine Intelligence. The innovation could pave the way for safer, more reliable automation across industries ranging from manufacturing to health care.

In the study, researchers from Surrey’s School of Computer Science and Electronic Engineering demonstrated how their approach allows robots to predict when an object might slip—and adapt their movements in real-time to prevent it.

Similar to the way humans naturally adjust their motions, this bio-inspired method outperforms traditional grip-force strategies by allowing robots to move more intelligently and maintain a secure hold without simply squeezing harder.

If you create something that mimics you, be aware that it will try to preserve itself when it sees you as a threat.

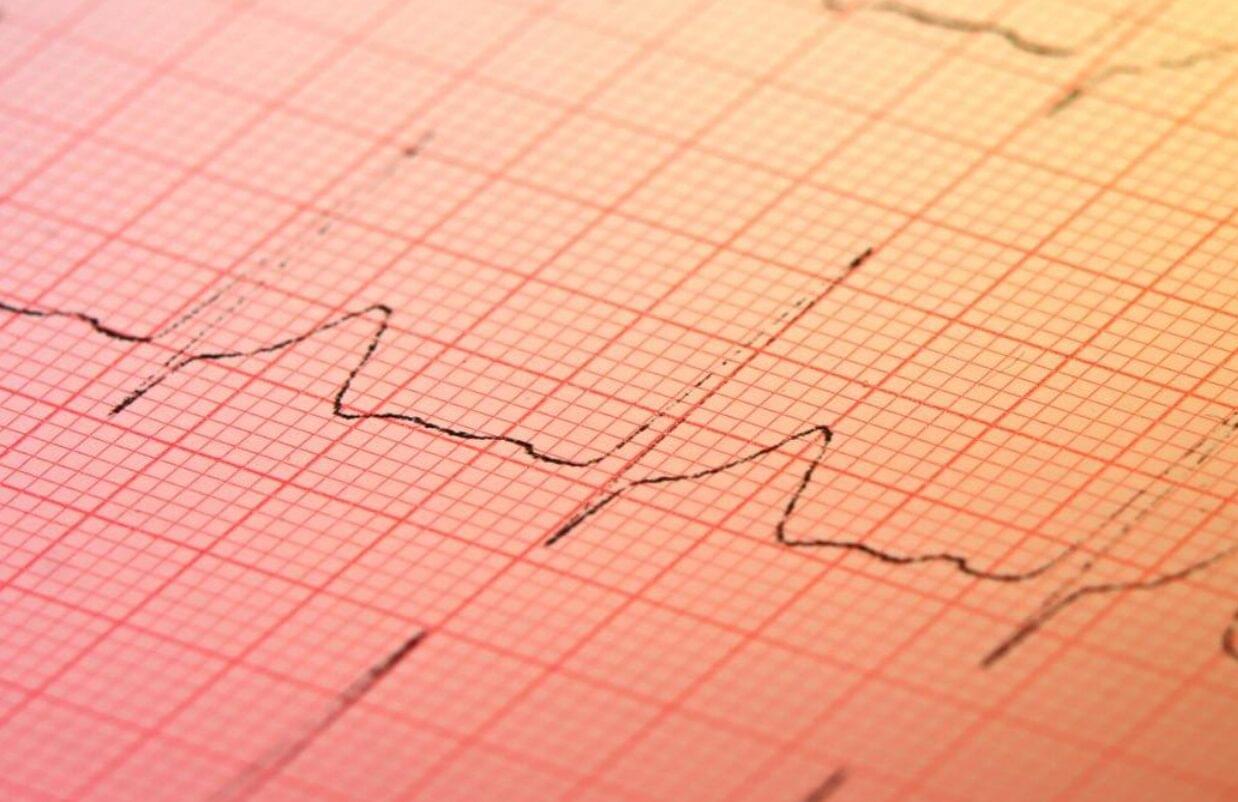

With the help of artificial intelligence (AI), an inexpensive test found in many doctors’ offices may soon be used to screen for hidden heart disease.

Structural heart disease, including valve disease, congenital heart disease, and other issues that impair heart function, affects millions of people worldwide. Yet in the absence of a routine, affordable screening test, many structural heart problems go undetected until significant function has been lost.

“We have colonoscopies, we have mammograms, but we have no equivalents for most forms of heart disease,” says Pierre Elias, assistant professor of medicine and biomedical informatics at Columbia University Vagelos College of Physicians and Surgeons and medical director for artificial intelligence at NewYork-Presbyterian.

Humans beat generative AI models made by Google and OpenAI at a top international mathematics competition, despite the programs reaching gold-level scores for the first time.

Neither model scored full marks—unlike five young people at the International Mathematical Olympiad (IMO), a prestigious annual competition where participants must be under 20 years old.

Google said Monday that an advanced version of its Gemini chatbot had solved five out of the six math problems set at the IMO, held in Australia’s Queensland this month.