People tend to be uncritical of AI advice and AI-generated content, despite the high risk of "hallucinations" as well as deliberate hoaxes. In one case, two people watched a misleading video and set off on a trip based on it.

For Silicon Valley giants, getting ahead in the artificial intelligence race requires more than building the biggest, most capable models; they’re also competing to get third-party developers to build new applications based on their technology.

Now, Meta is teaming up with Amazon’s cloud computing unit, Amazon Web Services, on an initiative designed to do just that.

The program will provide six months of technical support from both companies’ engineers and $200,000 in AWS cloud computing credits each to 30 US startups looking to build AI tools on Meta’s Llama AI model. The partnership is set to be unveiled at AWS Summit in New York City on Wednesday.

Meta has reportedly hired two more high-profile researchers from OpenAI, Jason Wei and Hyung Won Chung, as part of its push to advance toward artificial general intelligence.

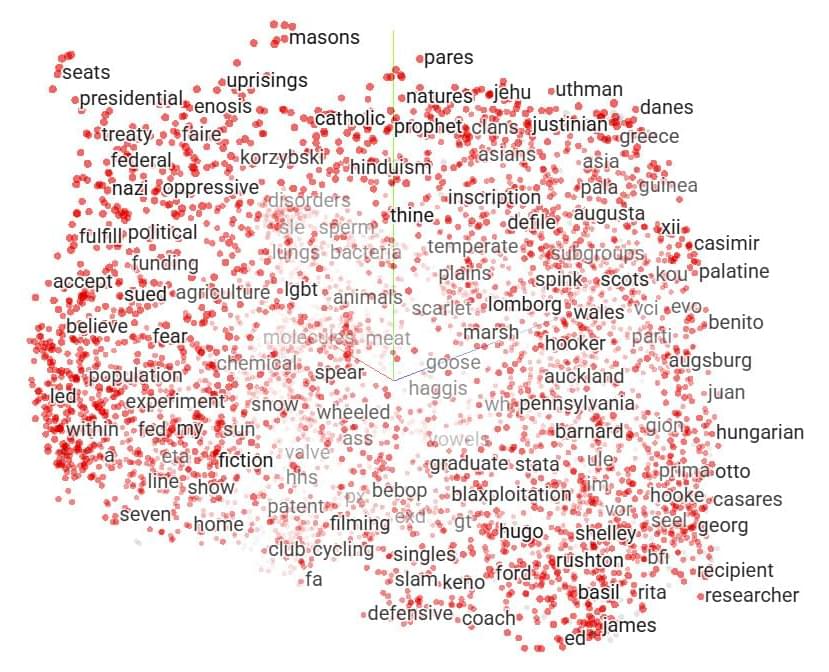

METR recently published a paper on the impact of AI tools on the productivity of open-source developers. It found that when open-source developers used AI tools to complete tasks in codebases they were deeply familiar with, they took longer than on other tasks where they were barred from using AI tools. Interestingly, the developers predicted that AI would make them faster, and they continued to believe it had made them faster even after completing the tasks more slowly than they otherwise would have.

In a step forward for soft robotics and biomedical devices, Rice University engineers have found a new way to substantially boost the strength and durability of silicone-based soft devices without changing the materials themselves. Their study, published in a special issue of Science Advances focused on printed and musculoskeletal robotics, offers a predictive framework that links silicone curing conditions to adhesion strength, enabling dramatic performance improvements in both molded and 3D-printed elastomer components.

Today's robots are stuck: their bodies are usually closed systems that can neither grow, self-repair, nor adapt to their environment. Now, scientists at Columbia University have developed robots that can physically "grow," "heal," and improve themselves by integrating material from their environment or from other robots.

Described in a new study published in Science Advances, this process, called “Robot Metabolism,” enables machines to absorb and reuse parts from other robots or their surroundings.

“True autonomy means robots must not only think for themselves but also physically sustain themselves,” explains Philippe Martin Wyder, lead author and researcher at Columbia Engineering and the University of Washington. “Just as biological life absorbs and integrates resources, these robots grow, adapt, and repair using materials from their environment or from other robots.”