Next-generation networks will deliver major improvements in latency, speed, and localized access to AI.

Full episode with Ilya Sutskever (May 2020): https://www.youtube.com/watch?v=13CZPWmke6A

Clips channel (Lex Clips): https://www.youtube.com/lexclips

Main channel (Lex Fridman): https://www.youtube.com/lexfridman

Podcast website:

https://lexfridman.com/ai

Podcast on Apple Podcasts (iTunes):

https://apple.co/2lwqZIr

Hey all! I’ve recently made a video on how humans could one day become AI, and how this could benefit both individuals and humanity as a whole! If you are interested, please give it a watch!

AI is often cast as the ultimate villain of science fiction. In sci-fi films, artificial intelligence frequently becomes smarter than humanity and turns on us. However, what if people could one day become AI themselves and use this to their advantage? And if so, would people choose to become AI? Here’s why I believe this may be possible, and why people may actually choose to become AI voluntarily, thus blurring the lines between computer science and biology.

Please follow our Instagram at: https://www.instagram.com/the_futurist_tom/

For business inquiries, please contact [email protected]

As impossible as it seems, it won’t be long before artificial intelligence is writing and creating films. As a lead-up to this eventuality, here is a list of just some of the creative endeavors that AI has already accomplished:

• It is now a simple matter for AI to create new paintings after being shown a number of examples in a particular genre. For example, one AI system was given hundreds of samples of 17th-century “Old Master” style paintings and asked to create its own. One of the paintings it produced, titled “Portrait of Edmond de Belamy,” sold for a whopping $432,500 at a Christie’s auction.

• Another AI system was fed hundreds of modern pop songs and came up with its own song, complete with lyrics, named “Blue Jeans and Bloody Tears.”

In 2033, people can be “uploaded” into virtual reality hotels run by 6 tech firms. Cash-strapped Nora lives in Brooklyn and works customer service for the luxurious “Lakeview” digital afterlife. When L.A. party-boy/coder Nathan’s self-driving car crashes, his high-maintenance girlfriend uploads him permanently into Nora’s VR world. Upload is created by Greg Daniels (The Office).

Wireless charging is already a thing (in smartphones, for example), but scientists are working on the next level of this technology that could deliver power over greater distances and to moving objects, such as cars.

Imagine cruising down the road while your electric vehicle gets charged, or having a robot that doesn’t lose battery life while it moves around a factory floor. That’s the sort of potential behind the newly developed technology from a team at Stanford University.

If you’re a long-time ScienceAlert reader, you may remember that the same researchers first demonstrated the technology back in 2017. Now it’s been made more efficient, more powerful, and more practical – so it can hopefully soon be moved out of the lab.

Circa 2019

MIT’s Mini Cheetahs are small quadrupedal robots capable of running, jumping, walking, and flipping.

In a recently published video, the tiny bots can be seen roaming, hopping, and marching around a field and playing with a soccer ball.

They’re not consumer products, but MIT hopes that the Mini Cheetah’s durable and modular design will make it an ideal tool for researchers.

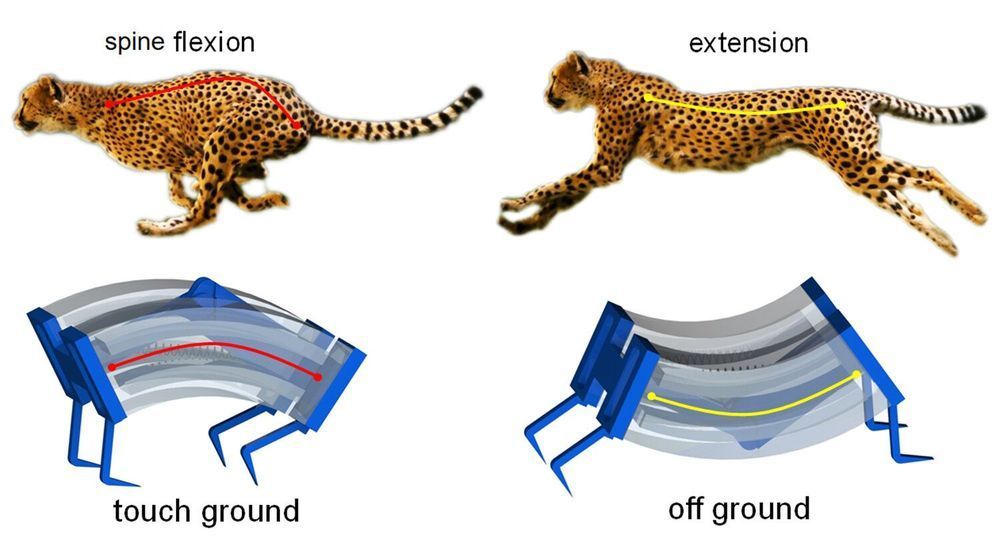

Inspired by the biomechanics of cheetahs, researchers have developed a new type of soft robot that can move more quickly on solid surfaces or in water than previous generations of soft robots. The new soft robots can also grab objects delicately, or with enough strength to lift heavy objects.

“Cheetahs are the fastest creatures on land, and they derive their speed and power from the flexing of their spines,” says Jie Yin, an assistant professor of mechanical and aerospace engineering at North Carolina State University and corresponding author of a paper on the new soft robots.

“We were inspired by the cheetah to create a type of soft robot that has a spring-powered, ‘bistable’ spine, meaning that the robot has two stable states,” Yin says. “We can switch between these stable states rapidly by pumping air into channels that line the soft, silicone robot. Switching between the two states releases a significant amount of energy, allowing the robot to quickly exert force against the ground. This enables the robot to gallop across the surface, meaning that its feet leave the ground.”
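The idea of a spine with two stable states that can be snapped from one to the other can be pictured with a textbook snap-through model. The sketch below is a hypothetical illustration, not the model from Yin’s paper: it assumes a one-dimensional tilted double-well energy U(x) = x⁴/4 − x²/2 − f·x, where x stands in for the spine’s configuration and f for the actuation (for example, pumped air pressure). All function names are illustrative. Ramping f up slowly keeps the state in its original well until that well disappears (at f = 2/√27 ≈ 0.385), at which point the state snaps into the other well and releases energy; when f is removed, the state stays in the new well.

```python
def energy(x: float, f: float) -> float:
    """Hypothetical tilted double-well energy: U(x) = x**4/4 - x**2/2 - f*x."""
    return x**4 / 4 - x**2 / 2 - f * x

def slope(x: float, f: float) -> float:
    """dU/dx; equilibria are where this is zero."""
    return x**3 - x - f

def relax(x: float, f: float, lr: float = 0.05, steps: int = 2000) -> float:
    """Gradient descent: let the state slide downhill into the nearest energy minimum."""
    for _ in range(steps):
        x -= lr * slope(x, f)
    return x

def ramp(x: float, forces: list[float]) -> list[float]:
    """Step the actuation f slowly, relaxing the state after each step."""
    states = []
    for f in forces:
        x = relax(x, f)
        states.append(x)
    return states

up = [i / 100 for i in range(61)]            # f rises 0.00 -> 0.60 (assumed "pumping air")
down = [i / 100 for i in range(60, -1, -1)]  # f released back to 0.00

path_up = ramp(-1.0, up)                     # start at rest in the left well, x = -1
k = next(i for i, x in enumerate(path_up) if x > 0)  # first step where the state has flipped
snap_f = up[k]
released = energy(path_up[k - 1], snap_f) - energy(path_up[k], snap_f)
path_down = ramp(path_up[-1], down)

print(f"snap-through at f = {snap_f:.2f}, energy released = {released:.3f}")
print(f"state after actuation is removed: x = {path_down[-1]:.3f}")
```

Because the state ends near x = +1 once f returns to zero, both configurations are stable at rest, and only crossing the barrier between them needs actuation. Gradient descent here is a quasi-static stand-in; capturing the actual gallop would require a dynamic model with inertia.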

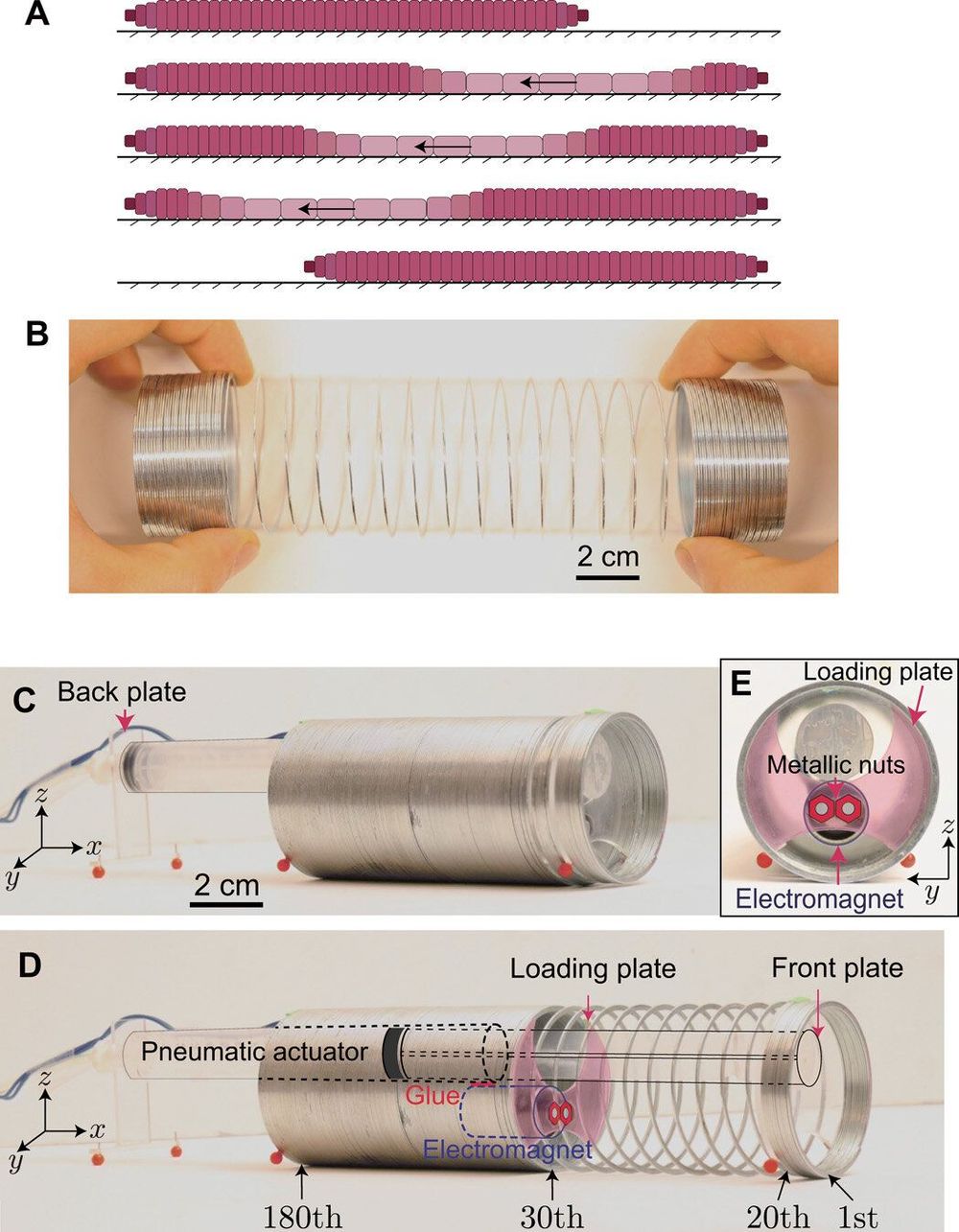

Scientists have recently explored the unique properties of nonlinear waves to enable a wide range of applications, including impact mitigation, asymmetric transmission, switching and focusing. In a new study published in Science Advances, Bolei Deng and a team of research scientists at Harvard, CNRS and the Wyss Institute for Biologically Inspired Engineering in the U.S. and France harnessed the propagation of nonlinear waves to make flexible structures crawl. They combined bioinspired experimental and theoretical methods to show that such pulse-driven locomotion reaches maximum efficiency when the initiated pulses are solitons (solitary waves). The simple machine developed in the work could move across a wide range of surfaces and could be steered. The study expands the range of possible applications of nonlinear waves and offers a new platform for flexible machines.

Flexible structures capable of large deformation are attracting interest in bioengineering due to their intriguing static response and their ability to support elastic waves of large amplitude. By carefully controlling the geometry of highly deformable systems, researchers can engineer their elastic energy landscape to propagate a variety of nonlinear waves, including vector solitons, transition waves and rarefaction pulses. The dynamic behavior of such structures demonstrates very rich physics while offering new opportunities to manipulate the propagation of mechanical signals. Such mechanisms can enable unidirectional propagation, wave guiding, mechanical logic and impact mitigation, among other applications.

In this work, Deng et al. were inspired by the retrograde peristaltic wave motion of earthworms and by the ability of linear elastic waves to generate motion in ultrasonic motors. The team showed that the propagation of nonlinear elastic waves in flexible structures can be harnessed for locomotion. As a proof of concept, they focused on a Slinky and used it to create a pulse-driven robot capable of propelling itself. They built the simple machine by connecting the Slinky to a pneumatic actuator. The team used an electromagnet and a plate embedded between the loops to initiate nonlinear pulses that propagate along the device from the front to the back, and the direction of the pulses drives the robot forward. The results indicated that such pulse-driven locomotion is most efficient with solitons: large-amplitude nonlinear pulses that travel at a constant velocity and keep a stable shape as they propagate.
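The defining property of a soliton, a pulse that keeps both its shape and its speed, can be checked numerically on the textbook Korteweg-de Vries (KdV) equation u_t + 6·u·u_x + u_xxx = 0. This is an assumed stand-in chosen for illustration, not the model Deng et al. used for the Slinky. The sketch evaluates the standard one-soliton solution u = (c/2)·sech²(√c·(x − ct)/2) and uses central finite differences to confirm that it satisfies the equation. The function names are hypothetical. Note that the peak height c/2 is tied to the speed c, so taller pulses travel faster, a hallmark of nonlinear waves.

```python
import math

def kdv_soliton(x: float, t: float, c: float, x0: float = 0.0) -> float:
    """One-soliton solution of u_t + 6*u*u_x + u_xxx = 0 travelling at speed c > 0."""
    xi = 0.5 * math.sqrt(c) * (x - c * t - x0)
    return 0.5 * c / math.cosh(xi) ** 2

def kdv_residual(x: float, t: float, c: float, h: float = 1e-3) -> float:
    """Central-difference estimate of u_t + 6*u*u_x + u_xxx; near zero for a true solution."""
    def u(xx: float, tt: float) -> float:
        return kdv_soliton(xx, tt, c)
    u_t = (u(x, t + h) - u(x, t - h)) / (2 * h)
    u_x = (u(x + h, t) - u(x - h, t)) / (2 * h)
    u_xxx = (u(x + 2 * h, t) - 2 * u(x + h, t)
             + 2 * u(x - h, t) - u(x - 2 * h, t)) / (2 * h**3)
    return u_t + 6 * u(x, t) * u_x + u_xxx

for c in (1.0, 2.0):
    # Largest residual over a grid of points x = -10.0 .. 10.0 at time t = 0.5.
    worst = max(abs(kdv_residual(x / 4, 0.5, c)) for x in range(-40, 41))
    # The pulse at time t is the t = 0 pulse shifted by c*t: same shape, same height.
    drift = kdv_soliton(3.0 + c * 2.0, 2.0, c) - kdv_soliton(3.0, 0.0, c)
    print(f"c={c}: peak={c / 2}, max residual={worst:.1e}, shape drift={drift:.1e}")
```

Doubling c doubles the peak height and the speed together. A linear wave, by contrast, would have a speed independent of its amplitude.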