There are some tasks that traditional robots, the rigid and metallic kind, simply aren’t cut out for. Soft-bodied robots, on the other hand, may be able to interact with people more safely or slip into tight spaces with ease. But for robots to reliably complete their programmed duties, they need to know the whereabouts of all their body parts. That’s a tall order for a soft robot that can deform in a virtually infinite number of ways.

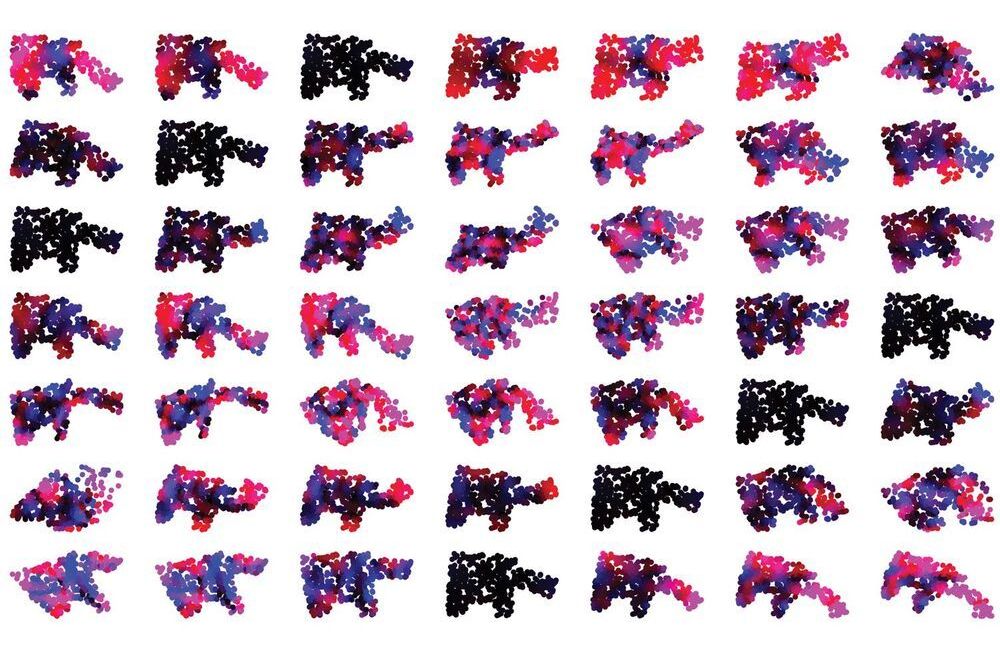

MIT researchers have developed an algorithm to help engineers design soft robots that collect more useful information about their surroundings. The deep-learning algorithm suggests an optimized placement of sensors within the robot’s body, allowing it to better interact with its environment and complete assigned tasks. The advance is a step toward the automation of robot design. “The system not only learns a given task, but also how to best design the robot to solve that task,” says Alexander Amini. “Sensor placement is a very difficult problem to solve. So, having this solution is extremely exciting.”

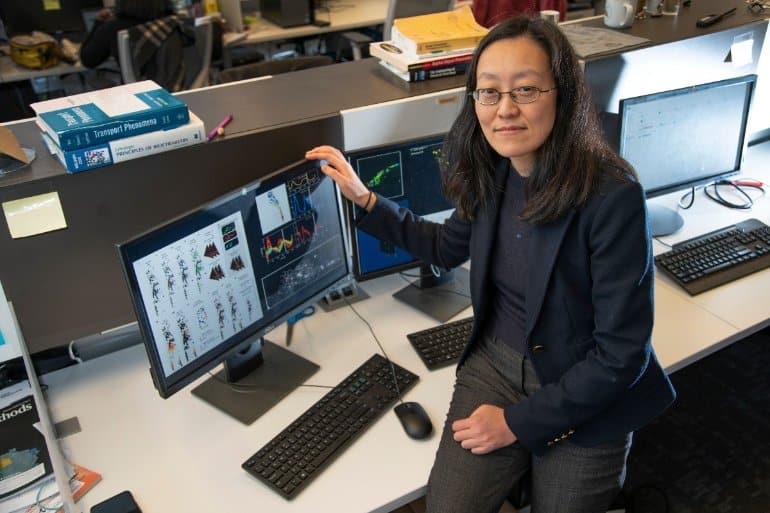

The research will be presented during April’s IEEE International Conference on Soft Robotics and will be published in the journal IEEE Robotics and Automation Letters. Co-lead authors are Amini and Andrew Spielberg, both PhD students in MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL). Other co-authors include MIT PhD student Lillian Chin and professors Wojciech Matusik and Daniela Rus.