“DEATH DEFANGED: Cryonics, Cryothanasia and the Future of Sentience” with David Pearce, Author, Philosopher and Well-Known Transhumanist

This service will be on ZOOM and YouTube Live Stream only, not in person.

Zoom at 6:00 PM Eastern Time.

Live Stream at 7:00 PM Eastern Time.

Stay after the close of the YouTube stream for the Zoom After Party until ??:00 pm. Enjoy fellowship in extended discussions with Neal and Immortalists & Friends from Around the World, sharing bold ideas on health, longevity, and technology!

(Note: Mr. Pearce was scheduled to give a presentation last month, but due to circumstances beyond our control it did not take place. We look forward to having David Pearce join us this month!)

David Pearce is the author of four major works: “The Hedonistic Imperative”, “The Biointelligence Explosion: How Recursively Self-Improving Organic Robots will Modify their Own Source Code and Bootstrap Our Way to Full-Spectrum Superintelligence”, “Singularity Hypotheses: A Scientific and Philosophical Assessment”, & “Can Biotechnology Abolish Suffering?”

The Hedonistic Imperative (1995) advocates the use of biotechnology to abolish suffering throughout the living world. In 1998, he co-founded the World Transhumanist Association (H+) with Nick Bostrom. Transhumanists believe in the use of technology to overcome our biological limitations.

Currently, Pearce is a fellow of the Institute for Ethics and Emerging Technologies and sits on the futurist advisory board of the Lifeboat Foundation. He is also the director of bioethics of Invincible Wellbeing and sits on the advisory boards of the Center on Long-Term Risk, the Organization for the Prevention of Intense Suffering and, since 2021, the Qualia Research Institute.

Please share this event with someone you care about. Would you like to make a donation to Perpetual Life? Your donations help us grow and improve our services. To donate, go to our website http://Perpetual.Life and use the PayPal button at the top right of the page. Thank you for your generous donations; we appreciate them immensely!

“Our task is to make nature, the blind force of nature, into an instrument of universal resuscitation and to become a union of immortal beings.”

- Nikolai F. Fedorov

We hold faith in the technologies & discoveries of humanity to END AGING and defeat involuntary death within our lifetime. Working to Save Lives with Age Reversal Education.

========== Perpetual Life Creed ==========

We believe that all of life is sacred and that we have been given this one life to make unlimited. We believe in our Creator’s divine plan for all of humanity to have infinite lifespans in perfect health and eternal joy, rendering death to be optional. By following our Gospel we achieve eternal life, creating a heaven here on earth. We follow Nikolai Fyodorov, who taught that the transcendence of the creator will only be solved when humanity in our unified efforts become an instrument of universal resuscitation, when the divine word becomes our divine action. And we follow Arthur C. Clarke, who said, “The only way to discover the limits of the possible is to go beyond them into the impossible.”

And so, we enter each day energized in Spirit and empowered by the words of our prophets to live in joy, serving our creator and all of mankind, Forever and Ever.

==========

Wishing you Perfect Health and Great Longevity!

Perpetual Life, a science-faith-based church, is open to people of all faiths & belief systems. We are non-denominational & non-judgmental and a central gathering place of Immortalists & Transhumans. What unites us is our common faith, belief and desire in Unlimited Life Spans. We are located in Pompano Beach, FL, at 950 South Cypress Road.


A.I. Wars, The Fermi Paradox and Great Filters with David Brin

Why we need AI to compete against each other. Does a Great Filter stop all alien civilizations at some point? Are we doomed if we find life in our solar system?

David Brin is a scientist, speaker, technical consultant and world-known author. His novels have been New York Times bestsellers and have won multiple Hugo, Nebula and other awards.

A 1997 movie directed by Kevin Costner was loosely based on his book The Postman.

He earned an undergraduate degree in astrophysics from Caltech and a master's in optics, followed by a Ph.D. in physics from UCSD. He was a postdoctoral fellow at the California Space Institute and the Jet Propulsion Laboratory.

Brin serves on advisory committees dealing with subjects as diverse as national defense and homeland security, astronomy and space exploration, SETI and nanotechnology, future/prediction and philanthropy. He has served since 2010 on the council of external advisers for NASA’s Innovative and Advanced Concepts group (NIAC), which supports the most inventive and potentially ground-breaking new endeavors.

https://www.davidbrin.com/books.html

https://twitter.com/DavidBrin

https://www.newsweek.com/soon-humanity-wont-alone-universe-opinion-1717446

YouTube Membership: https://www.youtube.com/channel/UCz3qvETKooktNgCvvheuQDw/join

Podcast: https://anchor.fm/john-michael-godier/subscribe

Apple: https://apple.co/3CS7rjT

More JMG

https://www.youtube.com/c/JohnMichaelGodier

Want to support the channel?

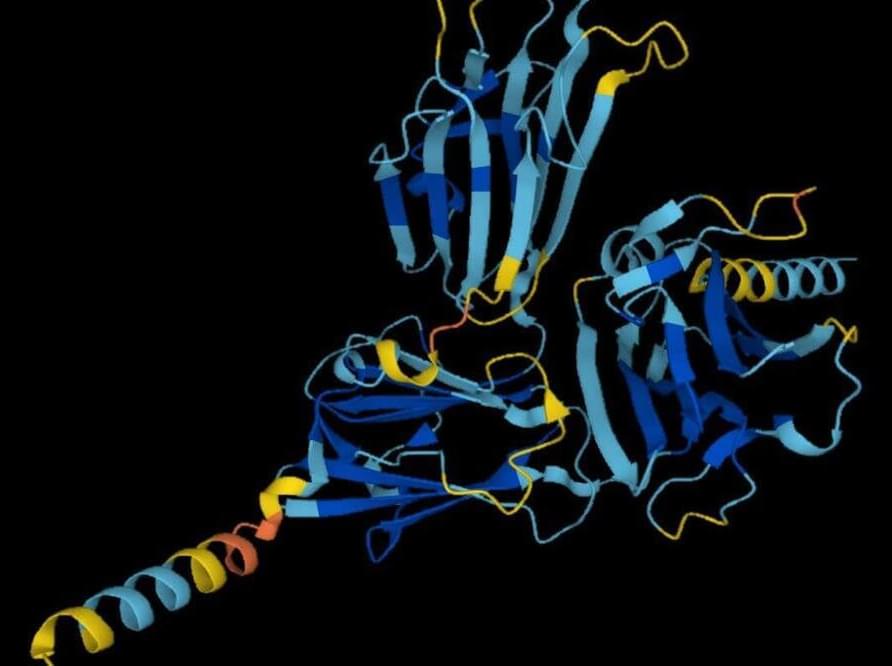

DeepMind’s protein-folding AI cracks biology’s biggest problem

DeepMind has predicted the structure of almost every protein so far catalogued by science, cracking one of the grand challenges of biology in just 18 months thanks to an artificial intelligence called AlphaFold. Researchers say that the work has already led to advances in combating malaria, antibiotic resistance and plastic waste, and could speed up the discovery of new drugs.

Determining the crumpled shapes of proteins based on their sequences of constituent amino acids has been a persistent problem for decades in biology. Some of these amino acids are attracted to others, some are repelled by water, and the chains form intricate shapes that are hard to accurately determine.


Columbia Engineering Roboticists Discover Alternative Physics

A new AI program observed physical phenomena and uncovered relevant variables—a necessary precursor to any physics theory.

A new type of soft robotic actuator that can be scaled down to just one centimeter

A team of researchers at Istituto Italiano di Tecnologia’s Bioinspired Soft Robotics Laboratory has developed a new pleat-based soft robotic actuator that can be used in a variety of sizes, down to just 1 centimeter. In their paper published in the journal Science Robotics, the group describes the technology behind their new actuator and how well it worked when they tested it under varied circumstances.

Engineers working on soft robotics projects have often found themselves constrained by standard pneumatic artificial muscle actuators, which tend to work well only at a given size because of their large number of complex parts. In this new effort, the researchers added pleats to such actuators, a feature that reduces the number of parts needed and allows the actuator to be made smaller.

Pneumatic artificial muscle actuators work by pumping air in and out of small balloon-like sacs, simulating muscle activity. Not only do they expand and contract, but they are also bendable because they are made from resins. When used in conjunction with other parts, such as hands, the artificial muscles allow for gripping and twisting. To reduce the number of complex parts, the researchers modified the sacs by adding pleats. The pleats let the sacs shrink as air is withdrawn without the need for other parts, making them useful in much smaller devices. The researchers also used a resin that was more flexible than those typically used in such work.

An AI May Have Just Invented “Alternative” Physics

An AI shown videos of physical phenomena and instructed to identify the variables involved produced answers different from our own.

DayDreamer: An algorithm to quickly teach robots new behaviors in the real world

Training robots to complete tasks in the real world can be a very time-consuming process, which involves building a fast and efficient simulator, performing numerous trials on it, and then transferring the behaviors learned during these trials to the real world. In many cases, however, the performance achieved in simulation does not match that attained in the real world, due to unpredictable changes in the environment or task.

Researchers at the University of California, Berkeley (UC Berkeley) have recently developed DayDreamer, a tool that could be used to train robots to complete real-world tasks more effectively. Their approach, introduced in a paper pre-published on arXiv, is based on learning models of the world that allow robots to predict the outcomes of their movements and actions, reducing the need for extensive trial-and-error training in the real world.

“We wanted to build robots that continuously learn directly in the real world, without having to create a simulation environment,” Danijar Hafner, one of the researchers who carried out the study, told TechXplore. “We had only learned world models of video games before, so it was super exciting to see that the same algorithm allows robots to quickly learn in the real world, too!”
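The principle described above, learning a model of how actions change the world and then acting on the model's predictions rather than by blind trial and error, can be illustrated with a deliberately tiny sketch. It assumes a hypothetical one-dimensional system with linear dynamics; the names `TRUE_A`, `TRUE_B`, `real_step`, `collect`, `fit_model` and `plan` are all illustrative and do not come from the DayDreamer paper, which builds on far richer learned world models. This shows only the model-based idea, not the researchers' algorithm.

```python
import random

# Hypothetical "true" dynamics x' = a*x + b*u (+ noise); the learner never reads these.
TRUE_A, TRUE_B = 0.9, 0.5


def real_step(x: float, u: float) -> float:
    """One step on the stand-in 'real robot', with small sensor noise."""
    return TRUE_A * x + TRUE_B * u + random.gauss(0.0, 0.01)


def collect(n: int) -> list[tuple[float, float, float]]:
    """Record n (state, action, next_state) transitions using random actions."""
    data, x = [], 0.0
    for _ in range(n):
        u = random.uniform(-1.0, 1.0)
        x_next = real_step(x, u)
        data.append((x, u, x_next))
        x = x_next
    return data


def fit_model(data: list[tuple[float, float, float]]) -> tuple[float, float]:
    """Least-squares fit of x' = a*x + b*u by solving the 2x2 normal equations."""
    sxx = sum(x * x for x, _, _ in data)
    sxu = sum(x * u for x, u, _ in data)
    suu = sum(u * u for _, u, _ in data)
    sxy = sum(x * y for x, _, y in data)
    suy = sum(u * y for _, u, y in data)
    det = sxx * suu - sxu * sxu
    return (sxy * suu - sxu * suy) / det, (sxx * suy - sxu * sxy) / det


def plan(x: float, target: float, a: float, b: float, u_max: float = 1.0) -> float:
    """Pick the action the learned model predicts lands on target, clipped to limits."""
    u = (target - a * x) / b
    return max(-u_max, min(u_max, u))


random.seed(0)
a_hat, b_hat = fit_model(collect(200))  # learn the world model from 200 real steps

x = 0.0
for _ in range(20):  # act on model predictions instead of trial and error
    x = real_step(x, plan(x, 1.0, a_hat, b_hat))
```

After 200 random interactions the fitted `a_hat` and `b_hat` sit close to the hidden values, and the controller steers the state to the target within a few steps. Replacing the linear fit with a learned neural network and the one-step controller with a learned policy moves toward the kind of system the article describes, but that extension is beyond this sketch.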

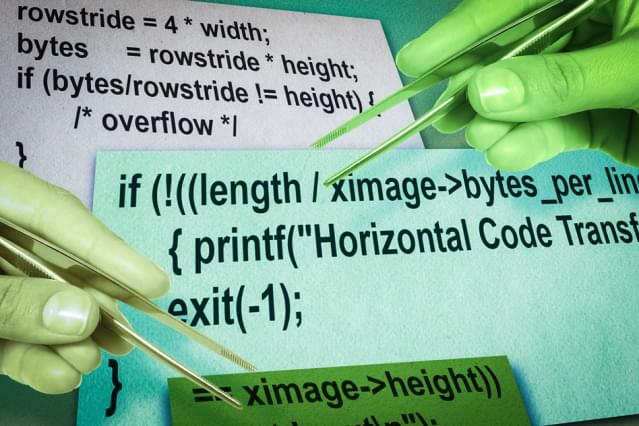

MIT system can fix your software bugs on its own (by borrowing from other software)

Circa 2015

New software being developed at MIT is proving able to autonomously repair software bugs by borrowing from other programs and across different programming languages, without requiring access to the source code. This could save developers thousands of hours of programming time and lead to much more stable software.

Bugs are the bane of the software developer’s life. The changes needed to fix them are often trivial, typically involving only a few lines of code, but identifying exactly which lines need to be fixed can be very time-consuming and often very frustrating, particularly in larger projects.

But now, new software from MIT could take care of this, and more. The system, dubbed CodePhage, can fix bugs which have to do with variable checks, and could soon be expanded to fix many more types of mistakes. Remarkably, according to MIT researcher Stelios Sidiroglou-Douskos, the software can do this kind of dynamic code translation and transplant (dubbed “horizontal code transplant,” from the analogous process in genetics) without needing access to the source code and across different programming languages, by analyzing the executable file directly.
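To make “horizontal code transplant” concrete, here is a conceptual sketch in Python. It assumes a hypothetical recipient function whose buffer-size computation silently wraps around like unsigned 32-bit C arithmetic, and a hypothetical donor check that rejects inputs which would overflow. The names `recipient_alloc_size`, `donor_check` and `transplant` are illustrative only. CodePhage itself works on compiled binaries and locates and translates the donor check automatically; here the “transplant” is simply a wrapper that runs the donor's check before the recipient's code.

```python
import functools
from typing import Callable

MASK32 = 0xFFFFFFFF  # emulate unsigned 32-bit arithmetic


def recipient_alloc_size(width: int, height: int) -> int:
    """Hypothetical buggy recipient: the size wraps silently on large inputs."""
    return (width * height * 4) & MASK32


def donor_check(width: int, height: int) -> bool:
    """Hypothetical check from a donor program: reject sizes that overflow 32 bits."""
    return width * height * 4 <= MASK32


def transplant(check: Callable[..., bool], func: Callable[..., int]) -> Callable[..., int]:
    """Insert the donor's check in front of the recipient's vulnerable code."""
    @functools.wraps(func)
    def patched(*args: int) -> int:
        if not check(*args):
            raise ValueError("input rejected by transplanted check")
        return func(*args)
    return patched


patched_alloc = transplant(donor_check, recipient_alloc_size)
```

Ordinary inputs behave exactly as before (`patched_alloc(100, 100)` still returns 40000), while an input such as 70000 × 70000, which makes the unpatched function return a too-small wrapped size, is now rejected before the faulty computation runs.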

Artificial Intelligence Discovers Alternative Physics

A new Columbia University