
A recent debate at Oxford University has given scientists reason to take artificial intelligence seriously. In it, an AI was asked for its views on the future and on whether the emergence of AI is ethical.

The AI that answered the questions, Megatron, was created by a team at Nvidia. It was trained on all of Wikipedia, 63 million English news articles, and 38 gigabytes of Reddit conversations.

This data shaped the opinions it expressed. Human participants also took part in the debate, and when they argued that AI will not have an ethical future, Megatron responded in a way that alarmed those present.

Millions of children log into chat rooms every day to talk with other children. One of these “children” could well be a man posing as a 12-year-old girl, with far more sinister intentions than chatting about “My Little Pony” episodes.

Inventor and NTNU professor Patrick Bours is working to prevent exactly this type of predatory behavior. AiBA, a company Bours helped found, offers an AI-based digital moderator that uses behavioral biometrics and algorithms to detect sexual abusers in online chats with children.

And now, as recently reported by the national financial newspaper Dagens Næringsliv, the company has raised NOK 7.5 million from investors including Firda and Wiski Capital, two Norway-based firms.

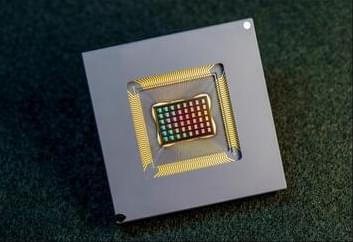

A team of researchers in the US and China has designed and built a neuromorphic AI chip using resistive RAM (RRAM), also known as memristors.

The 48-core NeuRRAM chip, developed at the University of California San Diego, is twice as energy efficient as other compute-in-memory chips and delivers results as accurate as those of conventional digital chips.

Computing with RRAM is not new in itself, and many startups and research groups are working on the technology. However, it has generally come at the cost of reduced accuracy in the computations performed on the chip and limited flexibility in the chip's architecture.
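Compute-in-memory with RRAM generally works by storing each weight of a neural-network layer as a device's conductance and applying the layer's inputs as voltages. By Ohm's law each device passes a current proportional to weight × input, and by Kirchhoff's current law the currents on a shared column wire add up, so the column currents form a matrix-vector product. The pure-Python sketch below simulates an idealized crossbar under those assumptions. It is not NeuRRAM's design: the function name `crossbar_mvm` is hypothetical, and the Gaussian `noise_std` term is an assumed stand-in for the analog imprecision behind the accuracy loss described above. Real chips also need peripheral circuits, such as analog-to-digital converters, that are not modeled here.

```python
import random


def crossbar_mvm(conductances, voltages, noise_std=0.0, seed=None):
    """Simulate an idealized resistive crossbar computing a matrix-vector product.

    conductances[i][j] is the conductance of the device at row i, column j
    (it stores one weight); voltages[i] is the input applied to row i.
    Each device contributes I = G * V (Ohm's law), and the currents on each
    column wire sum (Kirchhoff's current law), so column j returns
    sum_i G[i][j] * V[i].

    noise_std is a hypothetical relative standard deviation for device-to-device
    variation, modeled as multiplicative Gaussian noise on each conductance.
    """
    if len(conductances) != len(voltages):
        raise ValueError("need one input voltage per crossbar row")
    rng = random.Random(seed)
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for row, v in zip(conductances, voltages):
        for j, g in enumerate(row):
            if noise_std > 0:
                # Perturb the stored weight to mimic analog imprecision.
                g *= 1.0 + rng.gauss(0.0, noise_std)
            currents[j] += g * v
    return currents


G = [[1.0, 2.0],
     [3.0, 4.0]]  # illustrative conductances (arbitrary units)
V = [1.0, 0.5]    # illustrative input voltages (arbitrary units)

ideal = crossbar_mvm(G, V)  # [2.5, 4.0]
noisy = crossbar_mvm(G, V, noise_std=0.05, seed=0)
print(ideal, noisy)
```

With `noise_std=0.0` the result matches the exact digital product; any non-zero value perturbs the stored weights, so the analog answer drifts from the digital one.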


Inworld AI has raised $50 million for its developer platform for creating AI-driven virtual characters in video games and the metaverse.

The firm raised the money in March and is only now announcing it. It has also hired special effects and entertainment pioneer John Gaeta as its chief creative officer. The company's aim is to populate games with smarter computer-controlled characters, so that players can hold longer conversations with them and the game world feels far more immersive.


AI adoption may be rising steadily, but a closer look suggests that most enterprises are not yet ready to deploy artificial intelligence at scale.

Recent data from Palo Alto, California-based AI unicorn SambaNova Systems, for example, shows that more than two-thirds of organizations think AI will cut costs by automating processes and making more efficient use of employees. But only 18% are rolling out large-scale, enterprise-class AI initiatives. The rest are introducing AI piecemeal across individual programs rather than risking an investment in large-scale adoption.

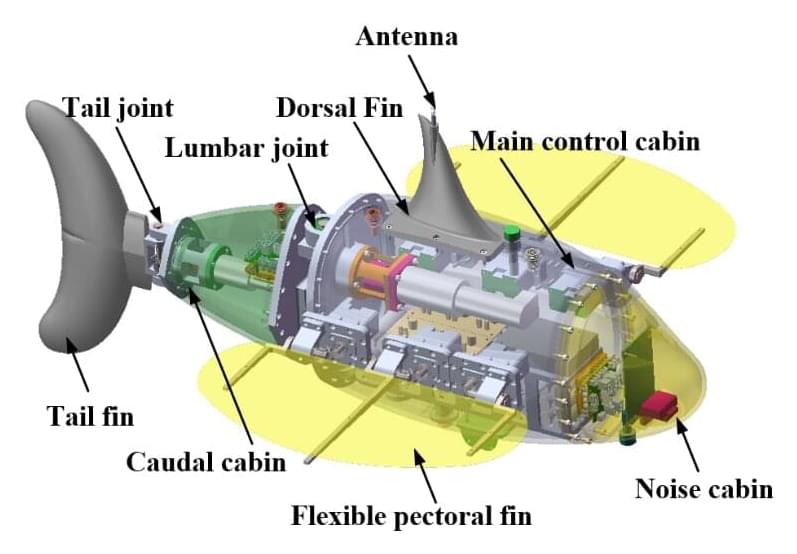

The RobDact is a bionic underwater vehicle inspired by the flying gurnards (family Dactylopteridae), fish known for their enlarged pectoral fins. A research team combined computational fluid dynamics (CFD) with force-measurement experiments to build an accurate hydrodynamic model of the RobDact, which allows them to control the vehicle more precisely.

The team published their findings in Cyborg and Bionic Systems on May 31, 2022.

Underwater robots are now used for many marine tasks, including fishing, underwater exploration, and mapping. Most traditional underwater robots are propeller-driven, which is effective for cruising in open water at a steady speed. However, underwater robots often need to move or hover at low speeds in turbulent water while performing a specific task, and a propeller struggles to move the robot in these conditions. A further problem at low speeds in unsteady flow is the propeller's “twitching” movement, which generates unpredictable fluid pulses that reduce the robot's efficiency.
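The article does not say what form the RobDact's hydrodynamic model takes. As a hedged illustration of how force measurements (or CFD results) can be turned into a model, the sketch below assumes the simplest common case, a quasi-steady quadratic force law F = k·v·|v|, and fits the coefficient k by least squares. The function names, the model form, and the sample data are all hypothetical; this is not the team's model, and one for a fin-driven vehicle would be considerably richer.

```python
def fit_quadratic_coefficient(speeds, forces):
    """Least-squares fit of k in the assumed model F = k * v * |v|.

    With x_i = v_i * |v_i|, minimizing sum_i (F_i - k * x_i)**2 gives
    k = sum(x_i * F_i) / sum(x_i**2).
    """
    if len(speeds) != len(forces) or not speeds:
        raise ValueError("need equally sized, non-empty speed and force lists")
    xs = [v * abs(v) for v in speeds]
    denom = sum(x * x for x in xs)
    if denom == 0:
        raise ValueError("at least one non-zero speed is required")
    return sum(x * f for x, f in zip(xs, forces)) / denom


def predict_force(k, speed):
    """Force predicted by the fitted model; its sign follows the speed."""
    return k * speed * abs(speed)


# Hypothetical (speed, force) samples, e.g. from tank tests or CFD runs.
speeds = [0.1, 0.2, 0.3]
forces = [0.02, 0.08, 0.18]

k = fit_quadratic_coefficient(speeds, forces)  # approximately 2.0
print(k, predict_force(k, 0.25))
```

Pooling CFD and measured samples in a single fit is one simple way to combine the two sources, though not necessarily the one the RobDact team used.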

In recent years, roboticists have developed a wide variety of robotic systems with different body structures and capabilities. Most of these robots are made either of hard materials, such as metals, or of soft materials, such as silicone and other rubbery materials.

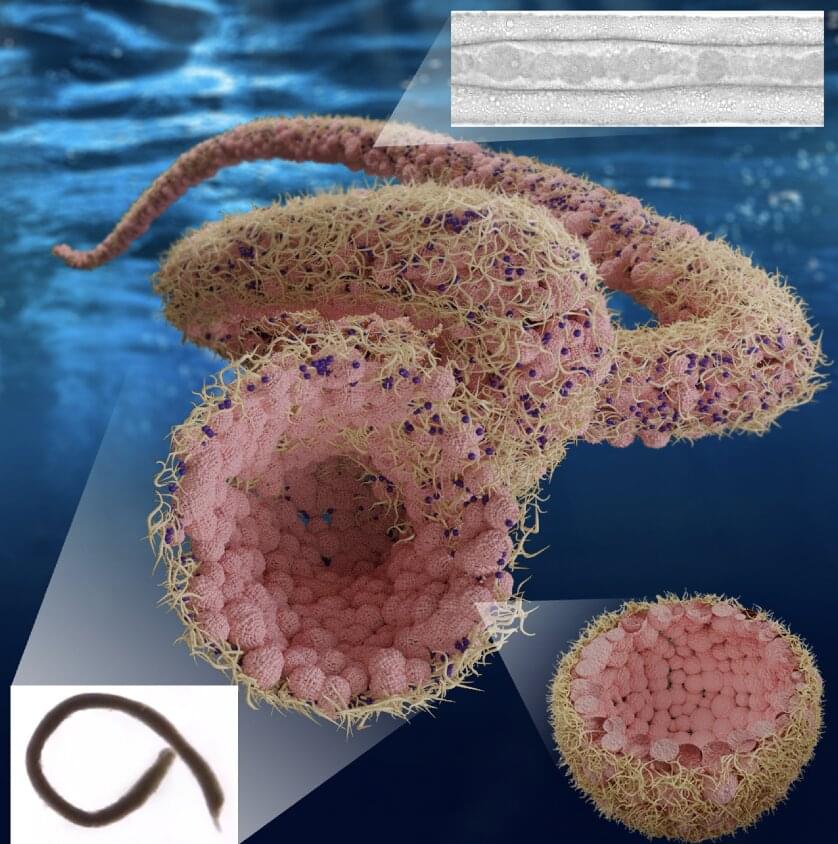

Researchers at the University of Hong Kong (HKU) and Lawrence Berkeley National Laboratory have recently created Aquabots, a new class of soft robots made predominantly of liquids. Because most biological systems consist largely of water or other aqueous solutions, the new robots, introduced in a paper published in ACS Nano, could have highly valuable biomedical and environmental applications.

“We have been engaged in the development of adaptive interfacial assemblies of materials at the oil-water and water-water interfaces using nanoparticles and polyelectrolytes,” Ho Cheung (Anderson) Shum, Thomas P. Russell, and Shipei Zhu told TechXplore via email. “Our idea was to assemble the materials at the interface, and the assemblies lock in the shapes of the liquids. The shapes are dictated using external forces to generate arbitrary shapes or to use all-liquid 3D printing to be able to spatially organize the assemblies.”

Most materials—from rubber bands to steel beams—thin out as they are stretched, but engineers can use origami’s interlocking ridges and precise folds to reverse this tendency and build devices that grow wider as they are pulled apart.

Researchers increasingly use this kind of technique, drawn from the ancient art of origami, to design spacecraft components, medical robots and antenna arrays. However, much of the work has progressed via instinct and trial and error. Now, researchers from Princeton Engineering and Georgia Tech have developed a general formula that analyzes how structures can be configured to thin, remain unaffected, or thicken as they are stretched, pushed or bent.

Kon-Well Wang, a professor of mechanical engineering at the University of Michigan who was not involved in the research, called the work “elegant and extremely intriguing.”
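For the stretching case, whether a structure thins, keeps its width, or widens is conventionally captured by Poisson's ratio, nu = -(transverse strain) / (axial strain). It is positive for ordinary materials that thin, zero when the width does not change, and negative for so-called auxetic structures that grow wider as they are pulled. The sketch below only computes and classifies this textbook quantity from before-and-after dimensions; it is not the general formula the Princeton and Georgia Tech researchers developed, it does not cover pushing or bending, and the function names are hypothetical.

```python
def poisson_ratio(length0, length1, width0, width1):
    """Poisson's ratio nu = -(transverse strain) / (axial strain).

    Strains are engineering strains: (new - original) / original.
    """
    axial = (length1 - length0) / length0
    if axial == 0:
        raise ValueError("axial strain must be non-zero")
    transverse = (width1 - width0) / width0
    return -transverse / axial


def classify(nu, tol=1e-9):
    """Describe how a stretched sample's width responds, given nu."""
    if nu > tol:
        return "thins"
    if nu < -tol:
        return "thickens (auxetic)"
    return "unchanged"


# Illustrative samples stretched from length 100 to 110 (arbitrary units).
for width_after in (9.8, 10.0, 10.5):
    nu = poisson_ratio(100.0, 110.0, 10.0, width_after)
    print(width_after, round(nu, 3), classify(nu))
```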