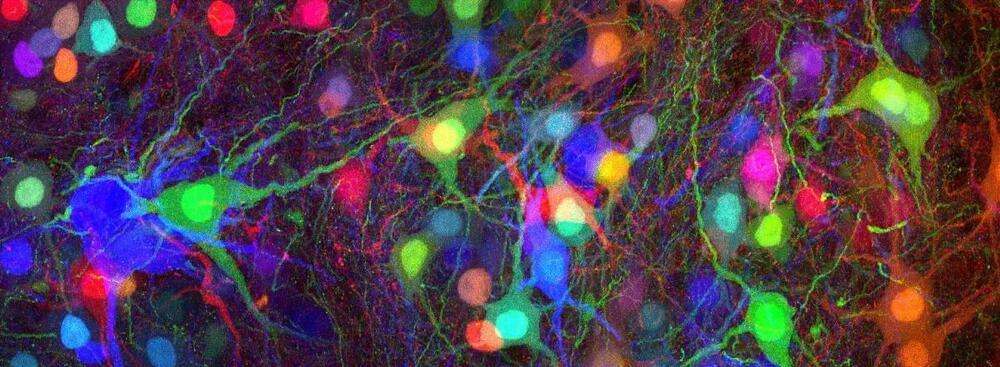

HBP researchers have trained a large-scale model of the primary visual cortex of the mouse to solve visual tasks in a highly robust way. The model provides the basis for a new generation of neural network models. Due to their versatility and energy-efficient processing, these models can contribute to advances in neuromorphic computing.

Modeling the brain can have a massive impact on artificial intelligence (AI). Because the brain processes images far more energy-efficiently than artificial networks do, scientists draw on neuroscience to build neural networks that work like biological ones and consume significantly less energy.

In that sense, brain-inspired neural networks are likely to shape future technology by serving as blueprints for visual processing in more energy-efficient neuromorphic hardware. Now, a study by Human Brain Project (HBP) researchers from the Graz University of Technology (Austria) has shown how a large, data-based model can reproduce a number of the brain’s visual processing capabilities in a versatile and accurate way. The results were published in the journal Science Advances.