The finding could help scientists achieve reliable room-temperature superconductivity, which would help pave the way for innovations like levitating trains.

Category: physics – Page 404

South Pole’s next generation of discovery — By Carla Reiter | University of Chicago

“Later this year, during what passes for summer in Antarctica, a group of Chicago scientists will arrive at the Amundsen–Scott South Pole research station to install a new and enhanced instrument designed to plumb the earliest history of the cosmos.”

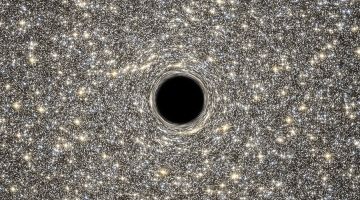

Hawking’s latest black-hole paper splits physicists

I do indeed think it solves the firewall problem, kind of trivially so. I am somewhat puzzled they didn’t even mention this in their paper.

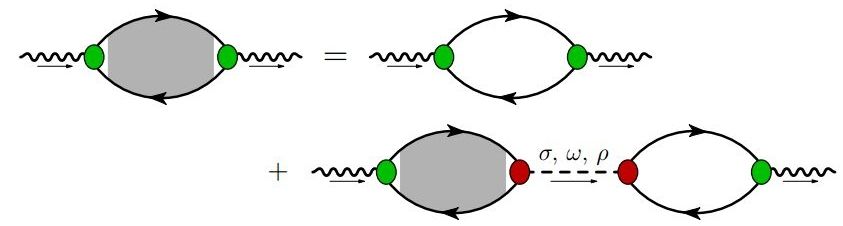

Why a new physics theory could rewrite the textbooks

Scientists are closer to changing everything we know about one of the basic building blocks of the universe, according to an international group of physics experts involving the University of Adelaide.

How Time Could Move Backwards In Parallel Universes

Understanding time is one of the big open questions of physics, and it has puzzled philosophers throughout history. What is time? Why does it appear to have a direction? The concept is known as the “arrow of time,” which is used to indicate that time is asymmetric – even though most laws of the universe are perfectly symmetric.

A potential explanation for this has now been put forward. Physicist Sean Carroll from Caltech and cosmologist Alan Guth from MIT created a simulation that shows that arrows of time can arise naturally from a perfectly symmetric system of equations.

The arrow of time comes from observing that time does indeed seem to pass for us and that the direction of time is consistent with the increase in entropy in the universe. Entropy is the measure of the disorder of the world; an intact egg has less entropy than a broken one, and if we see a broken egg, we know that it used to be unbroken. Our experience tells us that broken eggs don’t jump back together, that ice cubes melt, and that tidying up a room requires a lot more energy than making it messy.

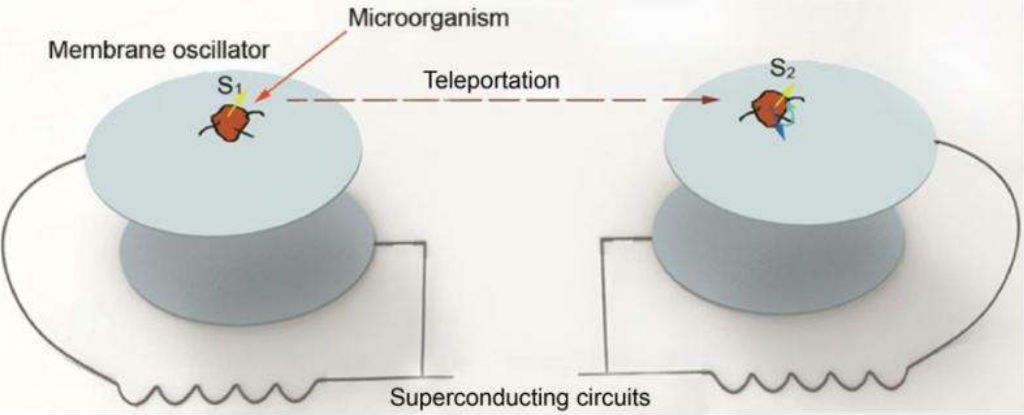

Physicists propose new method to teleport the memory of a living creature

While the possibility of teleporting entire objects from one place to another like they do in the movies is way beyond our current — and near-future — capabilities, the same can’t be said for the memory of our existence.

Graphene ‘optical capacitors’ can make chips that mesh biophysics and semiconductors

Graphene’s properties make it a tantalizing target for semiconductor research. Now a team from Princeton has shown that flakes of graphene can work as fast, accurate optical capacitors for laser transistors in neuromorphic circuits.

It’s possible that there is a “mirror universe” where time moves backwards, say scientists

Although we experience time in one direction—we all get older, we have records of the past but not the future—there’s nothing in the laws of physics that insists time must move forward.

In trying to solve the puzzle of why time moves in a certain direction, many physicists have settled on entropy, the level of molecular disorder in a system, which continually increases. But two separate groups of prominent physicists are working on models that examine the initial conditions that might have created the arrow of time, and both seem to show time moving in two different directions.

When the Big Bang created our universe, these physicists believe it also created an inverse mirror universe where time moves in the opposite direction. From our perspective, time in the parallel universe moves backward. But anyone in the parallel universe would perceive our universe’s time as moving backward.

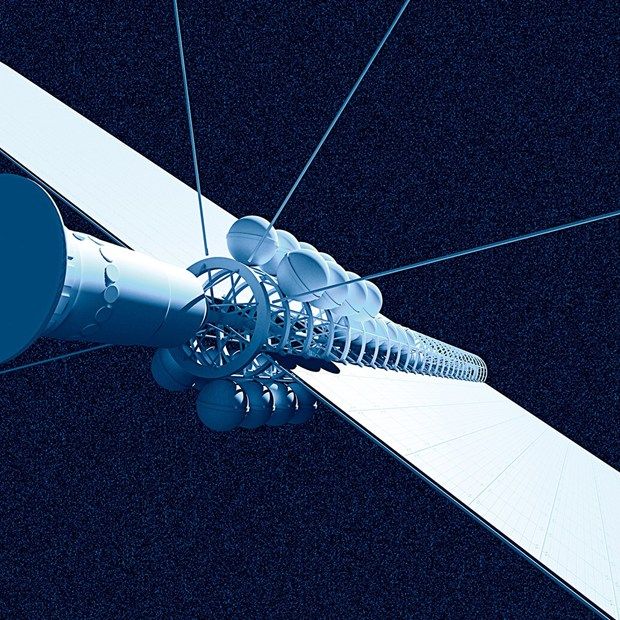

Physicists hope for interstellar travel

Asteroid mining and space tourism are all well and good, but a network of researchers around the world is thinking bigger when it comes to space exploration: interstellar travel.