Researchers Robert Hazen and Michael Wong have put forward a bold new law of nature — one that could explain how everything in the universe evolves, from atoms, minerals and stars to living cells, ecosystems and even human civilization. At the heart of their theory is the idea that information is as fundamental to the cosmos as mass, energy or charge. Their law revolves around a concept called functional information — a measure of the ratcheting-up of complexity and function in evolving systems over time.
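Hazen and colleagues define functional information for a given function as I(E_x) = -log2[F(E_x)]. Here F(E_x) is the fraction of all possible configurations of a system that achieve a degree of function of at least E_x. The sketch below computes that quantity by brute force for a hypothetical toy system: 10-bit strings whose "function" is how many bits match an arbitrary target. The target, the scoring rule and the helper names are illustrative assumptions, not part of Hazen and Wong's work.

```python
import itertools
import math
from typing import Callable, Iterable, Sequence


def functional_information(
    configurations: Iterable[Sequence[int]],
    degree_of_function: Callable[[Sequence[int]], float],
    threshold: float,
) -> float:
    """I(E_x) = -log2(F(E_x)), where F(E_x) is the fraction of configurations
    whose degree of function is at least the threshold E_x."""
    total = 0
    meeting = 0
    for config in configurations:
        total += 1
        if degree_of_function(config) >= threshold:
            meeting += 1
    if meeting == 0:
        raise ValueError("no configuration reaches the threshold; I(E_x) is undefined")
    # log2(total / meeting) equals -log2(meeting / total) but avoids printing -0.0
    return math.log2(total / meeting)


# Hypothetical toy system: every 10-bit string is a possible configuration,
# and its "degree of function" is how many bits match an arbitrary target.
TARGET = (1, 0, 1, 1, 0, 0, 1, 0, 1, 1)


def matches_target(config: Sequence[int]) -> int:
    return sum(a == b for a, b in zip(config, TARGET))


def all_configs() -> Iterable[tuple]:
    return itertools.product((0, 1), repeat=len(TARGET))


for e_x in (0, 5, 8, 10):
    bits = functional_information(all_configs(), matches_target, e_x)
    print(f"E_x = {e_x:2d}: I = {bits:.3f} bits")
```

Raising the threshold shrinks the fraction of configurations that qualify, so functional information climbs. It is 0 bits when every string counts and 10 bits when only the exact target does.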

Wild New Theory Suggests Gravitational Waves Shaped The Universe

Just as ocean waves shape our shores, ripples in space-time may have once set the Universe on an evolutionary path that led to the cosmos as we see it today.

A new theory suggests gravitational waves – rather than hypothetical particles called inflatons – drove the Universe’s early expansion, and the redistribution of matter therein.

“For decades, we have tried to understand the early moments of the Universe using models based on elements we have never observed,” explains the first author of the paper, theoretical astrophysicist Raúl Jiménez of the University of Barcelona.

10x increase in atom array size boosts China’s quantum leap

Chinese researchers unveil 10x larger atom array for next-gen quantum processors.

Scientists in China have made a significant advance in quantum physics.

A team of researchers has built what it describes as the largest array of atoms yet assembled for quantum computing.

The system, a key building block for quantum computers, can reportedly create atom arrays 10 times larger than previous systems.

Scientists Now Propose that the Far Away Galaxies JWST Spotted Could be from Another Universe

Some scientists now propose that our universe might have been born inside a massive black hole within a larger parent cosmos. In their model, the universe before ours followed the same laws of physics we know today, expanding for billions of years before gravity overcame that outward push. Space began to contract, galaxies moved closer, and the cosmos collapsed toward extreme densities. Instead of ending in a singularity where physics breaks down, quantum effects pushed back against gravity, halting the collapse and triggering a cosmic rebound. That bounce could have launched our own universe’s expansion, making the Big Bang not the true beginning, but a continuation.

This idea draws on the Pauli exclusion principle and the degeneracy pressure it produces, which on smaller scales keeps white dwarfs and neutron stars from collapsing indefinitely. The same resistance, applied on a universe-wide scale, could halt total collapse inside a black hole. Simulations suggest such a process could occur without invoking exotic new particles or forces. In this framework, the formation of our universe is a purely gravitational event. It is governed by physics we already understand, operating under extreme conditions beyond anything we have directly observed.
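The degeneracy pressure invoked here has a standard textbook form. For a cold, non-relativistic electron gas of number density n_e, P = (3π²)^(2/3) ħ² n_e^(5/3) / (5 m_e), which depends on density but not on temperature. The sketch evaluates it at an assumed, illustrative white-dwarf-like density of 10^8 kg/m³ with two nucleons per electron. It illustrates the stellar case the paragraph cites and does not model the proposed cosmic bounce.

```python
import math

# Physical constants (SI units, CODATA 2018 values)
HBAR = 1.054571817e-34   # reduced Planck constant, J s
M_E = 9.1093837015e-31   # electron mass, kg
M_U = 1.66053906660e-27  # atomic mass unit, kg


def electron_density(rho: float, mu_e: float = 2.0) -> float:
    """Electron number density (m^-3) for mass density rho (kg/m^3),
    assuming mu_e nucleons per electron (about 2 for carbon/oxygen matter)."""
    return rho / (mu_e * M_U)


def degeneracy_pressure(n_e: float) -> float:
    """Pressure (Pa) of a cold, non-relativistic degenerate electron gas."""
    return (3 * math.pi ** 2) ** (2 / 3) / 5 * HBAR ** 2 / M_E * n_e ** (5 / 3)


rho = 1e8  # kg/m^3: an assumed, illustrative white-dwarf-like density
n_e = electron_density(rho)
p = degeneracy_pressure(n_e)
print(f"n_e = {n_e:.2e} m^-3, P = {p:.2e} Pa")

# Compressing the gas to twice the density multiplies the pressure by 2^(5/3)
print(f"pressure ratio at double density: {degeneracy_pressure(2 * n_e) / p:.3f}")
```

The n^(5/3) scaling is why compression is resisted: squeezing the gas raises the pressure faster than the density. At higher densities the electrons become relativistic and the scaling softens to n^(4/3). That softening is what sets the Chandrasekhar mass limit for white dwarfs.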

One striking prediction is that ancient relics from the parent universe could have survived the bounce. These might include primordial black holes or neutron stars that predate our own cosmos. If detected, especially in the early universe, they could serve as evidence that a cosmic bounce occurred. The James Webb Space Telescope’s discovery of unexpectedly massive galaxies soon after the Big Bang could align with this idea, as such galaxies may have formed more easily if early black holes were already present to seed them.

Recent JWST findings on how galaxies spin across the universe may also fit the model. If confirmed, these patterns could point toward a shared origin and support the possibility that we live inside a black hole. While the concept remains controversial, it offers a potential bridge between general relativity and quantum mechanics, challenging the assumption that singularities are inevitable and suggesting that the life cycle of universes may be far more connected than we thought.

The first experimental realization of quantum optical skyrmions in a semiconductor QED system

Skyrmions are localized, particle-like excitations in materials that retain their structure due to topological constraints (i.e., restrictions arising from properties that remain unchanged under smooth deformations). These quasiparticles, first introduced in high-energy physics and quantum field theory, have since attracted intense interest in condensed matter physics and photonics, owing to their potential as robust carriers for information storage and manipulation.
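The "topological constraints" can be made concrete with the skyrmion number Q = (1/4π) ∫ n · (∂n/∂x × ∂n/∂y) dx dy. Q counts how many times a unit-vector texture n(x, y) wraps around the sphere. Because Q is an integer for any texture that is uniform far from its core, smooth deformations cannot change it. The sketch below builds a generic Néel-type skyrmion with an assumed tanh radial profile and integrates Q numerically. It is a classical continuum texture for illustration, not a model of the photonic quantum skyrmions in the study described below.

```python
import numpy as np


def skyrmion_number(n: np.ndarray, dx: float, dy: float) -> float:
    """Q = (1/4pi) * sum of n . (dn/dx x dn/dy) dx dy for a unit-vector
    field n of shape (ny, nx, 3) sampled on a uniform grid."""
    dn_dy, dn_dx = np.gradient(n, dy, dx, axis=(0, 1))
    density = np.einsum("ijk,ijk->ij", n, np.cross(dn_dx, dn_dy))
    return float(density.sum() * dx * dy / (4 * np.pi))


def neel_skyrmion(size: int = 241, half_width: float = 6.0, radius: float = 1.0):
    """Unit-vector texture pointing down at the core and up far away.
    The tanh radial profile is an assumption chosen for illustration."""
    coords = np.linspace(-half_width, half_width, size)
    x, y = np.meshgrid(coords, coords)           # x varies along axis 1, y along axis 0
    r = np.hypot(x, y)
    phi = np.arctan2(y, x)
    theta = np.pi * (1.0 - np.tanh(r / radius))  # polar angle: pi at r = 0, -> 0 as r grows
    n = np.stack(
        [np.sin(theta) * np.cos(phi), np.sin(theta) * np.sin(phi), np.cos(theta)],
        axis=-1,
    )
    return n, coords[1] - coords[0]


n, h = neel_skyrmion()
print(f"Q = {skyrmion_number(n, h, h):.4f}")

# A smooth deformation (tilt every vector, then renormalize) leaves Q unchanged.
tilted = n + np.array([0.2, 0.1, 0.0])
tilted /= np.linalg.norm(tilted, axis=-1, keepdims=True)
print(f"Q after deformation = {skyrmion_number(tilted, h, h):.4f}")
```

With this sign convention the core-down texture gives Q close to -1. Flipping the core direction or the orientation convention gives +1, but no smooth distortion can move the value off that integer.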

Researchers at Sun Yat-sen University and Tianjin University recently reported the first experimental realization of single-photon quantum skyrmions (i.e., localized light structures) in a semiconductor cavity quantum electrodynamics (QED) system. Their paper, published in Nature Physics, could open new possibilities for the study of quantum light-matter interactions, while also contributing to the advancement of photonic quantum devices.

“Our work was motivated by the longstanding challenge of realizing topological photonic structures—specifically skyrmions—at the quantum level,” Ying Yu, co-senior author of the paper, told Phys.org.

The shape of the universe revealed through algebraic geometry

How can the behavior of elementary particles and the structure of the entire universe be described using the same mathematical concepts? This question is at the heart of recent work by the mathematicians Claudia Fevola from Inria Saclay and Anna-Laura Sattelberger from the Max Planck Institute for Mathematics in the Sciences, recently published in the Notices of the American Mathematical Society.

Mathematics and physics share a close, reciprocal relationship. Mathematics offers the language and tools to describe physical phenomena, while physics drives the development of new mathematical ideas. This interplay remains vital in areas such as quantum field theory and cosmology, where advanced mathematical structures and physical theory evolve together.

In their article, the authors explore how algebraic structures and geometric shapes can help us understand phenomena ranging from particle collisions, such as those in particle accelerators, to the large-scale architecture of the cosmos. Their research centers on algebraic geometry. Their recent work also connects to positive geometry, a novel interdisciplinary field of mathematics driven by new ideas in particle physics and cosmology.

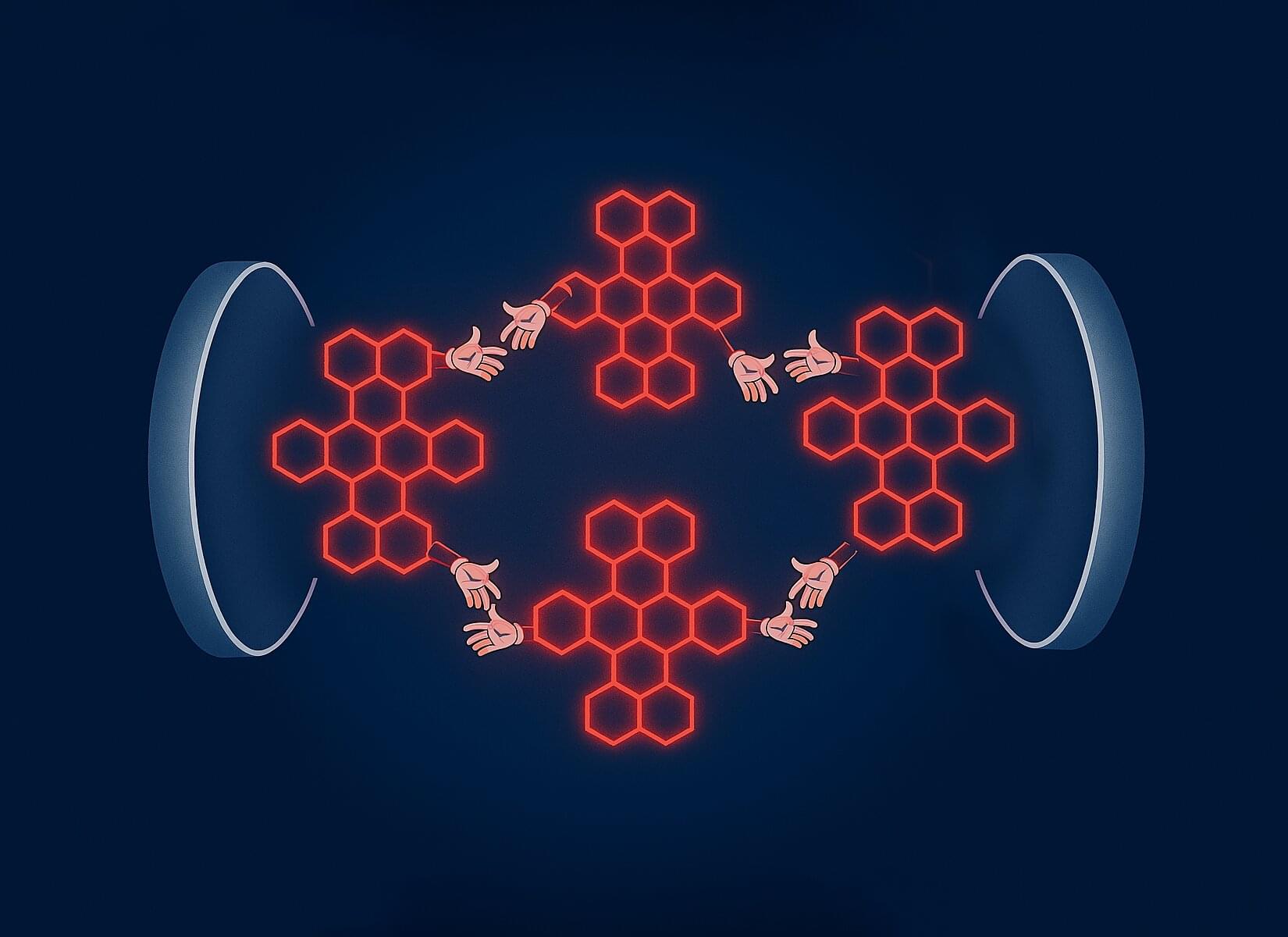

Molecular hybridization achieved through quantum vacuum manipulation

Interactions between atoms and molecules are mediated by electromagnetic fields. The greater the distance between the partners, the weaker these interactions become. For particles to form natural chemical bonds, the distance between them usually has to be roughly equal to their diameter.

Using an optical resonator which strongly alters the quantum vacuum, scientists at the Max Planck Institute for the Science of Light (MPL) have succeeded for the first time in optically “bonding” several molecules at greater distances. The physicists are thus experimentally creating synthetic states of coupled molecules, thereby establishing the foundation for the development of new hybrid light-matter states. The study is published in the journal Proceedings of the National Academy of Sciences.

Atoms and molecules have clearly defined, discrete energy levels. When they combine to form a new molecule, these energy states change. This process, known as molecular hybridization, is characterized by the overlap of electron orbitals, i.e., the regions where electrons are most likely to be found. However, at separations of just a few nanometers, the interaction becomes so weak that the molecules can no longer communicate with each other.
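A minimal way to see how coupling reshapes energy levels is the textbook two-level model. Levels E1 and E2 coupled with strength g form the matrix H = [[E1, g], [g, E2]], whose eigenvalues repel; on resonance (E1 = E2) they split by exactly 2g. The sketch pairs this with a near-field dipole-dipole coupling that falls off as 1/r³, in arbitrary units with a hypothetical reference distance r0. It is a generic illustration of why direct coupling fades with distance. It does not model the MPL experiment, where the optical resonator is what enables coupling at larger distances.

```python
import numpy as np


def hybridized_levels(e1: float, e2: float, g: float) -> np.ndarray:
    """Eigenenergies (ascending) of two levels e1, e2 coupled with strength g."""
    hamiltonian = np.array([[e1, g], [g, e2]], dtype=float)
    return np.linalg.eigvalsh(hamiltonian)


def dipole_coupling(r: float, g0: float = 1.0, r0: float = 1.0) -> float:
    """Hypothetical near-field dipole-dipole coupling, scaling as (r0 / r)^3."""
    return g0 * (r0 / r) ** 3


# Two identical (resonant) molecules at increasing separation, arbitrary units.
for r in (1.0, 2.0, 5.0, 10.0):
    lower, upper = hybridized_levels(1.0, 1.0, dipole_coupling(r))
    print(f"r = {r:4.1f} r0: level splitting = {upper - lower:.4f}")
```

On resonance the splitting equals 2g, so a tenfold increase in separation shrinks it a thousandfold. That is the sense in which distant molecules stop "communicating" unless something else mediates the coupling.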

Unlocking the sun’s secret messengers: DUNE experiment set to reveal new details about solar neutrinos

Neutrinos, ghostly particles that rarely interact with normal matter, are the sun's secret messengers. These particles are born deep within the sun as a byproduct of the nuclear fusion that powers all stars.

Neutrinos escape the sun and stream through Earth in immense quantities. These particles are imprinted with information about the inner workings of the sun.
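"Immense quantities" can be checked with back-of-the-envelope arithmetic. Converting four protons into one helium-4 nucleus releases about 26.7 MeV and emits two electron neutrinos. Dividing the Sun's luminosity by 26.7 MeV therefore gives the helium production rate. Doubling that rate and spreading it over a sphere of radius 1 AU gives the flux at Earth. The sketch ignores the small share of energy the neutrinos themselves carry away, so treat the result as an estimate.

```python
import math

L_SUN = 3.828e26            # solar luminosity, W (IAU nominal value)
AU = 1.495978707e11         # mean Earth-Sun distance, m
MEV = 1.602176634e-13       # joules per MeV
Q_PP = 26.7 * MEV           # approximate energy released per 4p -> He-4 conversion
NU_PER_HE4 = 2              # electron neutrinos emitted per helium-4 nucleus formed


def solar_neutrino_flux_cm2() -> float:
    """Rough solar neutrino flux at Earth, in neutrinos per cm^2 per second."""
    helium_rate = L_SUN / Q_PP                  # He-4 nuclei produced per second
    neutrino_rate = NU_PER_HE4 * helium_rate    # neutrinos emitted per second
    flux_m2 = neutrino_rate / (4 * math.pi * AU ** 2)
    return flux_m2 / 1e4                        # convert m^-2 to cm^-2


print(f"{solar_neutrino_flux_cm2():.2e} neutrinos per cm^2 per second")
```

The estimate comes out at roughly 6 × 10^10 neutrinos crossing each square centimetre every second.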

Our new theoretical paper published in Physical Review Letters shows that the Deep Underground Neutrino Experiment (DUNE), currently under construction, will help us unlock the deepest secrets of these solar messengers.

Columbia’s radiation-proof chip built to decode the universe at CERN

A new specialized, radiation-hardened chip has been designed for CERN’s Large Hadron Collider (LHC) upgrade.

Engineers at Columbia University have developed this analog-to-digital converter (ADC) chip.

The custom-designed chips will be used in the ATLAS detector to measure up to 1.5 billion particle collisions per second.
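An ADC's job is to turn a continuous detector voltage into a discrete number. The sketch below shows an ideal, uniform n-bit quantizer. The bit depth and input range in the example are hypothetical; they are not the specifications of the Columbia chip, which are not given here.

```python
def ideal_adc(voltage: float, v_min: float, v_max: float, bits: int) -> int:
    """Ideal uniform quantizer: map a voltage to an unsigned `bits`-bit code."""
    if bits < 1 or v_max <= v_min:
        raise ValueError("need bits >= 1 and v_max > v_min")
    levels = 2 ** bits
    v = min(max(voltage, v_min), v_max)              # saturate outside the input range
    code = int((v - v_min) / (v_max - v_min) * levels)
    return min(code, levels - 1)                     # v == v_max maps to the top code


def lsb_volts(v_min: float, v_max: float, bits: int) -> float:
    """Voltage width of one code step (least significant bit)."""
    return (v_max - v_min) / 2 ** bits


# Hypothetical 12-bit converter with a 0 to 1.2 V input range.
for v in (0.0, 0.25, 0.6, 1.2):
    print(f"{v:.2f} V -> code {ideal_adc(v, 0.0, 1.2, 12)}")
print(f"LSB = {lsb_volts(0.0, 1.2, 12) * 1e3:.3f} mV")
```

Each additional bit halves the step size. In this hypothetical 12-bit, 1.2 V example, one step is about 0.29 mV.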

Scientists Say the Universe Might Be a HOAX — Here’s Why

Which leads us to a strange but necessary question:

If the universe is just structure — just syntax — then where’s the meaning?

Because that’s what we’ve been trying to find all along, isn’t it? Not just patterns. Not just formulas. But something behind it. Something in it. A message. A cause. A reason why anything is the way it is. Something we could point to and say, “There — that’s what it’s all about.”

3:04 The Illusion of Physical Reality — Is Anything Really There?

10:16 Quantum Mechanics — When Reality Stops Making Sense.

18:04 The Holographic Principle — A Universe Made of Information.

26:24 Quantum Fields, Not Particles — The Fabric Beneath Matter.

33:29 Emergence — Time, Space, and Matter Are Not Fundamental.

41:49 Simulation Theory — But with a Physics Twist.

49:12 Quantum Gravity and the End of Local Reality.

57:29 Consciousness and the Collapse of Reality.

1:06:11 The “It from Bit” Hypothesis.

1:15:37 Experimental Clues — When the Universe Disobeys Logic.

1:23:46 If the Universe Isn’t Real, What Are We?

1:33:13 Could Physics Be Telling Us There’s No ‘There’ There?

1:39:33 Is the Universe a Language Without a Speaker?

1:46:53 So… What’s Left? Do We Actually Exist?

1:52:07 The Ultimate Twist — Could “Nothing” Be the Most Real Thing?

1:57:07 What If the Universe Is the Biggest Illusion Ever Constructed?

If you keep peeling everything back, does anything actually remain?

That’s the uncomfortable part. Because there’s a difference between saying “nothing exists the way we thought” and saying “nothing exists at all.” The first is about interpretation. The second is about presence. One reframes reality. The other questions whether there’s anything there to reframe.