Machines lace almost all the social, political, cultural and economic issues currently being discussed. Why, you ask? Because we live in a world whose modern economies and demographic trends pivot around machines and factories at every scale.

We have reached a stage in the evolution of our civilization where we cannot fathom a day without machines or automated processes. Machines are used not only in manufacturing and agriculture but also in fields like healthcare, electronics and other areas of research. Although machines of varying types entered the industrial landscape long ago, technologies like nanotechnology, the Internet of Things and Big Data have altered the scenario in an unprecedented manner.

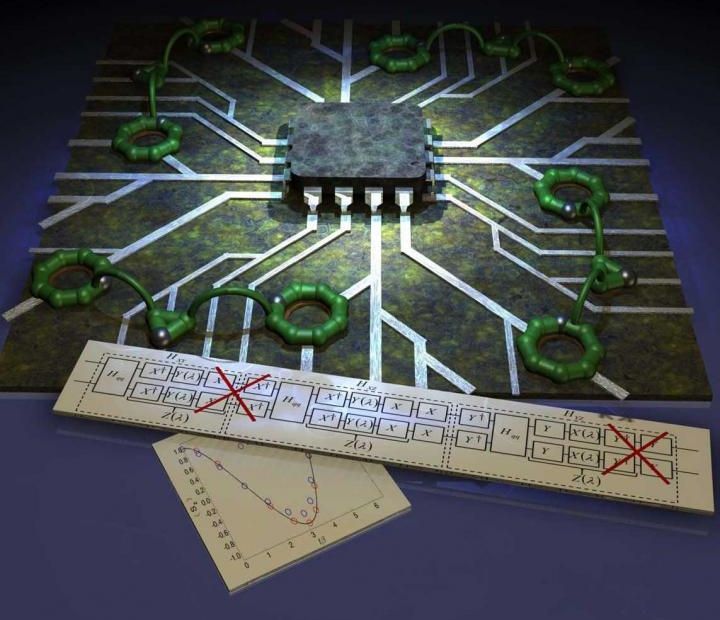

The fusion of nanotechnology with conventional mechanical concepts gives rise to the idea of ‘molecular machines’. Foreseen to be a stepping stone to a nano-scale industrial revolution, these microscopic machines are molecules designed with movable parts that behave the way our regular machines do. A nano-scale motor that spins in a given direction in the presence of directed heat and light would be an example of a molecular machine.