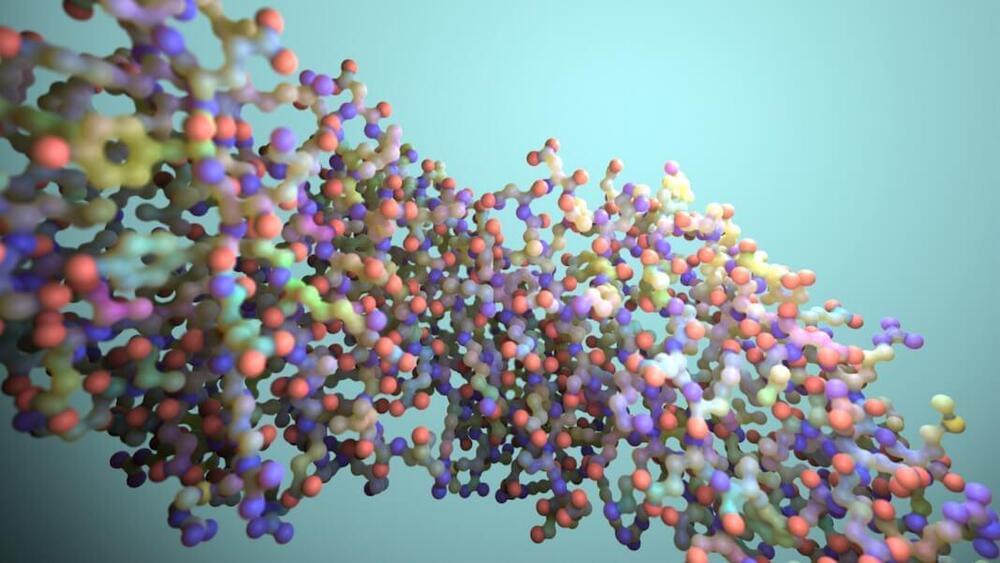

While “protein” often evokes pictures of chicken breasts, these molecules are more similar to an intricate Lego puzzle. Building a protein starts with a string of amino acids (think of a myriad of Christmas lights on a string), which then folds into a 3D structure (like rumpling the lights up for storage).

DeepMind and Baker made waves when each developed algorithms to predict the structure of any protein based on its amino acid sequence. It was no simple endeavor; the predictions were mapped at the atomic level.

Designing new proteins raises the complexity to another level. This year, Baker’s lab took a stab at it, with one effort using good old screening techniques and another relying on deep learning hallucinations. Both algorithms are extremely powerful for demystifying natural proteins and generating new ones, but they proved hard to scale up.