Quantum computing has entered a bit of an awkward period. There have been clear demonstrations that we can successfully run quantum algorithms, but the qubit counts and error rates of existing hardware mean that we can't solve any commercially useful problems at the moment. So, while many companies are interested in quantum computing and have developed software for existing hardware (and have paid for access to that hardware), their efforts have focused on preparation. They want the expertise and capability needed to develop useful software once the computers are ready to run it.

For the moment, that leaves them waiting for hardware companies to produce sufficiently robust machines—machines that don’t currently have a clear delivery date. It could be years; it could be decades. Beyond learning how to develop quantum computing software, there’s nothing obvious to do with the hardware in the meantime.

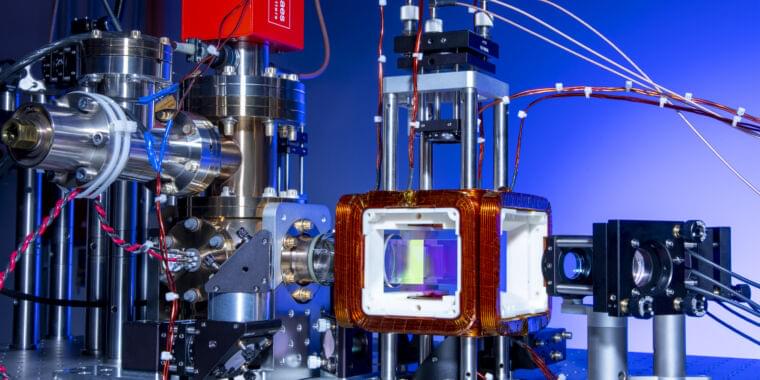

But a company called QuEra may have found something less obvious to do in the meantime. The technology it is developing could ultimately provide a route to quantum computing. Until then, however, the same hardware can be used to solve a class of mathematical problems, and any improvements to that hardware will benefit both types of computation. And in a new paper, the company's researchers have expanded the types of computations that can be run on their machine.